Graduate student Dian Li working with a robotic hand. (Credit: Melanie Gonick, MIT)

This AI Wristband Could Change How Humans Control Robots

In A Nutshell

- MIT researchers developed a wristband that uses ultrasound to track all 22 joint movements of the human hand in real time, with no cameras or finger-mounted sensors required.

- An AI algorithm processes the wrist images in milliseconds and translates hand movements into precise robotic hand control, demonstrated by playing piano and shooting a miniature basketball.

- In tests with eight participants, the wristband achieved significantly better accuracy than competing hands-free wearable systems and maintained performance a full week later without retraining.

- Current limitations include the need to train the AI separately for each individual user, but researchers believe the technology could one day help control prosthetic hands.

Move a finger, and a robotic hand across the room moves with it. Bend all four at once, and the machine follows along, note by note, key by key. A wristband developed by researchers at the Massachusetts Institute of Technology can now make that happen in real time, using ultrasound to decode detailed joint movements across the hand and relay those signals to a robotic limb.

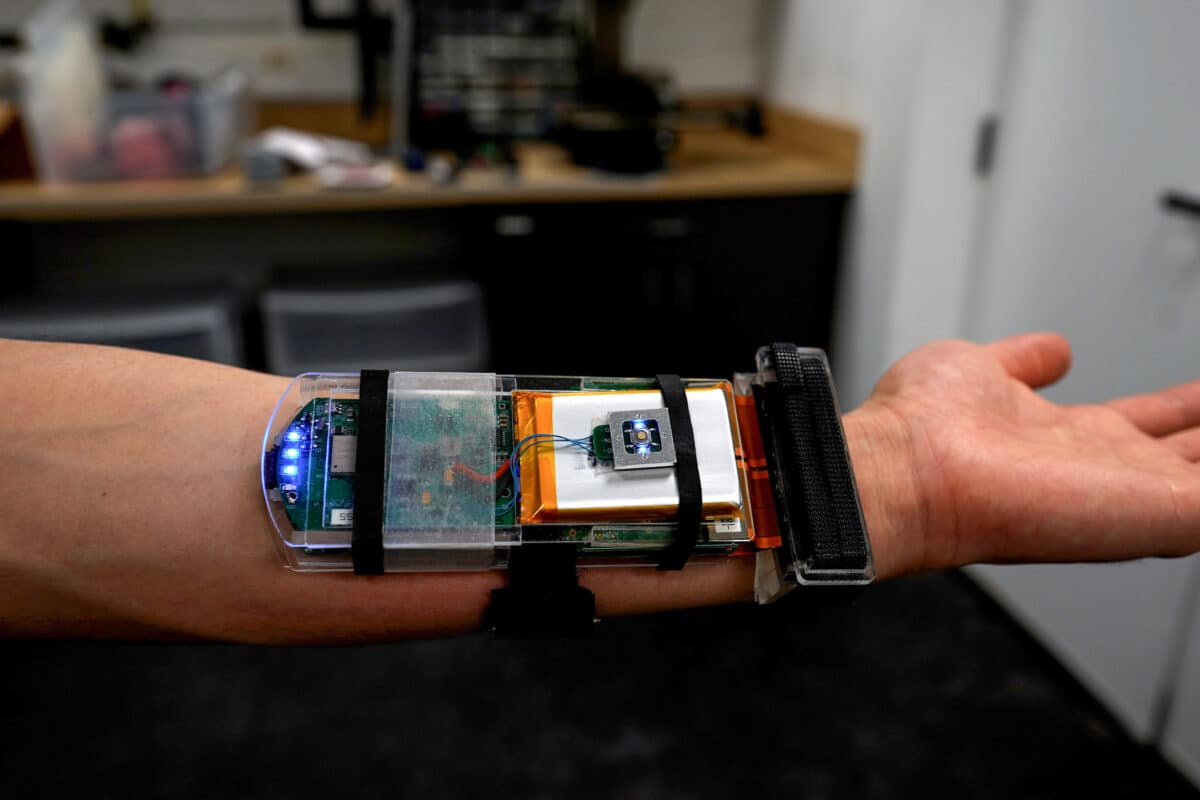

Published in the journal Nature Electronics, the research describes a wireless, AI-powered wristband that captures continuous ultrasound images of the tendons and muscles beneath the skin at the wrist. An artificial intelligence algorithm processes those images and predicts the joint angles and configuration of all five fingers and the palm simultaneously, covering 22 degrees of freedom. No cameras point at the hand, and no sensors are strapped to the fingers. Just a band on the wrist.

Robotic hands controlled by humans have long hit a stubborn wall: the human hand is remarkably dexterous, with every finger bending and rotating across multiple joints in fast, fluid, overlapping motions. Existing wearable systems that attempt to capture that complexity come up short in their own ways. Some require sensors strapped to individual finger joints, which restrict normal movement and sensation. Others rely on electrical signals picked up from arm muscles, a technique called electromyography, or EMG, but EMG systems often struggle to capture fine, continuous finger movements and typically recognize only a limited set of gestures or coarse configurations. MIT’s ultrasound wristband is built to handle all of that.

How the Ultrasound Wristband Reads Every Finger

Ultrasound, the same basic technology used to image a fetus during pregnancy, can also see inside the wrist. As tendons and muscles shift when fingers move, those shifts appear in real-time images. Researchers found that each of the 22 joint movements contributes to patterns the AI learns to interpret, allowing the system to reconstruct what the hand is doing, joint angle by joint angle, as it happens.

A small ultrasound probe, roughly the size of a thick wristwatch band, sits strapped to the forearm and captures 30 images per second. Each image is fed into an AI model that processes it in roughly 6 to 9 milliseconds and predicts the angle and configuration of every joint. Total lag from movement to output, including wireless transmission, stays under 120 milliseconds, fast enough that the response feels nearly instantaneous. A soft hydrogel layer between the probe and the skin ensures stable contact during movement, and the whole system weighs 91.6 grams. Powered by a small rechargeable battery, it can run for over an hour, or considerably longer with a larger battery pack.
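To put the timing figures above in proportion, here is a rough back-of-the-envelope sketch in Python. Only the 30 images per second, the 6 to 9 millisecond processing time, and the 120 millisecond ceiling come from the article; the helper name `timing_budget` and the way the budget is split are illustrative assumptions, since the article does not break the total lag down any further.

```python
# Figures quoted in the article.
FRAME_RATE_HZ = 30             # ultrasound images captured per second
INFERENCE_MS = (6.0, 9.0)      # reported range of AI processing time per image
TOTAL_LAG_CEILING_MS = 120.0   # reported upper bound on movement-to-output lag


def timing_budget(frame_rate_hz, inference_ms, ceiling_ms):
    """Hypothetical helper: compare inference time with the frame interval and lag ceiling."""
    frame_interval_ms = 1000.0 / frame_rate_hz   # time between consecutive images
    worst_inference_ms = max(inference_ms)       # slowest reported processing time
    return {
        "frame_interval_ms": frame_interval_ms,
        # Fraction of each frame interval spent on inference in the worst case.
        "inference_share_of_frame": worst_inference_ms / frame_interval_ms,
        # Upper bound on lag from everything other than inference (assumed split).
        "non_inference_ceiling_ms": ceiling_ms - worst_inference_ms,
    }


budget = timing_budget(FRAME_RATE_HZ, INFERENCE_MS, TOTAL_LAG_CEILING_MS)
for name, value in budget.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions, even the slowest reported processing time takes up less than a third of the roughly 33 milliseconds between images, which suggests the AI model itself is unlikely to be what limits the frame rate.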

Ultrasound Wristband Accuracy Tested Across Eight Participants

Eight participants (five men and three women) wore the wristband while performing 69 distinct hand gestures: the numbers zero through nine, all 26 American Sign Language letters, and all 33 recognized human grasp types. A 10-camera motion-capture system tracked actual hand positions simultaneously as a reference standard.

The wristband achieved an average error of about 3.78 degrees across all 22 joint angles. Competing hands-free wearable systems have shown error ranges of 7.35 to 22.58 degrees in published research and typically track only a small number of predefined motions, often fewer than eight. By both measures, the ultrasound wristband came out well ahead.
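For readers curious how an average joint-angle error of this kind can be computed, the sketch below shows one plausible version in Python. It assumes the metric is a mean absolute error between predicted and motion-capture angles, averaged over all frames and all 22 joints. The article does not specify the exact formula used in the paper, and the function name and the synthetic data are purely illustrative, not the study's measurements.

```python
from statistics import fmean

NUM_JOINTS = 22  # degrees of freedom tracked, per the article


def mean_joint_angle_error(predicted_frames, reference_frames):
    """Mean absolute error in degrees over all frames and joints (assumed metric)."""
    if len(predicted_frames) != len(reference_frames):
        raise ValueError("predicted and reference must have the same number of frames")
    errors = []
    for predicted, reference in zip(predicted_frames, reference_frames):
        if len(predicted) != NUM_JOINTS or len(reference) != NUM_JOINTS:
            raise ValueError(f"each frame must contain {NUM_JOINTS} joint angles")
        errors.extend(abs(p - r) for p, r in zip(predicted, reference))
    return fmean(errors)


# Synthetic example: two frames, every prediction off by exactly 3 degrees.
reference_frames = [[10.0 * (j % 5) for j in range(NUM_JOINTS)] for _ in range(2)]
predicted_frames = [[angle + 3.0 for angle in frame] for frame in reference_frames]

error = mean_joint_angle_error(predicted_frames, reference_frames)
print(f"mean joint-angle error: {error:.2f} degrees")
```

In the study's setup, the reference angles would come from the 10-camera motion-capture system and the predictions from the wristband's AI model.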

Participants returned one week later, having gone about their normal lives, and researchers ran the same AI model on new data collected that day. Accuracy held steady. Participants also removed and reattached the device at slightly different positions on the wrist between sessions, the kind of small variation that typically causes significant performance drops in EMG-based systems. Here, a feature built into the AI that accounts for how the band sits on the wrist kept performance largely stable.

Robotic Hand Control: From Basketball to Piano

In the robotics demonstrations, a participant wore the wristband and used it to control a programmable robotic hand in real time. First came a desktop basketball game. By bending the index finger to different angles, the wearer could precisely control how hard the robotic hand pressed a ball-launching pad. After a few attempts, the wearer found an optimal angle and the robot sank the shot.

More demanding still, participants guided the robotic hand through a piano melody. Different fingers bent to different degrees at different times, and the robotic hand pressed the corresponding keys in sequence. Because the wristband requires no camera pointed at the hand, it kept working even when the hand was hidden from view, something camera-based systems cannot do.

What made all of this possible is a capability that existing wearables simply lack. An EMG armband often reduces movements to coarse or gesture-level signals rather than precise joint angles, and it cannot follow one finger independently from the one beside it. Ultrasound imaging reads the anatomy directly, and the AI translates what it sees into precise movement data across all 22 degrees of freedom at once.

For people who have lost a hand or part of an arm, that level of resolution points toward something significant. A device worn at the residual limb could, in principle, decode intended finger movements and send them to a prosthetic hand. Researchers are candid that reaching that goal will require considerably more work, but the principle behind it is already functioning in the lab.

Paper Notes

Limitations

Several meaningful constraints come with the current version of the system. Most significantly, the AI model must be trained separately for each user using a 10-camera motion-capture setup, a process that is time-consuming and impractical at scale. Cross-user generalization, where a single pre-trained model works for any wearer without individual calibration, has not yet been achieved, though researchers suggest large-scale data collection across diverse populations could eventually make it possible. A related limitation involves simultaneous changes in muscle shape caused by both joint position and muscle force, which the system cannot yet distinguish. Further miniaturization of the hardware will also be needed before the device is practical for everyday use. All eight participants were healthy adults without neurological disorders, leaving performance in clinical populations untested.

Funding and Disclosures

This work was supported by the Massachusetts Institute of Technology through the George N. Hatsopoulos Faculty Fellowship and Uncas and Helen Whitaker Professorship; the National Institutes of Health (grant numbers 1R01HL153857-01 and 1R01HL167947-01); the National Science Foundation (grant numbers 2430106 and EFMA-1935291); and the Department of Defense Congressionally Directed Medical Research Programs (grant number PR200524P1). Revision support was provided by the National Research Foundation of Singapore through the Singapore-MIT Alliance for Research and Technology. Lead author Gengxi Lu and corresponding author Xuanhe Zhao are listed as inventors on a patent application (US Provisional Application No. 63/721,387) covering the ultrasonic hand-tracking technology. Zhao also holds financial interests in SanaHeal, Magnendo, and Sonologi. All remaining authors declared no competing interests.

Publication Details

The study was authored by Gengxi Lu, SeongHyeon Kim, Xiaoyu Chen, Yushun Zeng, Dian Li, Shu Wang, Baoqiang Liu, Shucong Li, Runze Li, Bolei Deng, Junhang Zhang, Chen Gong, Anantha P. Chandrakasan, Qifa Zhou, and Xuanhe Zhao, representing the Massachusetts Institute of Technology (Cambridge, MA) and the University of Southern California (Los Angeles, CA). Published in Nature Electronics on March 25, 2026. Title: “Hand tracking using wearable wrist imaging.” DOI: https://doi.org/10.1038/s41928-026-01594-4.