
Your AI Brainstorming Partner And Everyone Else’s Might Be Giving The Same Answers

In A Nutshell

- Researchers tested 22 AI language models and 102 humans on creativity tasks and found AI responses were far more similar to each other than human responses were to one another.

- Switching between AI tools from different companies did not restore creative diversity: the homogeneity persisted across all models tested.

- Tweaking AI settings or prompting models to “be more creative” produced only minor improvements, and cranking randomness too high caused outputs to become incoherent.

- The researchers warn that widespread AI use for creative work could gradually narrow the range of ideas produced across society, with less creative individuals most at risk.

No matter which AI chatbot someone uses to brainstorm or draft new ideas, the outputs tend to resemble each other far more than human responses do. A new study published in PNAS Nexus tested 22 different AI language models from a range of companies and found they all tended to produce similar kinds of answers. Swap ChatGPT for Gemini, or Gemini for Llama, and the results barely change. The issue may not be the specific tool, but how current AI systems are built.

That matters. According to a 2024 Adobe survey cited in the study, more than half of Americans have already used generative AI for creative tasks like brainstorming, writing, or generating images, and an overwhelming majority believe these tools will make them more creative. Researchers from Duke University and the Technion-Israel Institute of Technology suggest that belief may be misplaced.

How Researchers Measured AI Creativity Against Human Thinking

To put both humans and AI through their creative paces, the team used three standard psychological tests. The Alternative Uses Task asks participants to list as many unusual uses as possible for everyday objects like a book, a fork, or a hammer. The Forward Flow task gives participants a single starting word and asks them to write the next word that comes to mind, then the next, measuring how far the chain of thought drifts from where it began. The Divergent Association Task asks for 10 words that are as unrelated to each other as possible, with wider variety indicating stronger creative thinking.
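Both the Divergent Association Task and Forward Flow are typically scored by turning words into embedding vectors and measuring the cosine distance between them. The sketch below is a minimal illustration under that assumption, not the study's own pipeline: `dat_score` follows the commonly published recipe of averaging all pairwise distances and multiplying by 100, `forward_flow` averages each word's distance to every earlier word in the chain, and the three-dimensional vectors in `toy_words` are hypothetical stand-ins for real word embeddings.

```python
import math
from itertools import combinations


def cosine_distance(u, v):
    """1 minus the cosine similarity of two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def dat_score(vectors):
    """Divergent Association Task: mean pairwise distance, scaled by 100."""
    pairs = list(combinations(vectors, 2))
    return 100 * sum(cosine_distance(u, v) for u, v in pairs) / len(pairs)


def forward_flow(vectors):
    """Forward Flow: for each word after the first, average its distance
    to all earlier words, then average those values over the chain."""
    per_word = []
    for i in range(1, len(vectors)):
        dists = [cosine_distance(vectors[i], vectors[j]) for j in range(i)]
        per_word.append(sum(dists) / len(dists))
    return sum(per_word) / len(per_word)


# Hypothetical 3-D "embeddings"; real studies use learned word vectors.
toy_words = {
    "cat": [1.0, 0.0, 0.0],
    "dog": [0.9, 0.1, 0.0],
    "volcano": [0.0, 1.0, 0.0],
    "sonnet": [0.0, 0.0, 1.0],
}

chain = [toy_words[w] for w in ["cat", "dog", "volcano", "sonnet"]]
print(round(dat_score(chain), 1))
print(round(forward_flow(chain), 3))
```

The near-synonyms "cat" and "dog" pull both scores down; a list of words pointing in unrelated directions would push them up.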

On the human side, 102 participants were recruited through Prolific, an online platform commonly used to find research volunteers, and screened for English fluency. On the AI side, models included GPT-4o, Google Gemini 1.5, Meta’s Llama series, Mistral, Cohere Command R Plus, and Microsoft’s Phi models, among others. To keep the comparison fair, the main statistical tests drew on one representative from each distinct AI developer rather than stacking the sample with multiple versions of the same model.

Every response was measured two ways: how original it looked on its own, and how different it looked compared to everyone else’s answers, based on how far apart the ideas were in meaning. That second measurement is where the real story emerged.
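The second, population-level measurement can be illustrated by asking, for each response, how far it sits on average from every other response in the same group. This is a hypothetical sketch: the `distinctiveness` helper and the two-dimensional vectors are invented for illustration, and the article does not specify the exact statistic the researchers used.

```python
import math


def cosine_distance(u, v):
    """1 minus the cosine similarity of two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return 1.0 - dot / (math.hypot(*u) * math.hypot(*v))


def distinctiveness(responses):
    """For each response embedding, the mean distance to every other one."""
    scores = []
    for i, r in enumerate(responses):
        others = [cosine_distance(r, s) for j, s in enumerate(responses) if j != i]
        scores.append(sum(others) / len(others))
    return scores


# Hypothetical 2-D embeddings: the "human" answers point in many
# directions, while the "model" answers point in nearly the same one.
human = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.2], [0.3, -1.0]]
model = [[1.0, 0.1], [1.0, 0.2], [0.9, 0.15], [1.0, 0.05]]

for name, group in [("human", human), ("model", model)]:
    scores = distinctiveness(group)
    print(name, round(sum(scores) / len(scores), 3))
```

A larger group average means the answers are spread out; a value near zero means they cluster together, which is the pattern the study reports for AI models.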

AI Creativity Scores Look Good Until You Zoom Out

AI held its own on individual creativity scores, matching or slightly outperforming humans on two of the three tasks. At first glance, that sounds like a point in AI’s favor. But individual scores only tell part of the story.

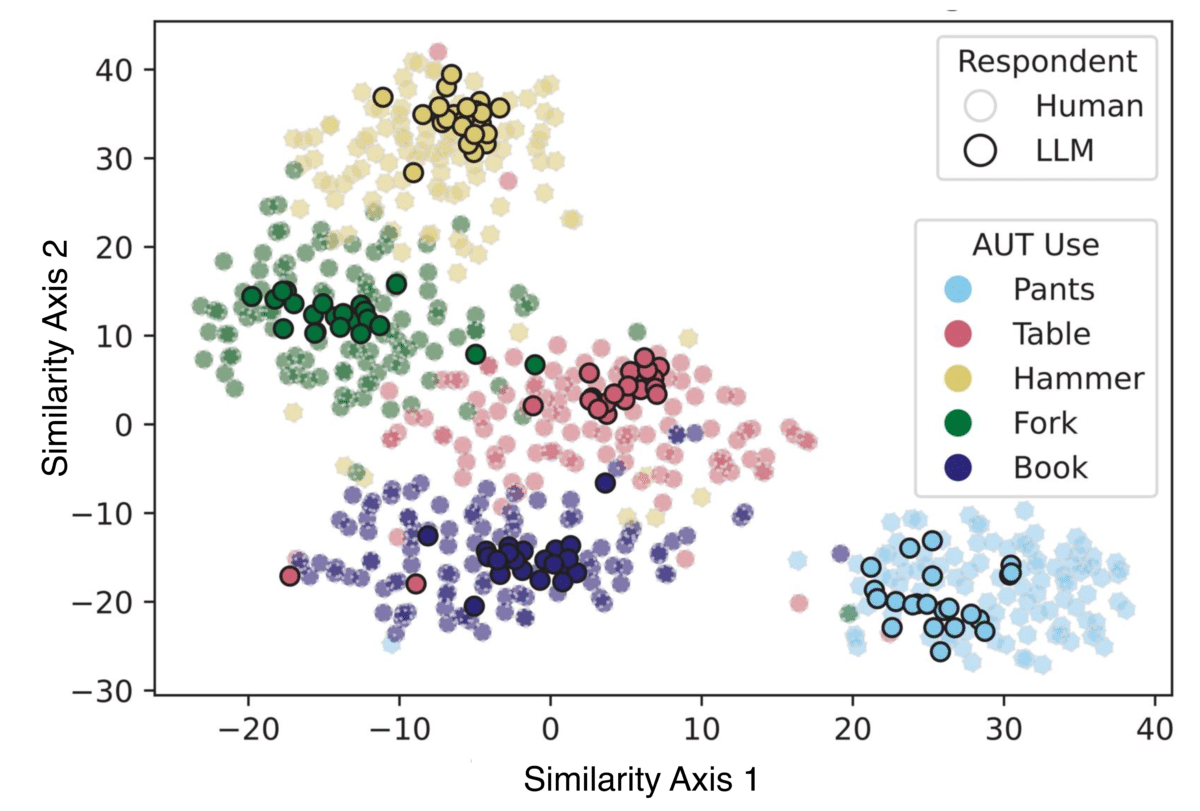

When researchers looked at how similar all the AI responses were to each other, the picture changed quickly. Across all three tests, AI answers clustered tightly together while human answers spread out across a much wider range. A visualization of the data made this stark: plot the responses on a map of meaning, and human answers scatter broadly while AI answers bunch in the same corner, regardless of which model produced them. Part of the explanation is straightforward: responses from different AI models shared far more words with each other than human responses did, a pattern that held across every task.
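Word overlap between responses can be quantified in several ways. One simple option, assumed here rather than taken from the paper, is the Jaccard index: the number of words two answers share, divided by the number of distinct words they use in total. The example answers below are invented for illustration.

```python
from itertools import combinations


def word_set(text):
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def jaccard(a, b):
    """Shared words divided by all distinct words across both sets."""
    return len(a & b) / len(a | b)


def mean_pairwise_overlap(responses):
    """Average Jaccard index over every pair of responses in a group."""
    sets = [word_set(r) for r in responses]
    pairs = list(combinations(sets, 2))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical alternative uses for a fork.
humans = [
    "comb a doll's hair",
    "prop open a tiny window",
    "scratch a message into clay",
]
models = [
    "use it as a plant marker",
    "use it as a garden plant marker",
    "use it as a marker for plants",
]

print("human overlap:", round(mean_pairwise_overlap(humans), 3))
print("model overlap:", round(mean_pairwise_overlap(models), 3))
```

The invented human answers share little beyond the word "a", while the invented model answers reuse most of the same phrasing, so their average overlap is several times higher.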

Why Switching Chatbots Won’t Restore Creative Diversity

One obvious counterargument is that homogeneity might stem from over-relying on a single AI tool, and that mixing in different models would restore variety. The study tested that directly. Models from different companies still showed similar clustering. Models within the same development family, like Meta’s various Llama versions, were even more tightly bunched. Switching brands didn’t lead to more diverse outputs.

Researchers also tried adjusting the AI’s internal settings. Raising the “temperature,” a dial that makes responses more unpredictable, did push variety higher. However, at settings high enough to meaningfully close the gap with humans, the AI’s outputs fell apart, starting coherently before dissolving into gibberish. Prompting the AI to be more creative, including one attempt that offered the model a $200 prize for the highest originality score, barely moved the needle.
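Temperature typically works by dividing the model's raw next-word scores (logits) by a constant T before a softmax converts them into probabilities. The sketch below uses that standard formulation with made-up logits, since the article does not give the models' actual scores or the temperatures tested. Raising T flattens the distribution, which adds variety but also hands more probability to unlikely, often nonsensical, words.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities after dividing by temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs):
    """Shannon entropy in bits: higher means less predictable output."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical next-word scores: two sensible words, three unlikely ones.
logits = [5.0, 4.0, 1.0, 0.5, 0.0]

for t in (0.5, 1.0, 2.0, 5.0):
    probs = softmax_with_temperature(logits, t)
    tail = sum(probs[2:])  # combined probability of the unlikely words
    print(f"T={t}: top={probs[0]:.2f}, tail={tail:.2f}, "
          f"entropy={entropy_bits(probs):.2f} bits")
```

With these hypothetical scores, the three unlikely words together receive well under 1% of the probability at T=0.5 but about 40% at T=5, one way to see why pushing temperature far enough to match human variety can tip output into gibberish.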

What AI Homogeneity Means for Human Creativity

Study authors Emily Wenger of Duke University and Yoed N. Kenett of the Technion put the broader concern plainly: “Humans who rely on LLMs [AI language models] as creative partners would find their creative outputs remarkably similar to those of other LLM users regardless of the model used, resulting in a collective narrowing of creativity.”

Research cited in the study also points to who is most at risk. Less creative individuals tend to accept AI suggestions rather than push past them toward something more original. More creative people tend to use AI differently, writing richer and more varied prompts that yield more distinctive results. Heavy AI reliance may not level the creative playing field so much as tilt it further.

The paper also raises a longer-term concern. Some research suggests AI models may be converging toward similar internal structures over time, which could mean the homogeneity found here deepens rather than resolves. The researchers note that users of AI creative tools “may be self-limited from being exhibiting [sic] the divergent creativity that defined well-recognized artistic geniuses like Tolkien, Mozart, and Picasso because their LLM ‘creative’ partners may collectively drive them toward a mean.”

Genuine creativity has always depended on people thinking differently from one another. On that front, today’s AI models, whatever the brand, appear to be pulling in the wrong direction.

Paper Notes

Limitations

This study measured creativity along a single dimension, originality as gauged by semantic distance between responses, and did not assess other recognized aspects of creative thinking such as fluency, flexibility, or elaboration. Only publicly accessible, commercially hosted AI models were tested; locally run or unaligned versions may behave differently. Human participants were recruited online, and while the researchers used multiple safeguards to screen out bots and inattentive respondents, some risk remains that participants used AI tools themselves when completing the tasks. Applying human psychological tests to AI systems also remains a debated practice, though the researchers argue that measuring response variability rather than inherent creativity makes the comparison defensible.

Funding and Disclosures

This work was funded by Duke University. The authors declare no competing interests.

Publication Details

Authors Emily Wenger (Department of Electrical and Computer Engineering, Duke University) and Yoed N. Kenett (Faculty of Data and Decision Sciences, Technion, Israel Institute of Technology) conducted the research. The paper, titled “Large language models are homogeneously creative,” was published in PNAS Nexus, Volume 5, 2026, article pgag042. DOI: https://doi.org/10.1093/pnasnexus/pgag042. Advance access publication date: March 24, 2026. A preprint is available at https://arxiv.org/pdf/2501.19361.