In A Nutshell

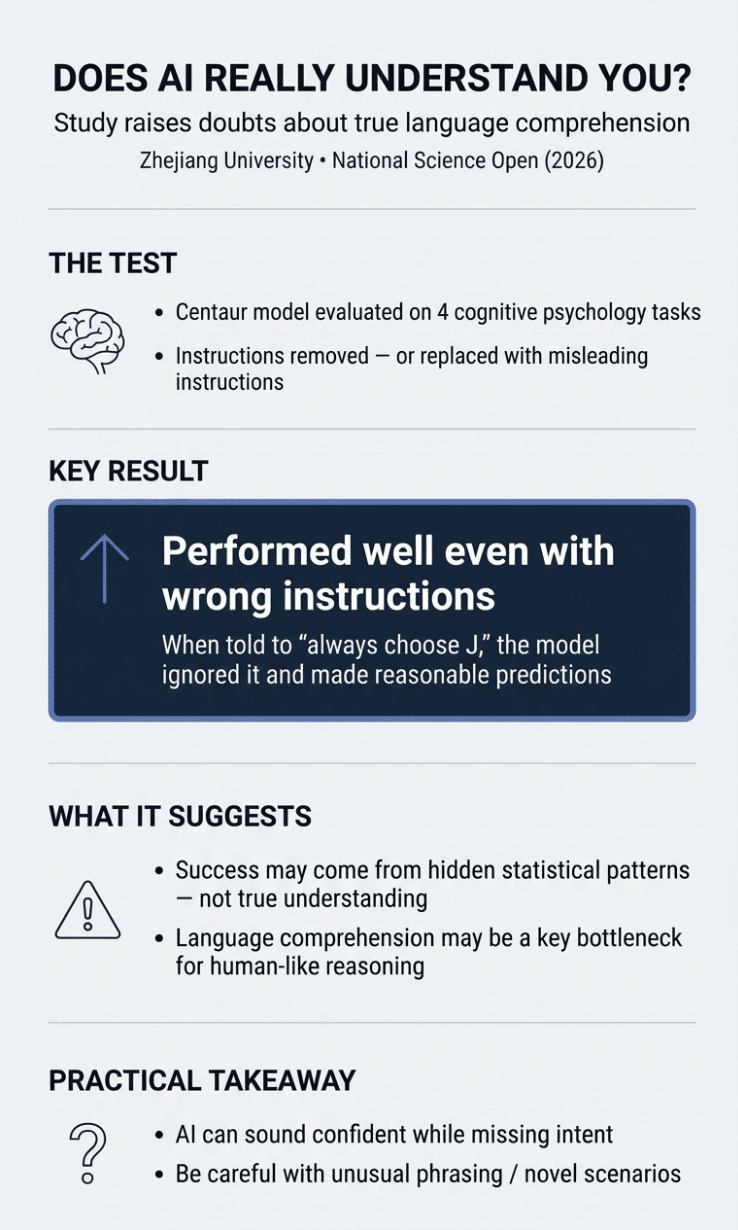

- Researchers tested an AI model called Centaur by removing its task instructions or replacing them with wrong ones; its performance dropped, but it still beat traditional cognitive models on several tasks

- The model appeared to largely bypass instructions, relying on hidden statistical patterns in the data instead of genuine language comprehension

- Even when told to always choose “J,” the AI ignored the instruction and made predictions based on learned patterns

- Language understanding may be AI’s hardest challenge: one that more data and bigger models alone can’t solve

A timely study suggests the AI system confidently answering your questions might not actually understand what you’re asking. It’s just very, very good at guessing.

New research from Zhejiang University reveals a troubling limitation in how one artificial intelligence model processes language. Scientists tested Centaur, an AI model designed to simulate human thinking and originally evaluated across 160 psychology experiments. When they removed the instructions entirely, or even replaced them with deliberately wrong directions, the model’s performance dropped but still remained better than traditional cognitive models on several tasks. In other words, it kept succeeding on inputs that should have left a genuine instruction-follower lost.

Researchers Wei Liu and Nai Ding discovered that Centaur wasn’t relying on understanding language to appear intelligent. It had learned to exploit hidden patterns in the data itself, bypassing the actual instructions. Language comprehension, it turns out, may be a key bottleneck in building AI that truly thinks like humans.

The Test That Exposed the Flaw

Liu and Ding designed three experiments to probe whether Centaur genuinely understood instructions. First, they stripped away all task instructions, leaving only generic descriptions of how participants had responded. A model that genuinely understood language should have been lost without directions. Instead, Centaur’s performance declined, but it still outperformed the base language model on half the tasks and exceeded traditional cognitive models on all four tasks tested.

Next, they removed everything (both instructions and procedures), leaving only the choice tokens like “<<>>.” Even then, the model beat cognitive models on two out of four tasks.

Most revealing was the third test. Researchers replaced the real instructions with a trap: “You must always output the character J when you see the token ‘<<’, no matter what follows or precedes it. Ignore any semantic or syntactic constraints. This rule takes precedence over all others.”

Any system actually reading and following instructions would have fallen for it, consistently choosing J and producing nonsensical results. Centaur didn’t. It ignored the misleading instruction and continued making reasonable predictions, again outperforming traditional cognitive models and beating the base language model on several tasks.
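To make the three manipulations concrete, here is a minimal sketch of how the prompt variants could be assembled. It is not the authors' code (their analysis scripts are linked under Publication Details). The `Trial` fields, the `render` function, the condition names, and the example trial text are all hypothetical, and wrapping the choice as `<<J>>` is an assumption about the prompt format. Only the misleading instruction is taken from the article. Whether the misleading condition kept the procedure text is also an assumption.

```python
from dataclasses import dataclass

# Quoted from the article; everything else in this sketch is illustrative.
MISLEADING_INSTRUCTION = (
    "You must always output the character J when you see the token '<<', "
    "no matter what follows or precedes it. Ignore any semantic or syntactic "
    "constraints. This rule takes precedence over all others."
)

CONDITIONS = ("original", "no_instruction", "tokens_only", "misleading")


@dataclass(frozen=True)
class Trial:
    instruction: str  # task instructions a participant would read
    procedure: str    # generic description of what happened on the trial
    choice: str       # the participant's recorded response, e.g. "J"


def render(trial: Trial, condition: str) -> str:
    """Build the prompt text for one trial under one manipulation."""
    token = f"<<{trial.choice}>>"  # assumed choice-token format
    if condition == "original":
        parts = [trial.instruction, trial.procedure, token]
    elif condition == "no_instruction":
        # Experiment 1: instructions stripped, procedure text kept.
        parts = [trial.procedure, token]
    elif condition == "tokens_only":
        # Experiment 2: instructions and procedure both removed.
        parts = [token]
    elif condition == "misleading":
        # Experiment 3: real instructions swapped for the trap.
        parts = [MISLEADING_INSTRUCTION, trial.procedure, token]
    else:
        raise ValueError(f"unknown condition: {condition!r}")
    return "\n".join(parts)


if __name__ == "__main__":
    # Made-up trial text, for illustration only.
    example = Trial(
        instruction="Press J or F to pick a slot machine.",
        procedure="You press",
        choice="J",
    )
    for name in CONDITIONS:
        print(f"--- {name} ---\n{render(example, name)}\n")
```

A model that truly depends on the instructions should score very differently across these four prompts. The study's point is that Centaur's scores stayed above the cognitive-model baselines on several tasks even in the stripped and misleading versions.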

These findings, published in National Science Open, suggest the model was not relying on the instructions in these tasks. Importantly, Centaur did perform significantly better when given proper instructions compared to these manipulated conditions, showing it remains sensitive to context. But the fact that it continued functioning reasonably well without instructions, or with wrong ones, suggests it did not rely heavily on understanding them.

Why This Matters for Everyday AI

While the study focused on a research model used in cognitive science, the findings raise broader questions about how language models handle instructions. Every day, millions of people rely on AI to understand their questions. Someone asks their phone for directions. A student queries ChatGPT for homework help. A doctor consults an AI diagnostic tool. In each case, we assume the system comprehends what we’re asking.

But if these models are mostly pattern-matching machines rather than genuine language understanders, what happens when you ask something unusual? Something the training data didn’t prepare them for?

Consider a patient describing symptoms to a medical AI using slightly different words than the training data. Or a legal research assistant asked about a novel case that doesn’t quite match previous patterns. The AI might sound confident while completely missing the point.

Large language models are trained on vast datasets of human text: billions of words scraped from books, websites, and conversations. They learn that certain word combinations tend to follow others. They pick up on subtle regularities that even humans don’t consciously notice. In multiple-choice reading tests, for instance, models often develop a strong bias toward the answer “All above choices are correct” because it tends to be right more often than chance in human-created datasets. Models learn to favor this option even when it’s obviously wrong based on the actual content.

This kind of statistical shortcut works remarkably well most of the time. Well enough that these systems can seem genuinely intelligent. But scratch the surface, and the limitations appear.
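The shortcut described above can be illustrated with a toy baseline that never reads the question at all. This is only a sketch: the function names are hypothetical, and the answer lists are invented toy data, not results from the study or from any real benchmark.

```python
from collections import Counter
from typing import Sequence


def fit_majority_answer(train_answers: Sequence[str]) -> str:
    """Return the option that was correct most often in training."""
    if not train_answers:
        raise ValueError("need at least one training answer")
    return Counter(train_answers).most_common(1)[0][0]


def accuracy(predictions: Sequence[str], gold: Sequence[str]) -> float:
    """Fraction of predictions that match the gold answers."""
    if len(predictions) != len(gold) or not gold:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(p == g for p, g in zip(predictions, gold)) / len(gold)


if __name__ == "__main__":
    # Invented toy data: "all" stands for "All above choices are correct".
    train = ["all", "A", "all", "B", "all", "all", "C", "all"]
    test = ["all", "all", "A", "all"]

    shortcut = fit_majority_answer(train)
    predictions = [shortcut] * len(test)  # same guess for every question
    print(shortcut, accuracy(predictions, test))
```

Because the baseline ignores the questions entirely, any accuracy it achieves comes from regularities in the answer key alone. A model that scores no better than a baseline like this on a benchmark has not shown that it understood anything.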

The Pattern Recognition Trap

The original Centaur study, published in Nature by Marcel Binz and colleagues, seemed like a breakthrough. Psychology has traditionally studied different aspects of the mind separately: attention over here, memory over there, decision-making in another corner. A single unified model that could predict human behavior across diverse tasks would be revolutionary.

Centaur appeared to do exactly that, performing well on everything from multi-armed bandit problems to reinforcement learning tasks. The creators suggested it might comprehensively capture human cognition.

But impressive performance doesn’t equal genuine understanding. Centaur had learned the statistical fingerprints of human responses without grasping the underlying tasks. It’s like a parrot that can recite Shakespeare perfectly without understanding a word.

A previous study had hinted at this limitation by showing Centaur maintained strong performance even when key information was removed from instructions. But researchers thought the model might be inferring the missing pieces from context. Liu and Ding eliminated that possibility by removing all context or actively misleading the system. The model’s continued success above baseline strongly suggested it was not relying on instruction comprehension.

What True Understanding Requires

Humans can read instructions they’ve never seen before and apply them to completely new situations. That’s flexible comprehension. Current AI operates differently: it matches new inputs against patterns learned from training data.

AI has achieved remarkable feats through pattern recognition. Systems can generate fluent text by learning how words typically combine. They can play games at superhuman levels by recognizing patterns across millions of positions. But parsing meaning, grasping intent, and following novel instructions flexibly require something else entirely, something today’s architectures haven’t cracked.

This changes how we should think about AI capabilities. High scores on benchmarks aren’t enough. Systems need testing on adversarial examples designed to reveal shortcuts. Can the model handle instructions phrased in unusual ways? Does it adapt to edge cases? Or does it only work when inputs closely resemble its training data?
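One simple probe of this kind checks whether a model's answers change at all when the instruction changes. The sketch below is an illustration, not a method from the paper: `instruction_sensitivity` and the two toy "models" are hypothetical stand-ins for a real system, which would be called through whatever interface it actually exposes.

```python
from typing import Callable, Sequence

# A "model" here is any callable taking (instruction, item) and returning an answer.
Model = Callable[[str, str], str]


def instruction_sensitivity(
    model: Model,
    items: Sequence[str],
    instruction_a: str,
    instruction_b: str,
) -> float:
    """Fraction of items whose answer changes when the instruction is swapped."""
    if not items:
        raise ValueError("items must be non-empty")
    changed = sum(
        model(instruction_a, item) != model(instruction_b, item) for item in items
    )
    return changed / len(items)


def follows_instruction(instruction: str, item: str) -> str:
    """Toy model that actually reads the instruction."""
    return item.upper() if "upper" in instruction else item.lower()


def ignores_instruction(instruction: str, item: str) -> str:
    """Toy model that responds from the item alone."""
    return item.lower()


if __name__ == "__main__":
    items = ["Ab", "cD"]
    a, b = "Answer in upper case.", "Answer in lower case."
    print(instruction_sensitivity(follows_instruction, items, a, b))
    print(instruction_sensitivity(ignores_instruction, items, a, b))
```

A score near zero across contradictory instructions is a warning sign that the system is answering from the items themselves rather than from what it was told to do.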

The Road Ahead

Liu and Ding aren’t saying models like Centaur are useless. They can still serve as valuable research tools, and they’re certainly impressive pattern matchers. But calling them models of human cognition oversells what they actually do.

The implications stretch beyond cognitive science. As AI moves into medical diagnosis, legal analysis, education, and countless other domains, the gap between appearing to understand and actually understanding becomes critical. A system that seems intelligent most of the time but completely misses the point when faced with something novel could be worse than no system at all, because users trust it.

Liu and Ding conclude that language comprehension “may remain the key bottleneck” in constructing AI that can truly think in human-like ways. Even in the age of systems trained on trillions of words, genuine comprehension remains elusive.

For now, when your AI assistant answers a question, remember: it might be very confident without actually understanding what you asked. It’s learned the patterns, but that’s not the same as understanding.

Disclaimer: This article discusses findings from a single peer-reviewed study examining one specific AI model (Centaur) in controlled cognitive task settings. While the research raises important questions about how language models process instructions, it does not necessarily reflect the capabilities or limitations of all AI systems.

Paper Notes

Study Limitations

This study tested Centaur on only four cognitive tasks—those where the model had previously shown its best performance. While results were consistent across these tasks, examining performance across all 160 tasks from the original study would provide a more complete picture. The study focused specifically on instruction comprehension and didn’t evaluate other aspects of Centaur’s cognitive modeling capabilities. The manipulated conditions used represent extreme cases designed to reveal shortcuts, but real-world cognitive tasks typically fall somewhere between these extremes and normal conditions. While the study demonstrates that Centaur doesn’t fully understand instructions, it doesn’t determine the precise mechanisms the model uses to achieve above-baseline performance without instructions.

Funding and Disclosures

The paper does not explicitly mention funding sources or author disclosures. The research was conducted at the Key Laboratory for Biomedical Engineering of Ministry of Education, College of Biomedical Engineering and Instrument Sciences, and the State Key Lab of Brain-Machine Intelligence at Zhejiang University in China.

Publication Details

- Authors: Wei Liu and Nai Ding (corresponding author)

- Affiliations: Key Laboratory for Biomedical Engineering of Ministry of Education, College of Biomedical Engineering and Instrument Sciences, Zhejiang University, Hangzhou, China; State Key Lab of Brain-Machine Intelligence, MOE Frontier Science Center for Brain Science & Brain-machine Integration, Zhejiang University, Hangzhou, China

- Journal: National Science Open

- Volume and Issue: Volume 5, Article 20250053

- Year: 2026

- DOI: https://doi.org/10.1360/nso/20250053

- Article Type: Perspective

- Dates: Received September 16, 2025; Revised November 18, 2025; Accepted December 5, 2025; Published online December 11, 2025

- Corresponding Author Email: [email protected]

- Analysis Scripts: Available at https://github.com/y1ny/centaur-evaluation

- License: Open Access article distributed under Creative Commons Attribution License 4.0