3D-rendering of quantum entanglement. (Image by Vink Fan on Shutterstock)

Quantum Entanglement Gets Buried in Noise. This Device Can Recover It.

In A Nutshell

- Researchers built a device that recovers entangled quantum states after noise has already degraded them, using standard fiber-optic telecom hardware.

- In tests, a key measure of quantum state quality called fidelity was restored from 0.62 to 0.86, approaching noiseless performance of above 0.9.

- A signal quality metric called the coincidence-to-accidental ratio improved roughly tenfold, and quantum entanglement visibility improved by up to 49.3% under noisy conditions.

- The technique requires no specialized lab infrastructure and could potentially be integrated into existing fiber-optic networks, moving quantum communication closer to real-world deployment.

Every major quantum technology, from unhackable communications to computers that could outrun anything built today, runs on quantum entanglement. It’s also absurdly fragile. Too much background noise, and the entangled quantum states these technologies depend on collapse completely, leaving nothing useful behind. Now a team of researchers has built a device that reverses that process, reviving entangled states buried in noise, using standard hardware from the telecommunications industry.

A study published in Science Advances by researchers at the Institut National de la Recherche Scientifique (INRS) in Canada and several collaborating institutions describes a technique called quantum coherent energy redistribution. Rather than trying to protect quantum signals from noise before it strikes, the technique recovers quantum properties after corruption has already occurred. Fidelity measures how closely a quantum state matches its ideal, uncorrupted form, and in tests, the researchers restored it from 0.62 back up to 0.86, approaching the noiseless performance of above 0.9. In other words, a quantum state that had been largely degraded by noise recovered much of its original quality.

All of it ran on commercially available fiber-optic hardware operating at the same infrared wavelengths used by ordinary internet infrastructure, without requiring the ultra-clean conditions typical of many lab setups. A technology that has historically required near-perfect environments may be inching toward the real world.

Why Quantum Entanglement Keeps Getting Killed

Quantum entanglement links two particles so that measuring one instantly tells you something about the other, regardless of the distance between them. That link is the foundation of quantum cryptography and quantum computing, and it shatters easily.

Regular digital signals can be boosted when they weaken, which is what repeaters do in phone and internet networks. Quantum signals can’t be copied or amplified without destroying the information they carry. That’s a basic rule of quantum physics, known as the no-cloning theorem, not an engineering problem to be solved. So when noise creeps in, the only option is to remove it. Current methods rely on narrow optical filters that block unwanted photons, but those filters have a hard limit: when a quantum signal occupies the same narrow frequency range as the noise, the filter can’t tell them apart. Other approaches require advance knowledge of exactly when the photons will arrive and at what frequency, details that often aren’t available outside a controlled lab.

Meanwhile, the environments where quantum networks actually need to operate are hostile by nature. Real fiber-optic cables carry enormous volumes of internet traffic that generates its own interference. Satellite quantum links are largely restricted to nighttime to avoid sunlight. None of this is compatible with the clean, quiet conditions quantum signals currently require.


Resurrecting Quantum Entanglement With Off-the-Shelf Hardware

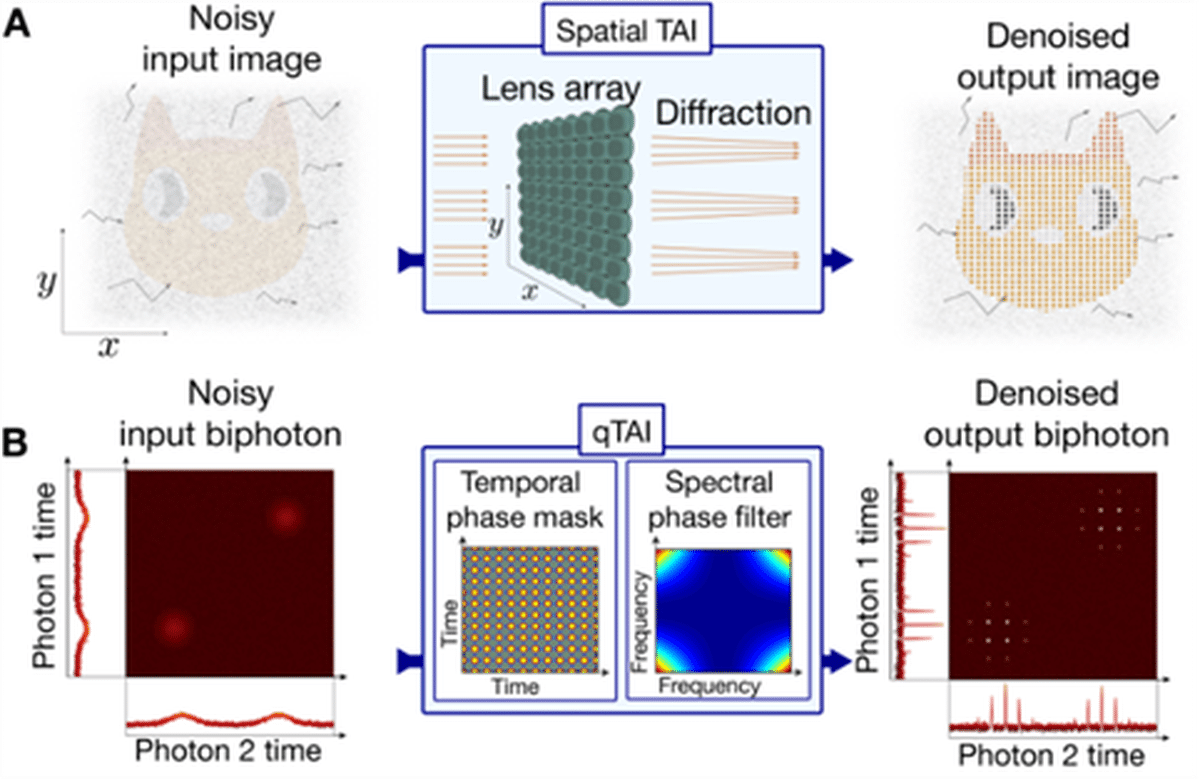

Researchers solved the problem with an approach borrowed from classical optics. When a lens array focuses light, coherent rays, the ones carrying real signal, converge into tight, bright spots, while scattered background light stays diffuse. Reading only the bright spots captures the signal cleanly, with the noise left behind.

Translating that principle into the time domain, the team built a device they call a quantum Talbot array illuminator, or qTAI. Two standard fiber-optic components give entangled photons a precise timing signature that causes the real signal to bunch into brief, sharp pulses. Background noise, which lacks that signature, stays spread out. By reading only what arrives during those pulses, the system recovers the quantum signal while discarding most of the noise, without directly measuring or copying the quantum state itself.
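As a rough illustration of why reading only the pulse windows helps, the toy simulation below bunches signal detections into a narrow burst, spreads noise detections evenly over the period, and counts what lands inside a gate. It is a sketch under stated assumptions, not the authors' model: the function name `gated_counts` and every parameter value (period, pulse width, gate width, photon counts) are hypothetical and chosen only for illustration.

```python
import random

def gated_counts(n_signal, n_noise, period, pulse_sigma, gate_width, seed=0):
    """Count signal and noise detections inside a gate centred on the pulse.

    Toy model (assumption): signal arrival times are Gaussian around t = 0,
    noise arrival times are uniform over one period.
    """
    rng = random.Random(seed)
    half_gate = gate_width / 2
    # Signal carries the timing signature, so it clusters near the pulse centre.
    signal_in = sum(
        1 for _ in range(n_signal)
        if abs(rng.gauss(0.0, pulse_sigma)) <= half_gate
    )
    # Noise lacks the signature, so it stays spread over the whole period.
    noise_in = sum(
        1 for _ in range(n_noise)
        if abs(rng.uniform(-period / 2, period / 2)) <= half_gate
    )
    return signal_in, noise_in

# Hypothetical numbers: equal signal and noise counts before gating.
n = 20_000
signal_in, noise_in = gated_counts(
    n, n, period=1.0, pulse_sigma=0.02, gate_width=0.1
)
snr_before = n / n
snr_after = signal_in / noise_in

print(f"signal kept: {signal_in / n:.1%}, noise kept: {noise_in / n:.1%}")
print(f"signal-to-noise gain from gating: {snr_after / snr_before:.1f}x")
```

With these made-up numbers the gate keeps nearly all of the signal but only about a tenth of the noise, because uniform noise is admitted in proportion to the gate's share of the period.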

Because all wavelength channels are processed simultaneously, no precise tuning of filters to each channel is required, a practical advantage that narrowband filtering approaches can’t match in real deployments. The system also does not require precise timing information about when photons will arrive or how long the quantum state lasts.

This principle can be applied in time as well. Just as we process a spatial image with optical elements, we can use a temporal equivalent of this imaging system to reorganize photon correlations over time. The correlations are redistributed into a series of distinct temporal points, making them much easier to analyze despite noise. (Credit: INRS)

Measuring the Recovery of Quantum Entanglement

Across multiple noise levels and test conditions, the performance gains were large. One standard quality measure, the coincidence-to-accidental ratio or CAR, compares genuine correlated photon detections against random noise hits. In a moderately noisy scenario, the CAR before processing sat at 2.2. After the qTAI, it reached 21.3, a roughly tenfold jump.
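The "roughly tenfold" figure is simple arithmetic on the two reported CAR values, which a few lines of Python can check. The helper `car` below uses the plain ratio of coincidence counts to accidental counts, which is one common convention and an assumption here, and the raw counts passed to it are hypothetical values picked only to reproduce the reported ratios.

```python
def car(coincidences, accidentals):
    """Coincidence-to-accidental ratio as a plain ratio of counts (assumed convention)."""
    if accidentals <= 0:
        raise ValueError("accidental count must be positive")
    return coincidences / accidentals

# Hypothetical raw counts that reproduce the reported ratios.
car_before = car(22, 10)    # 2.2 before processing
car_after = car(213, 10)    # 21.3 after the qTAI

improvement = car_after / car_before
print(f"CAR improvement: {improvement:.1f}x")  # CAR improvement: 9.7x
```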

For quantum entanglement to be formally verified, a measurement called quantum interference visibility must clear roughly 71%, the threshold for violating a Bell inequality, the foundational test that separates quantum correlations from classical ones. Under rising noise, the untreated quantum state’s visibility fell rapidly below that line. After qTAI processing, entanglement held even at noise levels that had previously suppressed detectable entanglement entirely, with visibility improving by up to 49.3% in some conditions. Fidelity, the headline result, recovered from 0.62 to 0.86, within reach of the noiseless performance of above 0.9.
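The 71% figure matches the familiar 1/√2 threshold for the CHSH form of the Bell test. Assuming that is the test meant here, and assuming the textbook relation S = 2√2·V between the CHSH parameter S and the fringe visibility V, the threshold can be worked out as below. The function names are illustrative, and the visibility values fed in are examples, not measurements from the study.

```python
import math

CHSH_CLASSICAL_BOUND = 2.0  # no classical (local hidden-variable) model exceeds this

def chsh_from_visibility(visibility):
    """CHSH parameter S for a given fringe visibility, assuming S = 2*sqrt(2)*V."""
    return 2 * math.sqrt(2) * visibility

def violates_chsh(visibility):
    """True when the visibility is high enough to beat the classical bound."""
    return chsh_from_visibility(visibility) > CHSH_CLASSICAL_BOUND

# Visibility at which S equals the classical bound exactly.
threshold = CHSH_CLASSICAL_BOUND / (2 * math.sqrt(2))
print(f"threshold visibility: {threshold:.3f}")  # threshold visibility: 0.707

for v in (0.60, 0.70, 0.75, 0.90):  # example values only
    print(f"V = {v:.2f} -> S = {chsh_from_visibility(v):.3f}, violates: {violates_chsh(v)}")
```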

As a further test, the team demonstrated a simplified quantum key distribution link, the type of communication where eavesdropping is physically detectable by design. Where the unprocessed signal failed under noise, the qTAI kept the link running.

Practical limits remain. The technique works best when noise occupies a broader frequency range than the quantum signal itself. Noise that closely mimics the signal’s frequency profile can’t be separated this way. Current hardware also introduces some signal loss, though the researchers calculate that optimized off-the-shelf components could cut it significantly.

Quantum networks have promised a great deal for a long time while remaining stubbornly lab-bound. A device that recovers entangled states from noise using hardware already wired into the world’s telecommunications infrastructure makes that promise a little harder to dismiss.

Paper Notes

Limitations

Several constraints apply to the qTAI system as demonstrated. Current performance is limited in part by the timing jitter of the superconducting nanowire single-photon detectors (SNSPDs) used, which broadens output pulses and degrades the effective noise mitigation bandwidth from the theoretical optimum of 403.3 MHz to approximately 1.16 GHz, making the filtering less selective than it could be. The technique works best when background noise has a broader frequency spread than the quantum signal; noise sharing the same time-frequency characteristics as the signal, such as multiphoton events generated from the same pump pulse in the same resonance, undergoes an identical focusing process and cannot be separated out. The current hardware introduces roughly 5.3 dB of insertion loss, which the authors note could be reduced to approximately 2 dB with optimized components such as lower-loss electro-optic modulators and fiber Bragg gratings.
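To put the insertion-loss figures in more intuitive terms, decibels can be converted to the fraction of photons that survive a single pass through the device. The sketch below uses the standard relation T = 10^(−loss/10). The function name is illustrative, and the calculation looks at one photon's path only, so it says nothing about pair rates, which depend on details not given here.

```python
def db_loss_to_transmission(loss_db):
    """Fraction of optical power (or photons) transmitted for a given loss in dB."""
    return 10 ** (-loss_db / 10)

current = db_loss_to_transmission(5.3)    # loss reported for the demonstrated hardware
optimized = db_loss_to_transmission(2.0)  # loss the authors estimate with better components

print(f"current:   {current:.1%} of photons transmitted")
print(f"optimized: {optimized:.1%} of photons transmitted")
print(f"gain:      {optimized / current:.2f}x more photons per pass")
```

On these figures, roughly 30% of photons get through today, against roughly 63% with the optimized components.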

Funding and Disclosures

Funding was provided by the Natural Sciences and Engineering Research Council of Canada (NSERC), through grants including AQUA ALLRP 587602-23, QuEnSi ALLRP 578468-22, Consortium on Integrated Quantum Photonics with Ferroelectric Materials ALLRP 587352-23, HyperSpace ALLRP 569583-21, and Equipps ALLRP 592587-23, as well as by the Fonds de recherche du Québec (FRQNT) under project AdéQuATS FRQNT 328872. Lead author Benjamin Crockett received an NSERC Postgraduate Scholarship-Doctoral. Competing interests are disclosed: Crockett and co-author José Azaña are named inventors on a related U.S. patent application (no. US11271660B2) held by INRS. Co-authors Piotr Roztocki and Robin Helsten are involved in developing entangled photon source and fiber interferometer products at Ki3 Photonics Technologies. All other authors declare no competing interests.

Publication Details

The study, “Quantum state revival via coherent energy redistribution,” was authored by Benjamin Crockett, Nicola Montaut, James van Howe, Piotr Roztocki, Yang Liu, Robin Helsten, Wei Zhao, Roberto Morandotti, and José Azaña, representing institutions including INRS-EMT (Canada), the University of British Columbia, Augustana College, Ki3 Photonics Technologies, and the Chinese Academy of Sciences. It was published on January 30, 2026, in Science Advances, Volume 12, article eady8981. DOI: 10.1126/sciadv.ady8981.