After more than a decade of research, scientists evaluate implanted brain-decoding technology that helps people hear one voice among many. (Credit: Matteo Farinella/Columbia's Zuckerman Institute)

New Brain-Reading Tech Could Solve the Biggest Problem With Hearing Aids

In A Nutshell

- Researchers built a system that reads brain signals in real time to identify which voice a listener is focused on, then automatically turns it up.

- In a small early lab study, four participants found the target voice easier to understand, needed less mental effort to follow it, and preferred the brain-controlled audio over unaided listening.

- The system also tracked attention switches, whether instructed or self-initiated, adjusting the volume within seconds.

- A separate group of 40 people with hearing loss showed even greater improvements in speech understanding when exposed to the brain-shaped audio, suggesting the technology could matter most to those who need it most.

A crowded dinner party is a minefield for anyone with hearing loss. Voices stack on top of each other, background noise fills every gap, and conventional hearing aids make things worse by turning up everything at once. Now, in a small early study, researchers have built a system that reads brain signals in real time to figure out which voice a listener is focused on, then automatically amplifies that voice. In the lab, it worked.

The system was tested on four patients already undergoing brain-monitoring procedures for epilepsy treatment. It decoded listeners’ attention from electrical signals recorded directly from the surface of the brain. When the system switched on, people found the target voice significantly easier to understand, reported needing less mental effort to follow along, and overwhelmingly said they preferred listening with the system active. The research was published in Nature Neuroscience.

Researchers also played the brain-adjusted audio to a separate group of 40 people with hearing loss, none of whom were part of the brain-monitoring experiment, and those listeners showed even greater improvements in understanding speech than the original participants did. The biggest gains appeared among listeners with more room to improve, which is exactly the group future versions of the technology would hope to help.

Brain-Controlled Hearing Works by Reading Attention in Real Time

When a person pays attention to a specific voice, that focus leaves a detectable imprint in brain activity. The brain essentially echoes the rhythm and pattern of the sound it’s tracking. Researchers have known for about a decade that this echo exists, but no one had conclusively shown that detecting it in real time could actually help someone hear better.

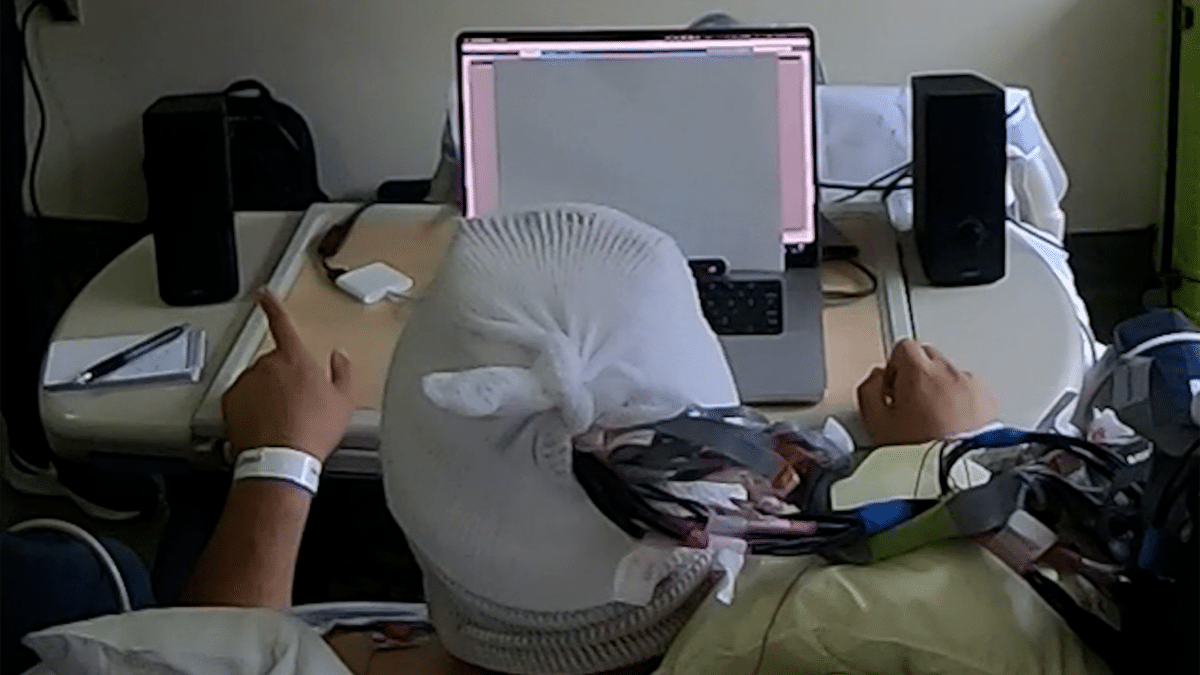

To test whether it could, the researchers recruited four patients who already had electrodes placed directly on the surface of their brains as part of standard medical care for epilepsy. Those electrodes record brain signals with exceptional clarity, far cleaner than scalp sensors, giving researchers the signal quality needed to decode attention on the fly. Participants listened to two overlapping conversations playing simultaneously against a backdrop of crowd noise or multi-talker babble, and pressed a button when they heard a repeated word in the conversation they were tracking.

(Credit: Vishal Choudhari / Mesgarani lab / Columbia’s Zuckerman Institute)

A Personalized Brain Decoder Drove the Volume Adjustments

Before the real-time test began, each participant completed a training phase. The system learned to reconstruct the rhythmic rise-and-fall pattern of the attended voice from that person’s brain signals, drawing on both slow electrical waves and faster, higher-frequency fluctuations. Once trained, it continuously compared its reconstruction against the two competing audio streams and amplified whichever conversation’s pattern most closely matched what the brain was tracking. Volume adjustments happened gradually through a five-step process to avoid jarring shifts. For all four participants, the system decoded attention well above chance, with average accuracy ranging from roughly 72% to just over 90%.
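For readers who want to see the idea in concrete terms, the compare-and-amplify loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation. It assumes Pearson correlation as the measure of "closest match," a gain ladder that moves one step per decoding window, and a 12-decibel ceiling borrowed from the average boost reported in the study. All function names and parameters are hypothetical.

```python
import numpy as np


def pearson(a, b):
    """Pearson correlation between two equal-length 1-D signals."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0


def decode_attended(reconstructed, envelope_a, envelope_b):
    """Return 0 if the brain-derived envelope matches talker A best, else 1.

    Assumption: similarity is measured with Pearson correlation.
    """
    score_a = pearson(reconstructed, envelope_a)
    score_b = pearson(reconstructed, envelope_b)
    return 0 if score_a >= score_b else 1


def update_step(step, attended, n_steps=5):
    """Move one rung toward the attended talker on a gain ladder.

    step ranges from -n_steps (fully favor talker B) to +n_steps
    (fully favor talker A). One rung per decoding window is an assumption.
    """
    direction = 1 if attended == 0 else -1
    return max(-n_steps, min(n_steps, step + direction))


def relative_gain_db(step, max_gain_db=12.0, n_steps=5):
    """Gain of talker A relative to talker B, in decibels (assumed ceiling)."""
    return step * max_gain_db / n_steps


# Toy demonstration with synthetic envelopes standing in for real data.
rng = np.random.default_rng(0)
envelope_a = rng.random(500)
envelope_b = rng.random(500)
# Pretend the decoder reconstructed a noisy copy of talker A's envelope.
reconstructed = envelope_a + 0.5 * rng.standard_normal(500)

step = 0
for _ in range(5):
    attended = decode_attended(reconstructed, envelope_a, envelope_b)
    step = update_step(step, attended)
    print(f"attended talker: {attended}, relative gain: {relative_gain_db(step):.1f} dB")
```

Stepping the gain rather than jumping straight to the maximum mirrors the gradual adjustment the article describes, and it also means a single wrong decoding decision only nudges the volume instead of flipping it.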

Brain-Guided Amplification Cut Listening Effort and Boosted Comprehension

In the first experiment, participants first listened with the system off, with the target voice set six decibels quieter than the competing one. When the system turned on, the brain-controlled amplification delivered an average boost of 12 decibels. Every participant preferred listening with the system active, with preference rates ranging from 75% to 95%. Two of the four participants also had their pupil size measured, a well-established indirect indicator of mental strain, and both showed significantly smaller pupils when the system was on, suggesting their brains were working less hard to follow the conversation.
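Decibels are a logarithmic unit, so the figures above are larger than they may look. The short sketch below uses the standard conversion formulas to turn a decibel change into an amplitude ratio and a power ratio. The function names are illustrative, and the example values are simply the six-decibel and 12-decibel figures quoted in this article.

```python
def db_to_amplitude_ratio(db):
    """Convert a level change in decibels to a sound-pressure amplitude ratio."""
    return 10 ** (db / 20)


def db_to_power_ratio(db):
    """Convert a level change in decibels to a sound-power ratio."""
    return 10 ** (db / 10)


for label, db in [("target set 6 dB quieter", -6.0), ("average boost of 12 dB", 12.0)]:
    amp = db_to_amplitude_ratio(db)
    power = db_to_power_ratio(db)
    print(f"{label}: amplitude x{amp:.2f}, power x{power:.2f}")
```

In other words, a voice six decibels quieter has about half the amplitude of its competitor, while a 12-decibel boost roughly quadruples amplitude.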

A second experiment tested whether the system could keep up when a listener deliberately switched attention mid-conversation. After a visual cue told participants to shift focus, the system tracked the switch in an average of 5.1 seconds. A third experiment let participants switch freely with no external cue, and the system followed those self-driven shifts as well. Researchers also ran a control condition in which they flipped the system’s behavior, amplifying the wrong voice without telling participants. Listeners immediately noticed something was wrong, confirming the benefit was genuinely tied to correct brain decoding.

Hearing Loss Patients Showed the Largest Gains from Brain-Shaped Audio

Perhaps the most telling result came from the 40 people with hearing loss who took part in a separate validation study. These listeners were not using a brain-controlled device themselves. They were judging audio already adjusted in real time using the brain signals of the original participants. Compared to normal-hearing listeners, people with hearing loss showed a significantly larger gain in speech understanding when the system was active, and they strongly preferred the brain-adjusted audio.

The authors are candid about the distance between this proof of concept and a practical consumer device. Electrodes placed directly on the brain’s surface are not an option for most people, and the small sample, lab conditions, and 5.1-second switching delay all represent real gaps. As the paper notes, disabling hearing loss affects more than 430 million people worldwide, making the potential reach enormous if less invasive sensing can be made to work just as well.

For decades, the core idea behind brain-guided hearing has been theoretically appealing but practically unproven. This study narrows that gap, showing for the first time that decoding what a person’s brain is listening to can translate into a measurably better experience.

Paper Notes

Limitations

The researchers acknowledge several important constraints. The study involved only four participants, all already undergoing clinical brain-monitoring procedures for epilepsy, a highly specific group that may not represent the broader population. The intracranial electrodes provided exceptional signal quality but are invasive and unsuitable for consumer use. The authors explicitly describe this setup as a performance benchmark rather than a deployable solution.

The fixed structure of the first experiment, in which the system was always off first and then turned on, creates a potential confound: some improvement in the second segment could reflect listeners simply adapting to the task over time rather than the brain-driven amplification alone. The researchers excluded the first 10 seconds of each trial to account for the known warm-up period of auditory attention, and they argue the magnitude of improvement points to the system as the primary driver, but they acknowledge the limitation.

The study also used separated audio sources rather than the fully mixed audio present in real-world environments. A control analysis suggested performance was largely preserved when algorithmically separated sources were used instead, but the authors note that real-world conditions, including reverberant spaces and scenes with many simultaneous talkers, present additional challenges not fully addressed here. The average attention-switching time of 5.1 seconds reflects specific design choices built into the current system and is not a fundamental ceiling on what the technology could achieve.

Funding and Disclosures

The study was supported by NIH-NIDCD grants R01DC014279 and DC018805, with additional support from the Marie-Josée and Henry R. Kravis Foundation. The authors declare no competing interests.

Publication Details

Paper Title: Real-time brain-controlled selective hearing enhances speech perception in multi-talker environments | Authors: Vishal Choudhari, Maximilian Nentwich, Sarah Johnson, Jose L. Herrero, Stephan Bickel, Ashesh D. Mehta, Daniel Friedman, Adeen Flinker, Edward F. Chang, and Nima Mesgarani | Institutional Affiliations: Columbia University; Mortimer B. Zuckerman Mind Brain Behavior Institute; Hofstra Northwell School of Medicine; The Feinstein Institutes for Medical Research; New York University School of Medicine; University of California San Francisco | Journal: Nature Neuroscience | DOI: https://doi.org/10.1038/s41593-026-02281-5 | Received: October 1, 2025 | Accepted: March 26, 2026