MRI image of the brain (Courtesy of Anna Shvet on pexels.com)

In A Nutshell

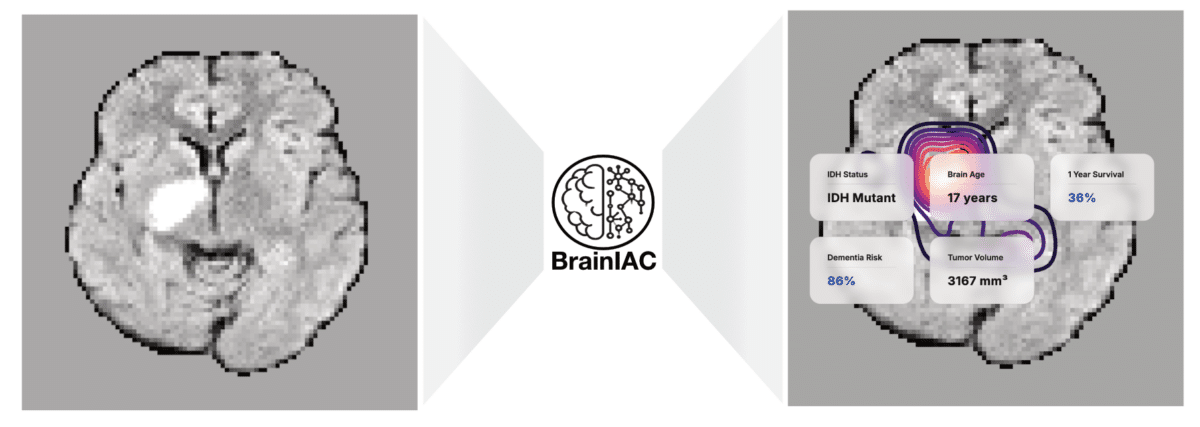

- Harvard researchers developed BrainIAC, a single AI model that can be adapted to analyze brain scans for conditions from Alzheimer’s to cancer, reducing the need to build a separate algorithm from scratch for each disease

- The model learned general brain anatomy from 32,000 unlabeled MRI scans, then adapted to new medical tasks with as few as one to five training examples per category

- BrainIAC performed well across seven different challenges, including predicting cancer survival, detecting genetic mutations, and spotting early dementia, some of which are difficult or impossible for doctors to do from scans alone

- The approach could accelerate AI tool development for rare diseases affecting small patient populations where collecting thousands of labeled examples isn’t feasible

Building an AI system to diagnose brain disease usually means starting from scratch every single time. Want to detect Alzheimer’s? That’s one algorithm. Brain tumors? Build another. Stroke damage? Start over again. Now, researchers at Harvard Medical School have shown there’s a better way.

They trained a single AI model, called BrainIAC, that can be adapted to tasks ranging from spotting early dementia to predicting cancer survival. After first learning general brain anatomy from tens of thousands of MRI scans across many conditions, the same system can be adapted to rare pediatric brain tumors using only a handful of examples.

The approach tackles one of medicine’s biggest AI headaches. Hospitals have millions of brain scans sitting in storage, but most can’t be used to train AI because nobody has the time or money to have doctors label every single image. BrainIAC learned from unlabeled scans first, picking up patterns of brain anatomy on its own.

Teaching AI to Recognize Brain Patterns

Take medical students, for example. They don’t learn radiology by memorizing one disease at a time. They study normal brain anatomy first, then learn to spot when something looks wrong. BrainIAC works the same way.

The model, described in Nature Neuroscience, was pretrained on more than 32,000 brain scans from people with ten different conditions plus healthy volunteers. It learned internal representations that capture brain structure, age-related change, stroke injury patterns, and tumor anatomy, all without being given explicit labels.
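How can a model learn anything without labels? The article doesn’t spell out BrainIAC’s exact training objective, but one widely used family of techniques is contrastive self-supervised learning (as in SimCLR): the model sees two randomly altered copies of the same scan and is trained so their embeddings end up closer to each other than to the embeddings of other scans. The minimal sketch below computes that style of loss (NT-Xent) on tiny hand-made vectors. It illustrates the general technique under that assumption and is not BrainIAC’s confirmed training code; the function names and example vectors are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def nt_xent(view_a, view_b, temperature=0.5):
    """Contrastive (NT-Xent) loss; no labels are used.

    view_a[k] and view_b[k] are embeddings of two augmented copies of scan k.
    The loss is low when each copy is most similar to its own partner and
    dissimilar to the embeddings of every other scan in the batch.
    """
    z = view_a + view_b          # 2N embeddings: first all A views, then all B views
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        partner = (i + n) % (2 * n)  # index of the other view of the same scan
        logits = [cosine(z[i], z[k]) / temperature for k in range(2 * n) if k != i]
        pos_logit = cosine(z[i], z[partner]) / temperature
        # -log(exp(pos) / sum(exp(all others))) written as -pos + log(sum(...))
        total += -pos_logit + math.log(sum(math.exp(x) for x in logits))
    return total / (2 * n)


# Hypothetical embeddings for two scans. In "matched", each B view resembles
# its own A view; in "swapped", the pairs are mixed up.
matched_a = [[1.0, 0.0], [0.0, 1.0]]
matched_b = [[0.9, 0.1], [0.1, 0.9]]
swapped_b = [[0.1, 0.9], [0.9, 0.1]]
print(nt_xent(matched_a, matched_b), nt_xent(matched_a, swapped_b))
```

The matched pairs give the lower loss, which is the signal that pushes a network to map different views of the same anatomy to similar internal representations.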

Once BrainIAC understood general brain anatomy, researchers could adapt it to new tasks with surprisingly little additional training. They tested it on seven completely different medical challenges: identifying scan types, estimating someone’s age from their brain structure, predicting which cancer patients would survive longer, detecting a key genetic mutation in brain tumors, spotting early dementia, estimating how long ago someone had a stroke, and outlining tumor boundaries.

The model handled tasks that are difficult or impossible for doctors to perform from MRI alone. In predicting whether a tumor carried a specific genetic mutation (something that normally requires brain surgery and genetic testing to determine), it reached an AUC (area under the ROC curve) of about 0.79, on a scale where 0.5 is chance and 1.0 is perfect. That’s a strong result for a diagnosis doctors can’t make just by looking at scans.
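AUC has a concrete meaning: it is the probability that the model gives a higher score to a randomly chosen positive case (say, a tumor with the mutation) than to a randomly chosen negative one, with ties counting half. The short sketch below computes AUC exactly that way, by comparing every positive/negative pair. The scores and labels are made-up illustrative values, not data from the study, and the function name is hypothetical.

```python
from itertools import product


def pairwise_auc(scores, labels):
    """ROC AUC as the fraction of (positive, negative) pairs ranked correctly.

    A pair earns 1 if the positive case scores higher, 0.5 on a tie, 0 otherwise.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")
    credit = 0.0
    for p, n in product(positives, negatives):
        if p > n:
            credit += 1.0
        elif p == n:
            credit += 0.5
    return credit / (len(positives) * len(negatives))


# Hypothetical model scores (higher = "more likely mutated") and true labels.
scores = [0.9, 0.8, 0.35, 0.6, 0.2, 0.1]
labels = [1, 1, 1, 0, 0, 0]
print(pairwise_auc(scores, labels))
```

Here 8 of the 9 positive/negative pairs are ranked correctly, so the AUC is about 0.89. A model that scored every case identically would land at exactly 0.5, the coin-flip baseline.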

The team, led by Dr. Benjamin Kann, found that the biggest advantage came in settings where labeled training data is scarce, as with rare diseases. With just 50 training examples, BrainIAC reached an AUC of about 0.68 on mutation prediction, while conventional models trained from scratch barely performed better than random chance.

Learning From Almost Nothing

The research team pushed this to extremes. What if you only had one example of each type of scan? They ran experiments with absurdly small datasets, the kind of limitation researchers face with ultra-rare diseases affecting maybe a few hundred kids worldwide.

With only four training examples in total, one per category, BrainIAC still learned to classify four different scan types better than random guessing. For glioblastoma, one of the deadliest brain cancers, the model was also tested on predicting whether patients would survive past one year. Even with only 10% of the training data, it achieved an AUC of about 0.62, while systems trained from scratch collapsed to barely better than a coin flip.
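To see how a pretrained model can work with a single labeled example per category, consider one simple few-shot method: a nearest-centroid classifier on top of frozen features. You average the embedding vectors of the few labeled examples in each class, then assign a new scan to the class whose average is closest. This is a swapped-in illustration, not necessarily how BrainIAC was adapted. The sketch assumes the embeddings already exist; the four-dimensional vectors are invented, and the scan-type labels are common MRI sequence names used only for illustration.

```python
import math


def centroids(examples):
    """Average the embedding vectors for each label.

    `examples` is a list of (embedding, label) pairs with equal-length float lists.
    """
    sums, counts = {}, {}
    for vec, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(vec)
            counts[label] = 0
        sums[label] = [a + b for a, b in zip(sums[label], vec)]
        counts[label] += 1
    return {label: [v / counts[label] for v in total] for label, total in sums.items()}


def predict(vec, class_centroids):
    """Return the label whose centroid is nearest in Euclidean distance."""
    return min(class_centroids, key=lambda label: math.dist(vec, class_centroids[label]))


# Hypothetical frozen-encoder embeddings: one labeled example per scan type (one-shot).
support = [
    ([0.9, 0.1, 0.0, 0.0], "T1"),
    ([0.1, 0.9, 0.1, 0.0], "T2"),
    ([0.0, 0.1, 0.9, 0.1], "FLAIR"),
    ([0.0, 0.0, 0.1, 0.9], "T1-contrast"),
]
protos = centroids(support)
print(predict([0.2, 0.8, 0.0, 0.1], protos))  # nearest centroid is "T2"
```

The method only works if the pretrained encoder already places similar scans near each other. That is why a good foundation model matters so much when labeled data is this thin.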

This matters for a simple reason. Some rare pediatric brain cancers might affect only 50 kids per year in an entire country. You’re never going to collect 10,000 labeled training examples. BrainIAC’s approach suggests researchers could develop AI tools for diseases that affect small numbers of patients, something that has been very difficult with conventional methods.

The model also appeared to focus on relevant brain regions. When researchers visualized where BrainIAC was looking, they found it focused on the hippocampus for dementia detection (a brain region that shrinks in Alzheimer’s), on white matter regions that change with aging, and on tumor cores for cancer predictions. The attention maps aligned with known neuroanatomy, suggesting the model was learning medically meaningful features rather than arbitrary patterns.

Why This Hasn’t Worked Before

Current medical AI systems often perform much worse when they are taken out of the hospital where they were built. An algorithm developed at Mass General might not work at a hospital in Texas because the MRI scanners differ, the patient population differs, and even the way technicians position patients varies.

Kann’s team tested this by training BrainIAC on scans from multiple institutions, then evaluating it on data from completely different hospitals. They also deliberately corrupted images with common technical problems: contrast shifts, blurriness, and intensity variations. BrainIAC handled these corruptions better than alternative models trained from scratch or with narrower pretraining, especially on difficult prediction tasks where the other models’ performance fell apart.
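To make the robustness test more concrete, here is a minimal sketch of the three kinds of corruption the article names, applied to a tiny 2D image stored as a list of rows with intensities between 0 and 1. The specific operations are assumptions chosen for illustration: a gamma curve for the contrast shift, a 3x3 box blur for blurriness, and a left-to-right brightness ramp (a crude stand-in for a scanner bias field) for intensity variation. The function names and parameter values are hypothetical, not the study’s settings.

```python
def contrast_shift(img, gamma):
    """Apply a gamma curve; gamma > 1 darkens mid-tones, gamma < 1 brightens them."""
    return [[v ** gamma for v in row] for row in img]


def box_blur(img):
    """Replace each pixel with the mean of its 3x3 neighborhood (edges use available neighbors)."""
    h, w = len(img), len(img[0])
    out = []
    for i in range(h):
        row = []
        for j in range(w):
            vals = [img[y][x]
                    for y in range(max(0, i - 1), min(h, i + 2))
                    for x in range(max(0, j - 1), min(w, j + 2))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out


def intensity_ramp(img, strength):
    """Scale intensities from (1 - strength) at the left edge to (1 + strength) at the right."""
    w = len(img[0])
    return [[v * (1 - strength + 2 * strength * j / max(1, w - 1)) for j, v in enumerate(row)]
            for row in img]


# Tiny hypothetical "slice": a bright square on a dark background.
slice_ = [[0.0, 0.0, 0.0, 0.0],
          [0.0, 1.0, 1.0, 0.0],
          [0.0, 1.0, 1.0, 0.0],
          [0.0, 0.0, 0.0, 0.0]]
corrupted = intensity_ramp(box_blur(contrast_shift(slice_, gamma=1.5)), strength=0.2)
for row in corrupted:
    print([round(v, 2) for v in row])
```

A robustness check then feeds both the clean and corrupted versions to a model and compares how much its predictions degrade.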

What It Can’t Do (Yet)

BrainIAC was built only for standard structural MRI scans, the bread-and-butter sequences radiologists look at every day. It wasn’t trained on functional MRI, which shows brain activity, or on other specialized scans such as diffusion-weighted imaging. More importantly, this is still research. The team retrospectively analyzed scans collected over many years, which is different from prospective testing, where the AI runs in real time as doctors make decisions. Nobody knows yet whether it actually improves patient outcomes, speeds up diagnosis, or changes treatment choices in clinical practice.

The Bigger Picture

Right now, many medical AI projects reinvent the wheel. One research group spends two years building a brain tumor detector. Another spends two years on an Alzheimer’s screening tool. Each needs separate funding, data collection, and validation.

Foundation models flip this around. Build one system that understands brain anatomy, then quickly adapt it to whatever medical question you’re asking. Need a tool for a newly discovered disease? Fine-tune the foundation model instead of starting from scratch. Rare pediatric cancer with only 30 cases per year? The foundation gives you a head start.

The approach is unlikely to replace specialized AI systems optimized for single tasks at major medical centers. But it could dramatically lower the barrier to developing AI tools for underserved conditions, rare diseases, and resource-limited settings where collecting massive training datasets isn’t realistic.

Kann’s team reports that the model and code are publicly available for research use, meaning other researchers can build on this work. Whether BrainIAC itself becomes widely used or simply serves as a proof of concept, the study shows that one AI system can be adapted across a wide range of brain imaging tasks, from Alzheimer’s to brain cancer.

Disclaimer: This article is based on peer-reviewed research. The AI model described (BrainIAC) is a research tool and has not been approved for clinical use. The findings represent retrospective analysis of existing brain scans and do not demonstrate improved patient outcomes in prospective clinical settings. Readers should not interpret this research as medical advice. Anyone with health concerns should consult qualified healthcare professionals. The performance metrics reported reflect research conditions and may differ in real-world clinical applications.

Paper Notes

Study Limitations

The research focused exclusively on standard structural MRI sequences and did not include diffusion-weighted imaging, functional MRI, or other specialized contrast types. The model was trained on skull-stripped images, limiting its application to intracranial analysis. Although the study drew on 48,965 scans in total and BrainIAC is described as the largest pretrained brain MRI foundation model to date, incorporating additional training data may yield further performance improvements. Image registration was not evaluated as a downstream task, as it is typically treated as a preprocessing step rather than a standalone application. While the model showed strong performance on seven diverse tasks, real-world clinical translation would require prospective validation studies in clinical workflows. No prospective clinical trials have been conducted to demonstrate whether the model improves patient outcomes in practice.

Funding and Disclosures

This study was supported by the National Institutes of Health/National Cancer Institute through grants U54 CA274516 and P50 CA165962. Additional support was provided by the Botha-Chan Low Grade Glioma Consortium, the DMG Precision Medicine Initiative, the ASCO Conquer Cancer Foundation (grant 2022A013157), and the Radiation Oncology Institute (grant ROI2022-9151). The authors declared no competing interests.

Publication Details

Authors: Divyanshu Tak, Biniam A. Garomsa, Anna Zapaishchykova, Tafadzwa L. Chaunzwa, Juan Carlos Climent Pardo, Zezhong Ye, John Zielke, Yashwanth Ravipati, Suraj Pai, Sri Vajapeyam, Maryam Mahootiha, Mitchell Parker, Luke R.G. Pike, Ceilidh Smith, Ariana M. Familiar, Kevin X. Liu, Sanjay Prabhu, Omar Arnaout, Pratiti Bandopadhayay, Ali Nabavizadeh, Sabine Mueller, Hugo J.W.L. Aerts, Raymond Y. Huang, Tina Y. Poussaint, and Benjamin H. Kann | Journal: Nature Neuroscience | Title: A generalizable foundation model for analysis of human brain MRI | DOI: 10.1038/s41593-026-02202-6 | Publication Date: February 5, 2026 | Affiliations: Artificial Intelligence in Medicine Program, Mass General Brigham; Department of Radiation Oncology, Dana-Farber Cancer Institute and Brigham and Women’s Hospital, Harvard Medical School; Memorial Sloan Kettering Cancer Center; Boston Children’s Hospital; University of Pennsylvania; additional affiliated institutions listed in the original publication. | Correspondence: Benjamin H. Kann, M.D.