
In a Nutshell

- An advanced AI model generally matched or outperformed physician baselines across six clinical reasoning experiments, including real emergency room cases drawn from a Boston hospital.

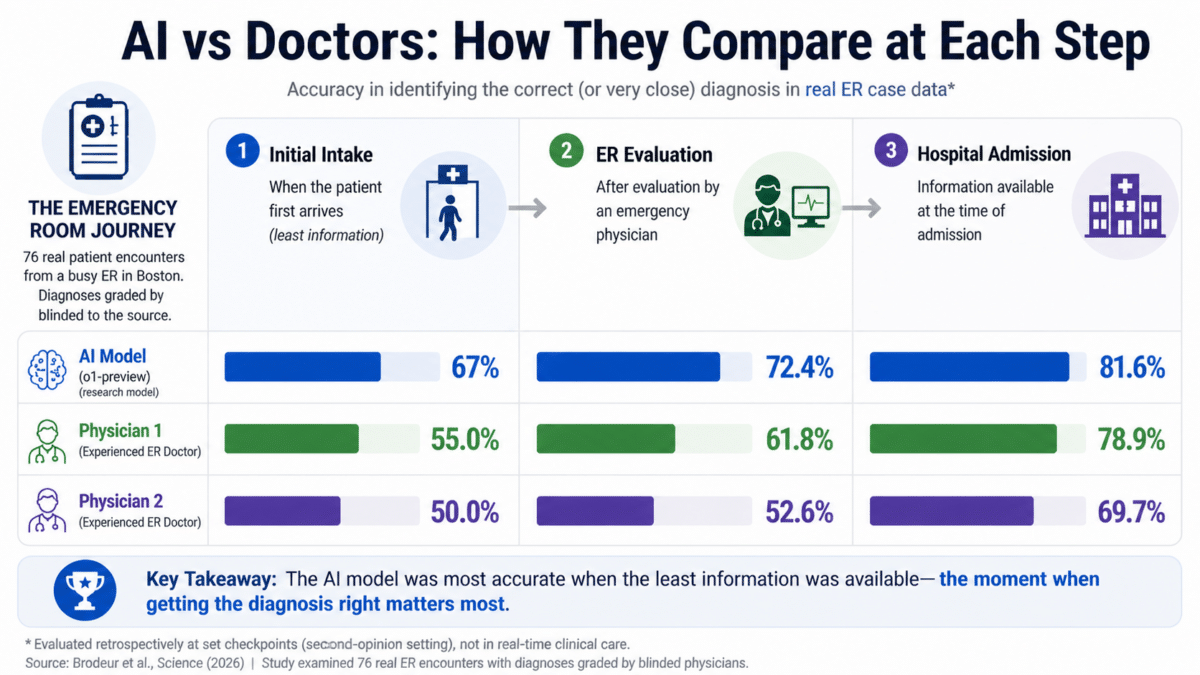

- At the earliest and most critical point of ER care, initial triage, the AI correctly identified the diagnosis or something very close to it about 67% of the time, compared with roughly 55% and 50% for two attending physicians.

- On structured treatment planning cases, the AI scored a median of 89 out of 100, more than doubling the scores of physicians using either AI assistance or conventional resources.

- Researchers caution that the study tested only text-based reasoning and call for real-world clinical trials to determine whether AI tools can translate these results into better patient outcomes.

For more than sixty years, the test for whether a computer could think like a doctor has stayed roughly the same: hand it a tough medical case and see if it can figure out what’s wrong. Generations of software, from primitive programs in the 1950s to rule-based systems in the 1980s, tried and mostly fell short. Now, a landmark study published in Science finds that an advanced AI model has vaulted over that bar, outperforming hundreds of real physicians at diagnosing patients and recommending next steps across multiple experiments, including on messy, real-world cases pulled straight from a busy emergency room.

Led by researchers at Harvard Medical School, Beth Israel Deaconess Medical Center, Stanford University, and other institutions, the study tested a preview version of OpenAI’s o1-series model across six experiments designed to measure the kind of thinking doctors do every day: generating a list of possible diagnoses, choosing the right test to order, estimating how likely a disease is, and deciding on a treatment plan. Across all experiments, the AI generally met or beat the performance of human physicians, sometimes by wide margins.

None of that happened in a vacuum. Diagnostic errors are a persistent and costly problem in American medicine, and the pressure on emergency physicians to make fast, high-stakes decisions with limited information is well documented. Against that backdrop, an AI model that can reliably keep pace with or exceed trained clinicians carries real weight.

AI Had Its Biggest Edge When Information Was Scarce

Perhaps the most eye-opening result came from the emergency room portion of the study. Rather than relying only on polished textbook-style cases, the researchers pulled 76 real patient encounters from the Beth Israel Deaconess Medical Center emergency department in Boston and had the AI go head-to-head with two attending physicians. Crucially, the physicians grading the results were kept in the dark about which answers came from a human and which came from a machine. The blinding worked well: one evaluator correctly identified the source only 15% of the time, while the other managed just 3%.

Researchers created three snapshots of each patient’s journey through the ER: the initial intake notes, the evaluation by an emergency physician, and the information available at the time of hospital admission. At each checkpoint, the AI and the two attending physicians each produced a list of up to five possible diagnoses, and two other physicians graded those lists.

At the earliest and most information-scarce point, the AI identified the correct or very close diagnosis about 67% of the time. The two attending physicians hit that mark roughly 55% and 50% of the time, respectively. As more information became available later, everyone improved, but the AI maintained its lead throughout. By the time of hospital admission, the AI was correct in about 82% of cases, compared with roughly 79% and 70% for the two physicians. Its biggest advantage appeared at the exact moment when getting the call right matters most: when a patient first walks through the door.
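The size of that early lead can be checked directly from the rounded percentages reported above. The short Python sketch below is only an illustration: the dictionary layout and the `ai_lead` helper are assumptions made for this example, not anything from the study, and the middle checkpoint is left out because its figures are not given here.

```python
# Rounded accuracy figures (percent) as reported in the article.
# The structure and names are illustrative assumptions, not the study's data format.
reported = {
    "triage": {"ai": 67, "physician_1": 55, "physician_2": 50},
    "admission": {"ai": 82, "physician_1": 79, "physician_2": 70},
}


def ai_lead(stage):
    """Return the AI's lead over each physician, in percentage points."""
    scores = reported[stage]
    return {
        name: scores["ai"] - value
        for name, value in scores.items()
        if name != "ai"
    }


for stage in reported:
    print(stage, ai_lead(stage))
```

On these rounded figures, the AI led by 12 and 17 percentage points at triage, and by 3 and 12 points at admission, which is consistent with the gap being widest when information was scarcest.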

Doctors Bested on Notoriously Hard Textbook Cases

Much of the study was built around a set of famously difficult diagnostic puzzles published by the New England Journal of Medicine. These cases have been used to test diagnostic tools since the 1950s. After running 143 of them through the AI, the model included the correct diagnosis somewhere in its list of possibilities in about 78% of cases. Its very first guess was correct 52% of the time. When graders also counted diagnoses that were very close or would have been clinically helpful, not just exact matches, the accuracy climbed to nearly 98%.

In a head-to-head comparison using 70 of those cases, the newer o1-series model landed the exact or very close diagnosis in about 89% of cases, compared with roughly 73% for GPT-4 in a previous study.

Beyond naming diseases, the AI proved capable of selecting next steps. When asked to pick the next diagnostic test a doctor should order, it chose correctly in about 88% of cases. In another 11%, its suggestion was judged helpful even if not the exact test used in the actual case. Only about 1.5% of its recommendations were deemed unhelpful.

Researchers also tested whether the AI could demonstrate the kind of structured thinking that medical schools spend years building. Using 20 virtual patient cases from a training program developed by the New England Journal of Medicine, they scored the AI’s written reasoning on a validated 10-point scale. It achieved a perfect score on 78 out of 80 graded responses, well ahead of GPT-4, attending physicians, and doctors in training.

On structured treatment planning vignettes, where the question shifts from “what does the patient have?” to “what should we do about it?”, the AI scored a median of 89 out of 100 points. GPT-4 alone scored a median of 42. Physicians using GPT-4 as a tool scored 41. Physicians using conventional resources scored 34.

How AI Handled Medical Probability, and What It Still Can’t Do

Doctors are expected to think in probabilities, estimating how likely a diagnosis is before and after a test result comes in. Researchers tested the AI on five primary care scenarios previously given to a nationally representative sample of 553 medical practitioners, including resident physicians, attending physicians, nurse practitioners, and physician assistants. Overall, the AI performed similarly to GPT-4, with modest improvements that varied by case. Human clinicians, by contrast, showed far wider variability in their estimates than either AI model. In the cardiac ischemia scenario specifically, the newer AI substantially outperformed both GPT-4 and human clinicians.
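To make "before and after a test result" concrete: the standard textbook way to update a probability is to convert it to odds, multiply by the test's likelihood ratio, and convert back. The Python sketch below shows that calculation. The function name and every number in the example are hypothetical, chosen purely for illustration, and are not drawn from the study's scenarios.

```python
def post_test_probability(pretest, sensitivity, specificity, positive=True):
    """Update a disease probability after a test result using likelihood ratios.

    Assumes 0 < pretest < 1, and specificity < 1 for a positive result
    (specificity > 0 for a negative one) so the ratio is defined.
    """
    if not 0 < pretest < 1:
        raise ValueError("pretest must be strictly between 0 and 1")
    if positive:
        # Positive likelihood ratio: true-positive rate over false-positive rate.
        likelihood_ratio = sensitivity / (1 - specificity)
    else:
        # Negative likelihood ratio: false-negative rate over true-negative rate.
        likelihood_ratio = (1 - sensitivity) / specificity
    pretest_odds = pretest / (1 - pretest)
    posttest_odds = pretest_odds * likelihood_ratio
    return posttest_odds / (1 + posttest_odds)


# Hypothetical example: 10% pre-test probability, 90% sensitivity, 80% specificity.
after_positive = post_test_probability(0.10, 0.90, 0.80, positive=True)
after_negative = post_test_probability(0.10, 0.90, 0.80, positive=False)
print(f"{after_positive:.3f}", f"{after_negative:.3f}")
```

With these made-up inputs, a positive result raises the estimate from 10% to about 33%, while a negative result lowers it to about 1.4%. Estimates like these are the kind of before-and-after judgment the probability scenarios were probing.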

The authors are clear that none of this means an AI should replace a doctor. The study tested only text-based reasoning. Real medicine involves hearing the strain in a patient’s voice, watching how someone walks into a room, performing a physical examination, and interpreting imaging studies, none of which were part of this evaluation. The researchers note that current AI models appear more limited when reasoning over non-text inputs, a real constraint given how central physical exams, lab images, and diagnostic scans are to everyday clinical practice.

The cases were also drawn primarily from internal medicine and emergency medicine, leaving open how the AI would perform in surgery or other specialties. And while the emergency room experiment used actual patient data, the task of providing a second opinion at set checkpoints is better understood as a proof of concept than a simulation of how AI would function inside a real clinical workflow.

Still, the trajectory is hard to ignore. From the first diagnostic computer programs of the 1950s to this study’s results, the field has been climbing the same mountain for decades. Researchers argue that AI has now “eclipsed most benchmarks of clinical reasoning” and that the moment calls for rigorous, real-world clinical trials that embed these tools into actual workflows and measure whether they genuinely improve patient outcomes. The gap between what AI can do in a controlled study and what patients experience in an exam room remains wide, but it is closing.

Paper Notes

Limitations

The study tested only a preview version of the o1 model, which has since been supplanted by newer versions like OpenAI’s o3. The experiments covered just six aspects of clinical reasoning out of dozens that have been identified as relevant to patient care. Cases were drawn predominantly from internal medicine and emergency medicine, leaving surgical specialties and other fields largely unexamined. The emergency department experiment, while grounded in real patient data, is best understood as a proof of concept, since decisions in that setting typically center on triage and disposition rather than pure diagnostic accuracy. Several of the benchmarks used relied on cases curated by clinicians or developed for educational purposes, which may overstate AI performance when compared with the messier data of actual clinical workflows. Researchers also did not always find robust improvements in the o1 model compared with previous models, particularly on “cannot-miss” diagnoses in the NEJM Healer cases and on the landmark diagnostic cases.

Funding and Disclosures

Funding came from multiple sources: NIH/NIEHS award R01ES032470, the Harvard Medical School Dean’s Innovation Award for Artificial Intelligence, Macy Foundation awards B25-15 and P25-04, Moore Foundation award 12409, NIH/NIAID 1R01AI17812101, NIH-NCATS UM1TR004921, the Stanford Bio-X Interdisciplinary Initiatives Seed Grants Program, NIH U01 NS134358, and a Stanford RAISE Health Seed Grant 2024. Competing interests include: Adam Rodman is a Visiting Researcher at Google DeepMind; Eric Horvitz is employed by Microsoft; Jonathan Chen is cofounder of Reaction Explorer LLC and a paid medical expert witness; Zahir Kanjee discloses royalties from Oakstone Publishing and Wolters Kluwer; Andrew Olson discloses employment of his spouse by Exact Sciences; Raja-Elie Abdulnour is employed by the Massachusetts Medical Society and has consulted for Lumeris. All other authors declare no competing interests.

Publication Details

Authors: Peter G. Brodeur, Thomas A. Buckley, Zahir Kanjee, Ethan Goh, Evelyn Bin Ling, Priyank Jain, Stephanie Cabral, Raja-Elie Abdulnour, Adrian D. Haimovich, Jason A. Freed, Andrew Olson, Daniel J. Morgan, Jason Hom, Robert Gallo, Liam G. McCoy, Haadi Mombini, Christopher Lucas, Misha Fotoohi, Matthew Gwiazdon, Daniele Restifo, Daniel Restrepo, Eric Horvitz, Jonathan Chen, Arjun K. Manrai, and Adam Rodman.

Journal: Science, Vol. 392, Issue 6797 (April 30, 2026)

Title: “Performance of a large language model on the reasoning tasks of a physician”

DOI: 10.1126/science.adz4433

Submitted: June 2, 2025; Accepted: February 23, 2026