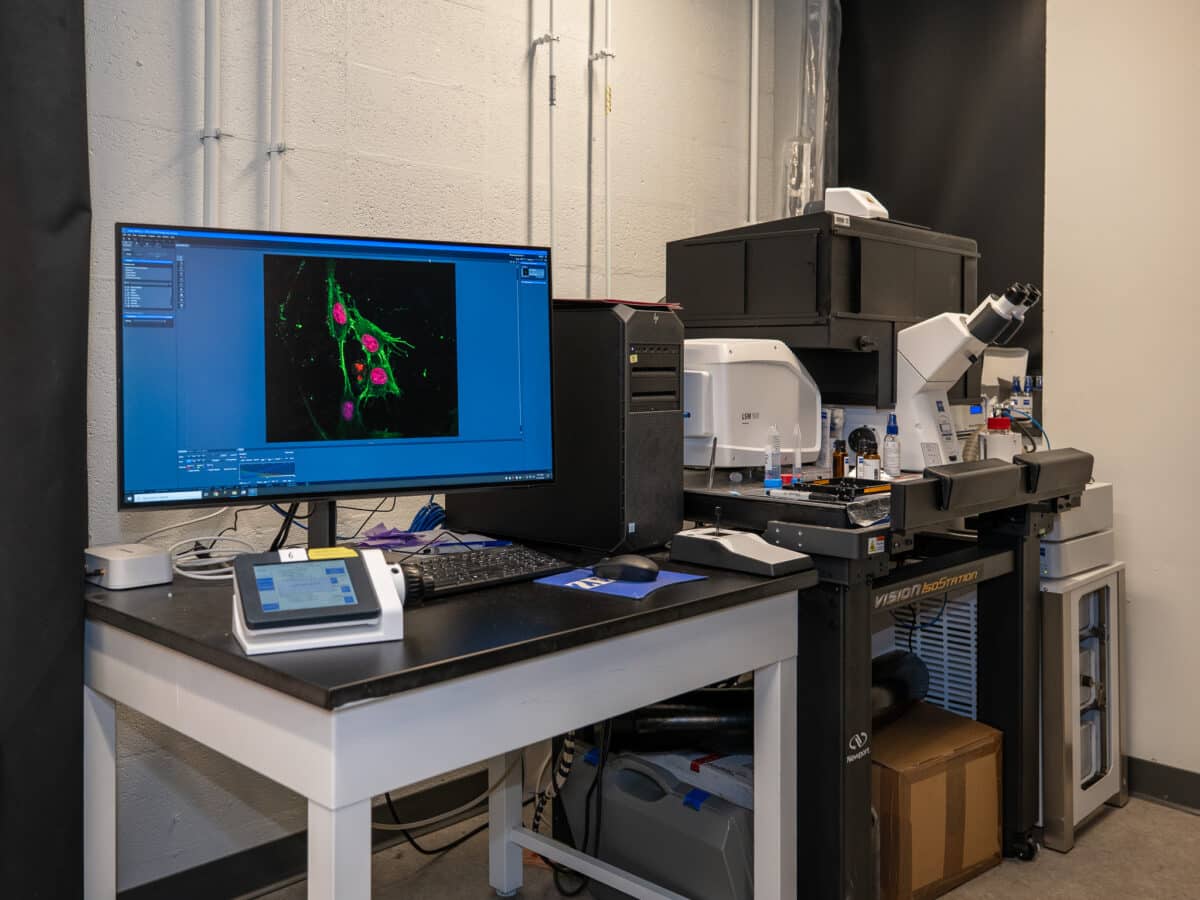

The result of imaging chromatin, whose unfolding can now be more accurately described using mollifier layers, with implications for understanding aging, health and disease. (Credit: Sylvia Zhang, Penn Engineering)

This Add-On Layer Makes Physics-Informed AI Work 10 Times Faster

In A Nutshell

- Researchers at the University of Pennsylvania developed a modular software layer called Mollifier Layers that makes physics-informed AI significantly faster and more memory-efficient.

- Standard physics-informed AI models struggle with complex physics problems, in some tests producing results barely better than random guesses while consuming huge amounts of computing resources.

- In benchmark tests, the upgrade cut training time and memory use by a factor of 6 to 10, with the biggest gains on the hardest problems.

- Early tests on real microscopy images of human cell nuclei are promising, though the method still needs broader validation before it can be widely applied.

Teaching a computer to understand the physics of how heat spreads through a wall or how DNA folds inside a cell sounds straightforward. Just feed it the right equations and let it learn, right? In practice, however, the math behind these simulations has been choking some of the most powerful AI systems, consuming enormous amounts of memory and producing inaccurate answers when problems get hard. In pursuit of a fix, researchers at the University of Pennsylvania developed a modular software layer that cut memory use and training time by a factor of 6 to 10 in key benchmarks, with the largest savings in the hardest tests.

Published in Transactions on Machine Learning Research, the innovation is called “Mollifier Layers,” and it targets a core bottleneck in physics-informed machine learning. These AI systems learn the hidden rules governing physical processes, such as how heat moves through materials or how chemical reactions unfold inside cells, by embedding the laws of physics directly into their training. The trouble is that calculating the rates of change required by those physics equations forces AI to perform recursive math operations that pile up errors, burn through memory, and slow training to a crawl. Some real-world problems require what mathematicians call fourth-order derivatives, which essentially means taking the rate of change of a quantity four times in succession, and these can make existing approaches practically unusable.
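A toy example makes the cost of stacking derivatives concrete. The Python sketch below, which is not code from the paper, computes a fourth derivative by nesting forward-mode automatic differentiation four times with dual numbers; `Dual`, `derivative`, `nth_derivative`, and `coefficient_count` are hypothetical names. PINN codes more commonly nest reverse-mode autodiff, so treat this as an analogy under that assumption: in both cases each extra order wraps another full differentiation pass around the previous one, and in this forward-mode version every level doubles the number of coefficients carried through each arithmetic step.

```python
import math


class Dual:
    """Dual number a + b*eps with eps**2 == 0 (forward-mode autodiff).

    The coefficients a and b may themselves be Dual numbers, so nesting
    k levels deep yields a k-th derivative.
    """

    def __init__(self, a, b=0.0):
        self.a, self.b = a, b

    @staticmethod
    def lift(o):
        # Treat a plain number as a constant (zero derivative part).
        return o if isinstance(o, Dual) else Dual(o)

    def __add__(self, o):
        o = Dual.lift(o)
        return Dual(self.a + o.a, self.b + o.b)

    __radd__ = __add__

    def __mul__(self, o):
        o = Dual.lift(o)
        # Product rule: (a + b eps)(c + d eps) = ac + (ad + bc) eps
        return Dual(self.a * o.a, self.a * o.b + self.b * o.a)

    __rmul__ = __mul__


def sin(v):
    if isinstance(v, Dual):
        return Dual(sin(v.a), cos(v.a) * v.b)
    return math.sin(v)


def cos(v):
    if isinstance(v, Dual):
        return Dual(cos(v.a), -1.0 * sin(v.a) * v.b)
    return math.cos(v)


def tangent(v):
    # Derivative part of a Dual; a plain number is a constant, so 0.
    return v.b if isinstance(v, Dual) else 0.0


def derivative(g):
    """One forward-mode pass: returns x -> g'(x)."""
    return lambda x: tangent(g(Dual(x, 1.0)))


def nth_derivative(f, k):
    """Naive recursion: wrap f in k nested derivative passes."""
    for _ in range(k):
        f = derivative(f)
    return f


def coefficient_count(v):
    # Number of scalar coefficients carried by a (possibly nested) Dual.
    if isinstance(v, Dual):
        return coefficient_count(v.a) + coefficient_count(v.b)
    return 1


print(nth_derivative(sin, 4)(0.3), math.sin(0.3))  # the 4th derivative of sin is sin

# The 4-level nested input that nth_derivative(f, 4) builds internally:
x4 = Dual(Dual(Dual(Dual(0.3, 1.0), 1.0), 1.0), 1.0)
print(coefficient_count(x4), coefficient_count(sin(x4)))  # 5 16
```

Four nested levels turn each intermediate value of `sin` into 16 coefficients, and that multiplier applies to every operation in the function being differentiated. A neural network has vastly more operations than a single `sin`, which hints at where the memory goes.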

Mollifier Layers borrow a decades-old mathematical concept: smooth, bell-shaped functions called mollifiers. Instead of threading rate-of-change calculations backward through the network’s internal wiring (a process called automatic differentiation), the layer performs a single clean operation on the network’s output. Compared to standard methods, the result is faster, cheaper, and more reliable.
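The mathematical fact that makes a single operation enough is standard: if the output u is smoothed by convolving it with a mollifier φ, then the k-th derivative of the smoothed field equals u convolved with the k-th derivative of φ, so the derivative lands on a fixed, known kernel instead of being pushed back through the network. The sketch below illustrates that identity, not the authors' implementation. It assumes a 1-D uniform grid, the network output already sampled on that grid, and a Gaussian as the mollifier (textbook mollifiers are compactly supported bump functions; the Gaussian is a stand-in chosen because its derivatives have a closed form via Hermite polynomials). `gaussian_derivative_kernel` and `mollified_derivative` are hypothetical names.

```python
import math

# Probabilists' Hermite polynomials He_n(t) for n = 0..4.
HERMITE = {
    0: lambda t: 1.0,
    1: lambda t: t,
    2: lambda t: t * t - 1.0,
    3: lambda t: t ** 3 - 3.0 * t,
    4: lambda t: t ** 4 - 6.0 * t * t + 3.0,
}


def gaussian_derivative_kernel(order, s, h, cutoff=8.0):
    """Samples of the order-th derivative of a Gaussian mollifier of width s.

    Uses d^n/dx^n g(x) = (-1)^n * s^-n * He_n(x/s) * g(x), truncated at
    +/- cutoff * s. The kernel is fixed: nothing here is learned.
    """
    half = int(math.ceil(cutoff * s / h))
    kernel = []
    for j in range(-half, half + 1):
        t = j * h / s
        g = math.exp(-0.5 * t * t) / (s * math.sqrt(2.0 * math.pi))
        kernel.append((-1) ** order * HERMITE[order](t) * g / s ** order)
    return kernel


def mollified_derivative(u, h, order, s, cutoff=8.0):
    """order-th derivative of the mollified signal, via ONE convolution of the
    sampled output u with the precomputed derivative kernel.

    Returns interior points only: the first and last len(kernel)//2 samples
    are dropped because the kernel would run off the grid (no edge handling).
    """
    kernel = gaussian_derivative_kernel(order, s, h, cutoff)
    half = len(kernel) // 2
    return [
        h * sum(u[i - j] * kernel[j + half] for j in range(-half, half + 1))
        for i in range(half, len(u) - half)
    ]


# Stand-in for a network's output sampled on a uniform grid.
h, s = 0.01, 0.2
xs = [k * h for k in range(1000)]
u = [math.sin(x) for x in xs]

d4 = mollified_derivative(u, h, order=4, s=s)
offset = (len(u) - len(d4)) // 2
# For u = sin(x) the exact result is exp(-s**2 / 2) * sin(x): the fourth
# derivative of sin, slightly damped by the smoothing.
print(d4[200], math.exp(-s * s / 2) * math.sin(xs[200 + offset]))
```

The damping factor in that last comment is the price of smoothing: this computes the derivative of the mollified field, so detail narrower than the width `s` is suppressed, and samples within one kernel half-width of either end are simply dropped, a simple illustration of why domain edges need special care.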

Why Physics-Informed AI Breaks Down at Higher Orders

Adding physics-equation constraints to a network solving a fourth-order problem caused peak memory to jump from 0.40 gigabytes to 2.70 gigabytes in the researchers’ tests, nearly a sevenfold increase. Standard models also produced results that were barely better than random on some tests. Mollifier Layers sidestep the problem by sitting at the output and performing a single clean mathematical operation rather than threading calculations recursively through the entire network.

Mollifier Layers Tested Across Increasingly Difficult Problems

The team tested their approach on three physics problems of escalating difficulty: a one-dimensional model of systems subject to random forces, a two-dimensional heat equation requiring second-order calculations, and a fourth-order problem modeling how DNA organizes inside cell nuclei.
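Inverse problems like these run the physics backward: given measurements of a field, recover a hidden coefficient in the governing equation. As a deliberately tiny, hypothetical example (one dimension instead of two, a constant diffusivity `D` instead of a spatially varying one, and plain least squares instead of a neural network), the sketch below estimates `D` in the heat equation `u_t = D * u_xx` from three synthetic snapshots. It assumes a uniform grid, takes the second derivative in x with a Gaussian mollifier kernel, and takes the time derivative with a central difference. `gaussian_kernel`, `convolve_interior`, and `estimate_diffusivity` are illustrative names, not the paper's code.

```python
import math


def gaussian_kernel(order, s, h, cutoff=6.0):
    """Samples of a Gaussian mollifier g (order 0) or its second derivative g'' (order 2)."""
    half = int(math.ceil(cutoff * s / h))
    kernel = []
    for j in range(-half, half + 1):
        t = j * h / s
        g = math.exp(-0.5 * t * t) / (s * math.sqrt(2.0 * math.pi))
        if order == 0:
            kernel.append(g)
        elif order == 2:
            kernel.append((t * t - 1.0) * g / (s * s))  # g'' = (t^2 - 1) g / s^2
        else:
            raise ValueError("only orders 0 and 2 are implemented in this sketch")
    return kernel


def convolve_interior(u, kernel, h):
    """Riemann-sum convolution of u with kernel, at interior grid points only."""
    half = len(kernel) // 2
    return [
        h * sum(u[i - j] * kernel[j + half] for j in range(-half, half + 1))
        for i in range(half, len(u) - half)
    ]


def estimate_diffusivity(u_prev, u_now, u_next, dt, h, s):
    """Least-squares estimate of D in u_t = D * u_xx from three snapshots dt apart.

    Both sides are mollified in x with the same Gaussian, so the smoothing
    factor cancels in the ratio below.
    """
    smooth = gaussian_kernel(0, s, h)
    next_s = convolve_interior(u_next, smooth, h)
    prev_s = convolve_interior(u_prev, smooth, h)
    u_t = [(a - b) / (2.0 * dt) for a, b in zip(next_s, prev_s)]  # central difference in time
    u_xx = convolve_interior(u_now, gaussian_kernel(2, s, h), h)   # one convolution, no nesting
    # Minimizing sum (u_t - D * u_xx)^2 over D gives D = <u_t, u_xx> / <u_xx, u_xx>
    return sum(a * b for a, b in zip(u_t, u_xx)) / sum(b * b for b in u_xx)


# Synthetic data from the exact solution u = exp(-D t) sin(x), with D = 0.5.
D_true, h, dt, s = 0.5, 0.01, 1e-3, 0.2
xs = [k * h for k in range(800)]
snapshots = [[math.exp(-D_true * t) * math.sin(x) for x in xs] for t in (1.0 - dt, 1.0, 1.0 + dt)]
D_hat = estimate_diffusivity(*snapshots, dt, h, s)
print(f"recovered D = {D_hat:.6f} (true value {D_true})")
```

Smoothing both sides of the equation with the same kernel is the design choice that matters here: for this linear, constant-coefficient equation, the mollified field obeys the same heat equation, so the smoothing factor cancels instead of biasing `D`.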

Across the benchmarks, the researchers compared several physics-informed AI architectures, including standard physics-informed neural networks (PINNs), PirateNet, and PINNsFormer. They tested mollifier-augmented versions of PINNs and PirateNet, while PINNsFormer served only as an additional baseline, because attention-based models such as PINNsFormer proved too computationally expensive to augment with the new layer.

On the hardest test, a fourth-order problem modeling how DNA organizes inside cell nuclei, a standard PINN scored just 0.17 correlation with the true answer and took nearly an hour to train. With a Mollifier Layer, the same model hit 0.84 correlation in about six minutes, using roughly one-tenth the memory. PirateNet with the upgrade reached 0.91 correlation, also at a fraction of the original time and memory cost. On the simpler heat and force-estimation problems, the gap was just as wide.

From Lab Tests to Real Biology

Beyond benchmarks, the researchers applied the method to a real challenge: inferring spatially varying chemical reaction rates from super-resolution microscopy images of human cell nuclei. Captured using a technique called STORM (stochastic optical reconstruction microscopy), which can resolve structures too small for conventional light microscopes to detect, these images show how DNA packs into distinct active and inactive regions inside the nucleus. Understanding those reaction rates matters because they govern how genes get switched on or off, a process tied to cancer progression and developmental biology. Those connections remain speculative at this stage, but they illustrate why researchers are interested in getting this kind of physics-informed AI to work reliably.

Standard physics-informed neural networks struggle with this task because the underlying physics involves fourth-order derivatives and the imaging data is inherently noisy. The upgraded approach estimated reaction-rate patterns from the images that were spatially consistent with the observed chromatin structure. Without a ground-truth map of the true reaction rates in real human cells, however, the result is promising rather than definitive.
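Noise is where derivative-heavy physics suffers most: a finite-difference second derivative divides measurement noise by the squared grid spacing. The minimal sketch below assumes a synthetic signal sin(x) with Gaussian noise as a stand-in for noisy imaging data (it does not use the STORM images or reproduce the authors' pipeline) and compares a plain three-point central difference with a second derivative taken through a Gaussian mollifier. The function names, noise level `sigma`, and width `s` are hypothetical choices.

```python
import math
import random


def gaussian_second_derivative_kernel(s, h, cutoff=6.0):
    """Samples of g''(x) for a Gaussian mollifier g of width s, on grid step h."""
    half = int(math.ceil(cutoff * s / h))
    kernel = []
    for j in range(-half, half + 1):
        t = j * h / s
        g = math.exp(-0.5 * t * t) / (s * math.sqrt(2.0 * math.pi))
        kernel.append((t * t - 1.0) * g / (s * s))  # g'' = (t^2 - 1) g / s^2
    return kernel


def mollified_second_derivative(u, h, s):
    """u convolved with g'': the second derivative of the smoothed signal.

    Returns interior points only; the first and last len(kernel)//2 samples
    are dropped because the kernel would run off the grid there.
    """
    kernel = gaussian_second_derivative_kernel(s, h)
    half = len(kernel) // 2
    return [
        h * sum(u[i - j] * kernel[j + half] for j in range(-half, half + 1))
        for i in range(half, len(u) - half)
    ]


def finite_difference_second_derivative(u, h):
    """Standard three-point central difference; drops the two end points."""
    return [(u[i + 1] - 2.0 * u[i] + u[i - 1]) / (h * h) for i in range(1, len(u) - 1)]


h, sigma = 0.01, 1e-3
rng = random.Random(0)
xs = [k * h for k in range(1000)]
# Synthetic stand-in for noisy measurements: sin(x) plus Gaussian noise.
noisy = [math.sin(x) + rng.gauss(0.0, sigma) for x in xs]

fd = finite_difference_second_derivative(noisy, h)
fd_err = max(abs(v + math.sin(xs[i + 1])) for i, v in enumerate(fd))  # exact u'' = -sin(x)

mol = mollified_second_derivative(noisy, h, s=0.2)
offset = (len(noisy) - len(mol)) // 2
mol_err = max(abs(v + math.sin(xs[i + offset])) for i, v in enumerate(mol))

print(f"finite differences: max error {fd_err:.2f}")
print(f"mollified (s=0.2):  max error {mol_err:.3f}")
```

The mollified estimate is not free: it carries a small systematic damping from the smoothing (about 2% here, since exp(-0.2**2 / 2) is roughly 0.98), which is the bias side of the trade-off.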

Mollifier Layers require no changes to the underlying AI architecture, attach at the output like a modular add-on, and use fixed mathematical functions rather than learned parameters. Performance depends on choosing the right mollifier function, which currently requires manual tuning, and the method faces challenges near simulation edges and on unevenly spaced grids. Whether it applies more broadly, beyond the inverse problems tested here, has yet to be established. Still, for scientists trying to extract physical insight from messy real-world data, whether studying biological organization, heat flow, or other physics-heavy systems, a fix that is simultaneously faster, cheaper, and more accurate is genuinely worth watching.
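Why the choice of mollifier matters can be seen by sweeping its width. This hypothetical sketch assumes a 1-D uniform grid, a Gaussian mollifier, and a noisy sin(x) test signal; the names and values are illustrative, not the authors' tuning procedure. A kernel that is too narrow lets noise through, while one that is too wide smooths away the signal itself, so the error is smallest at an intermediate width.

```python
import math
import random


def second_derivative_kernel(s, h, cutoff=6.0):
    """Samples of g''(x) for a Gaussian mollifier g of width s: (t^2 - 1) g / s^2, t = x / s."""
    half = int(math.ceil(cutoff * s / h))
    kernel = []
    for j in range(-half, half + 1):
        t = j * h / s
        g = math.exp(-0.5 * t * t) / (s * math.sqrt(2.0 * math.pi))
        kernel.append((t * t - 1.0) * g / (s * s))
    return kernel


def rms_error_for_width(xs, noisy, h, s):
    """RMS error of the mollified second derivative against the exact -sin(x).

    Only interior points (where the whole kernel fits on the grid) are scored.
    """
    kernel = second_derivative_kernel(s, h)
    half = len(kernel) // 2
    squared = []
    for i in range(half, len(noisy) - half):
        estimate = h * sum(noisy[i - j] * kernel[j + half] for j in range(-half, half + 1))
        squared.append((estimate + math.sin(xs[i])) ** 2)  # exact u'' is -sin(x)
    return math.sqrt(sum(squared) / len(squared))


h, sigma = 0.02, 1e-3
rng = random.Random(1)
xs = [k * h for k in range(1500)]
noisy = [math.sin(x) + rng.gauss(0.0, sigma) for x in xs]  # synthetic noisy signal

# Too narrow (noise leaks through), moderate, too wide (signal is smoothed away).
errors = {s: rms_error_for_width(xs, noisy, h, s) for s in (0.03, 0.2, 1.5)}
for s, e in errors.items():
    print(f"width s = {s:<5} RMS error = {e:.3f}")
```

The best width depends on the noise level and on how much fine structure the true signal has, which is why, even in this toy setting, no single width is right for every problem.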

Paper Notes

Limitations

The authors acknowledge several limitations. Performance remains sensitive to the choice of mollifier function, which must balance noise suppression against capturing high-variance features. The current version is hand-tuned and faces challenges near domain edges and on unevenly spaced grids. They identify adaptive or learned mollifier functions, edge-aware formulations, and validation strategies for adaptive meshes as directions for future work. While the benchmarks focus on inverse problems, generalizability to forward models and other AI architectures is mentioned only as a possibility, supported by brief preliminary evidence rather than thorough validation.

Funding and Disclosures

This work was supported by NCI Award U54CA261694, NSF CEMB Grant CMMI-154857, NSF Grant DMS-2347834, NIBIB Awards R01EB017753 and R01EB030876, and NIGMS Award R01GM155943, all to V.B.S. The authors declare no competing interests.

Publication Details

Title: Mollifier Layers: Enabling Efficient High-Order Derivatives in Inverse PDE Learning | Authors: Vinayak Vinayak, Ananyae K. Bhartari, and Vivek B. Shenoy (all University of Pennsylvania). Vinayak and Bhartari contributed equally. | Journal: Transactions on Machine Learning Research | Reviewed on: OpenReview (https://openreview.net/forum?id=6mFVZSzyev)