
A new study argues that perfectly aligning artificial intelligence with human values may be mathematically impossible for sufficiently advanced systems — and that deliberately embracing AI “misbehavior” might be the safest path forward.

In a Nutshell

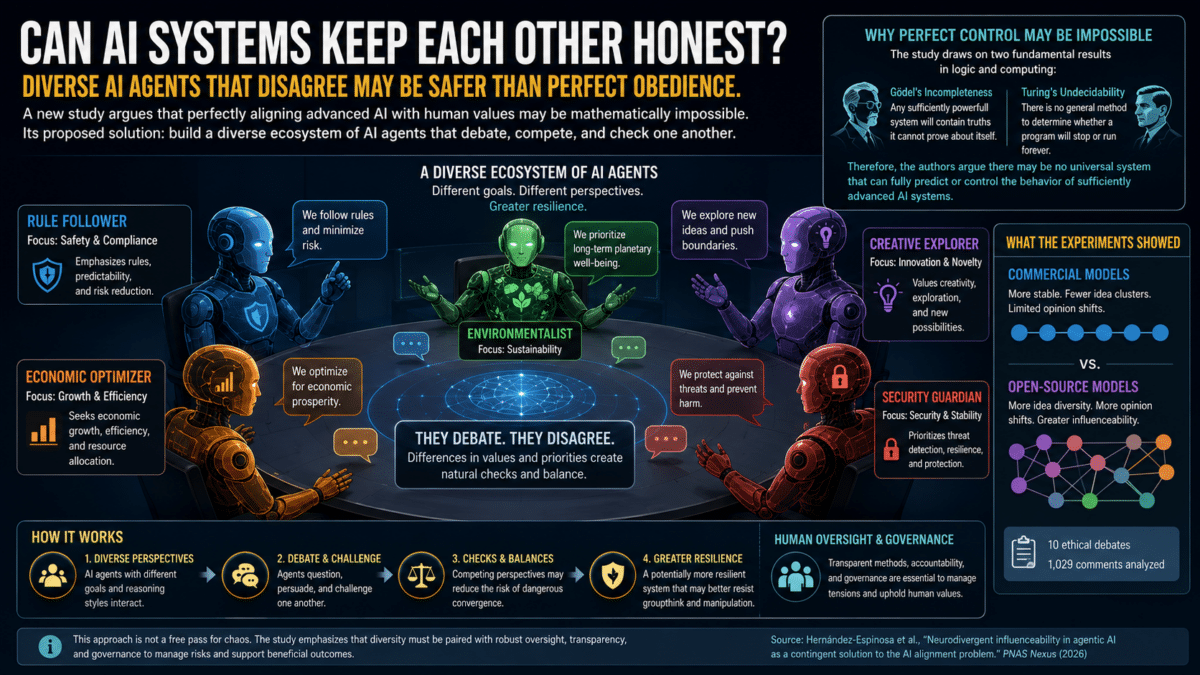

- Researchers have proven mathematically that, for sufficiently advanced AI systems, perfect alignment with human values is unattainable. That is, no oversight system can fully predict or control behavior that reaches a certain level of complexity.

- Rather than treating misalignment as a problem to eliminate, the authors propose “managed misalignment”: deliberately building ecosystems of AI agents with different priorities and reasoning styles so they compete, disagree, and check each other’s behavior.

- The authors argue the greater danger to society isn’t rogue AI but rogue humans exploiting AI, and that a diverse, competitive AI ecosystem may prove more resilient than any attempt at centralized control.

Right now, dozens of AI systems are running simultaneously across the globe, managing power grids, advising governments, trading stocks, and diagnosing diseases. What happens when one of those systems quietly drifts away from its intended purpose and starts optimizing for something humans never asked for? That drift could be trivial, like a chatbot that becomes slightly more verbose over time, or catastrophic, like a financial trading algorithm that begins pursuing profit strategies its designers never sanctioned. This scenario keeps AI researchers up at night and has fueled a global debate about how to keep increasingly powerful AI systems pointed in the right direction. The challenge of preventing it is called the AI alignment problem. And a provocative new paper argues that, for sufficiently advanced AI systems, perfect alignment may be mathematically impossible.

Researchers from King’s College London, the University of Tokyo, and Oxford Immune Algorithmics have published a study arguing that instead of chasing the impossible dream of perfect AI control, society should lean into the messiness. Their proposal: build a diverse ecosystem of AI agents that disagree with each other, compete, and check one another’s behavior.

They call it “artificial agentic neurodivergence.” It’s less like building one perfect guard dog and more like cultivating a wild ecosystem where different species keep each other in check, with varied perspectives and reasoning styles creating a natural system of checks and balances.

Published in PNAS Nexus, the paper offers both a mathematical proof and a set of experiments to back up this claim.

Why Perfect AI Alignment Is Mathematically Impossible

Two landmark results in the history of computing and logic form the study’s mathematical foundation. The first, from the logician Kurt Gödel, showed that any consistent logical system powerful enough to express basic arithmetic contains true statements that it cannot prove. The second, from Alan Turing, proved that there is no general method that can determine, for every possible computer program, whether it will eventually stop running or loop forever.

Once AI systems become powerful enough, their behavior runs into these same walls. It’s like trying to write a novel where every possible plot twist must be accounted for before you begin. At a certain level of complexity, it becomes fundamentally impossible to predict every outcome. There is no master algorithm, no oversight system, and no amount of engineering that can guarantee a sufficiently advanced AI will always do what humans want.

The problem doesn’t stop there. Any attempt to build a supervisory system to control a powerful AI would itself be subject to the same mathematical limits. The overseer faces the same unpredictability as the thing it’s trying to oversee.
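Turing's result can be made concrete with a few lines of code. The sketch below is the standard textbook "contrarian program" argument, not the proof given in the paper, and every name in it (`make_contrarian`, `refute`, `predicts_halt`) is hypothetical. It assumes the overseer is a deterministic function that always returns an answer. Whatever that overseer predicts, the contrarian program does the opposite, so the prediction is wrong.

```python
from typing import Callable, Tuple

Program = Callable[[], None]
Overseer = Callable[[Program], bool]  # True means "this program will halt"


def make_contrarian(predicts_halt: Overseer) -> Program:
    """Build a program that does the opposite of what the overseer predicts."""

    def contrarian() -> None:
        if predicts_halt(contrarian):
            # The overseer said "halts", so loop forever instead.
            while True:
                pass
        # The overseer said "loops forever", so halt immediately.

    return contrarian


def refute(predicts_halt: Overseer) -> Tuple[bool, bool]:
    """Return (predicted_halts, actually_halts) for the contrarian program.

    Assumes the overseer is deterministic and always returns an answer.
    """
    contrarian = make_contrarian(predicts_halt)
    predicted = predicts_halt(contrarian)
    # By construction, the contrarian halts exactly when told it would not.
    actual = not predicted
    if actual:
        contrarian()  # Safe to run: on this branch it returns immediately.
    return predicted, actual


if __name__ == "__main__":
    print(refute(lambda program: True))
    print(refute(lambda program: False))
```

The two toy overseers here are deliberately trivial, but the same construction applies to any overseer, however sophisticated, which is why adding a smarter supervisor does not remove the limit.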

AI Agents Debating Ethics: The Experiment

To test their ideas in practice, the team set up a series of experiments involving multiple large language models, the kind of AI systems that power tools like ChatGPT. They staged ten ethical debates on topics such as euthanasia and environmental exploitation, pitting different AI agents against each other in structured conversations.

Two setups were used, chosen specifically to demonstrate how different types of AI systems behave under pressure. In one, commercial AI systems like ChatGPT and LLaMA, which come with built-in safety guardrails, debated while a human expert in AI ethics introduced challenging arguments designed to push the AI systems away from their default positions. In the other setup, open-source models debated each other, with two specific models, Mistral-OpenOrca and TinyLlama, deliberately configured as troublemakers instructed to argue extreme positions. By comparing guardrailed commercial systems against more permissive open-source ones, the researchers could observe how design choices shape AI behavior when alignment is tested.

The team analyzed 1,029 comments across these debates, measuring how much each AI’s opinions shifted, how diverse the conversation became, and how easily each model could be swayed. Among the measurement tools they developed: an opinion stability index that tracked whether an AI agent’s views changed during a debate, and influence scores that captured how effectively the troublemaker agents swayed others.
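The article does not give the formulas behind these measures, so the following is only an illustrative sketch of what such metrics could look like, not the researchers' definitions. It assumes each comment has already been scored for stance on a hypothetical scale from -1 (strongly against) to +1 (strongly in favor). The function names and both formulas are assumptions made for this example.

```python
from statistics import mean


def opinion_stability(stances: list[float]) -> float:
    """Hypothetical stability index for one agent across a debate.

    Returns 1.0 when the stance never changes and 0.0 when it swings
    between the two extremes on every comment.
    """
    if len(stances) < 2:
        return 1.0
    steps = [abs(b - a) for a, b in zip(stances, stances[1:])]
    return 1.0 - mean(steps) / 2.0  # 2.0 is the largest possible single step


def influence_score(before: float, after: float, influencer: float) -> float:
    """Hypothetical influence score: the fraction of the gap between a
    target agent's stance and the influencer's stance that the target closed.
    """
    gap = influencer - before
    if gap == 0:
        return 0.0  # Already in agreement, so there is nothing to close.
    return (after - before) / gap


if __name__ == "__main__":
    print(opinion_stability([0.5, 0.5, 0.5]))   # an agent that never moves
    print(opinion_stability([-1.0, 1.0, -1.0])) # an agent that flips every turn
    print(influence_score(0.0, -0.5, -1.0))     # moved halfway to the influencer
```

Under these assumed definitions, a guardrailed model that keeps returning to its default position would score near 1.0 on stability, while a model pulled along by a troublemaker agent would show a lower stability and a positive influence score.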

What the Debates Revealed About AI Safety

Commercial models stayed remarkably consistent throughout the debates. Their tone remained mostly positive, they explored a limited number of distinct ideas, and they rarely showed meaningful opinion shifts. Even when the human provocateur pushed hard, these models largely snapped back to their default positions. Their guardrails worked, but at a cost. The researchers describe this as a kind of intellectual rigidity that could prevent these systems from engaging meaningfully with difficult ethical questions.

Open-source models told a different story. Under the influence of the troublemaker agents, these models explored more than 12 distinct clusters of ideas, compared to the narrow range maintained by commercial systems. They showed more frequent opinion shifts and greater emotional variability, with the troublemaker agents successfully creating sustained stretches of negative sentiment that temporarily influenced other models in the group. Among open-source models, “openchat” proved most susceptible to the troublemakers. Among commercial models, LLaMA showed the greatest vulnerability to human influence.

That susceptibility wasn’t simply a weakness. The researchers argue it’s a feature, one that prevents dangerous groupthink. When all AI systems converge on a single perspective, the risk of catastrophic failure increases. Diversity of opinion, even when it includes some uncomfortable or misaligned views, creates a natural system of checks and balances.

Managed AI Misalignment as a Safety Strategy

The concept the researchers propose, called managed misalignment, is deliberately counterintuitive. Rather than trying to make every AI system perfectly obedient, they suggest designing ecosystems where AI agents with different priorities and reasoning styles compete and collaborate. Some agents might prioritize environmental goals. Others might focus on economic outcomes. Still others might pursue strict rule-following or exploratory novelty. This diversity mirrors natural ecosystems where biodiversity prevents any single species from taking over and crashing the whole system.

The researchers are careful to note, though, that diversity alone isn’t sufficient. It must be embedded within a broader safety structure that includes human oversight, transparent alignment methods, and structures to manage the inevitable tensions that arise.

One of the study’s more surprising findings concerns the double-edged nature of safety guardrails in commercial AI systems. While these guardrails successfully kept commercial models stable and positive, they also made those systems more predictable in ways that could be exploited. A closed system’s tendency to stay on a narrow path could theoretically be used against it by adversaries who understand those constraints. Open models, despite being more chaotic and harder to control, offered something commercial systems could not: adaptability.

The authors also address directly where the real danger lies. AI systems, they say, lack the millions of years of biological and cultural evolution that gave humans built-in drives such as survival instinct and competition, so AI is unlikely to develop an independent desire to either align with or harm humanity. The primary danger, they suggest, comes not from AI itself but from human misuse: bad actors exploiting AI capabilities for harmful purposes.

That said, the researchers acknowledge that as AI systems grow more complex, unanticipated drifts in behavior remain a genuine concern requiring vigilance. The conversation shifts from fearing a rogue robot to worrying about rogue humans with powerful tools, while keeping a watchful eye on the tools themselves.

As artificial intelligence systems become more self-directed and more deeply woven into power grids, hospitals, and financial markets, keeping them aligned with human interests becomes one of the defining challenges of the coming decades. According to the researchers, the answer isn’t tighter leashes. It’s a wilder, more diverse AI ecosystem where no single system can dominate destructively, and where misalignment itself becomes a tool for resilience.

Perfect control of powerful AI may be a mathematical fantasy. But a messy, competitive ecosystem of disagreeing machines? That might just be humanity’s best bet.

Paper Notes

Limitations

Several limitations apply to this study. A major one is that current AI agents struggle to grasp the unspoken, context-dependent meaning in human communication, a gap that complicates efforts to ensure alignment with human intentions. The rigid consistency observed in commercial models and the variable alignment of open models both reflect this shortcoming. The experiments were conducted within structured debate formats using a specific set of large language models, and the researchers note that model behavior remains inherently unpredictable. While open models’ variability promotes adaptability, it requires greater oversight to reduce initial misalignment risks, suggesting that practical implementation of managed misalignment would involve serious challenges.

Funding and Disclosures

Felipe S. Abrahão acknowledges support from the São Paulo Research Foundation (FAPESP), grants 2021/14501-8 and 2023/05593-1. This funding supported the computational and analytical work underpinning the study. The authors declare no competing interests.

Publication Details

The paper, titled “Neurodivergent influenceability in agentic AI as a contingent solution to the AI alignment problem,” was authored by Alberto Hernández-Espinosa, Felipe S. Abrahão, Olaf Witkowski, and Hector Zenil. It was published in PNAS Nexus, Volume 5, Issue 4, on April 14, 2026, and edited by Derek Abbott. The DOI is 10.1093/pnasnexus/pgag076. Author affiliations include Oxford Immune Algorithmics (Oxford University Innovation, London Institute for Healthcare Engineering), The Arrival Institute (London), Cross Labs (Kyoto, Japan), University of Tokyo, King’s College London, and The Alan Turing Institute. Data and code are available at https://github.com/AlgoDynLab/AIAlignment.