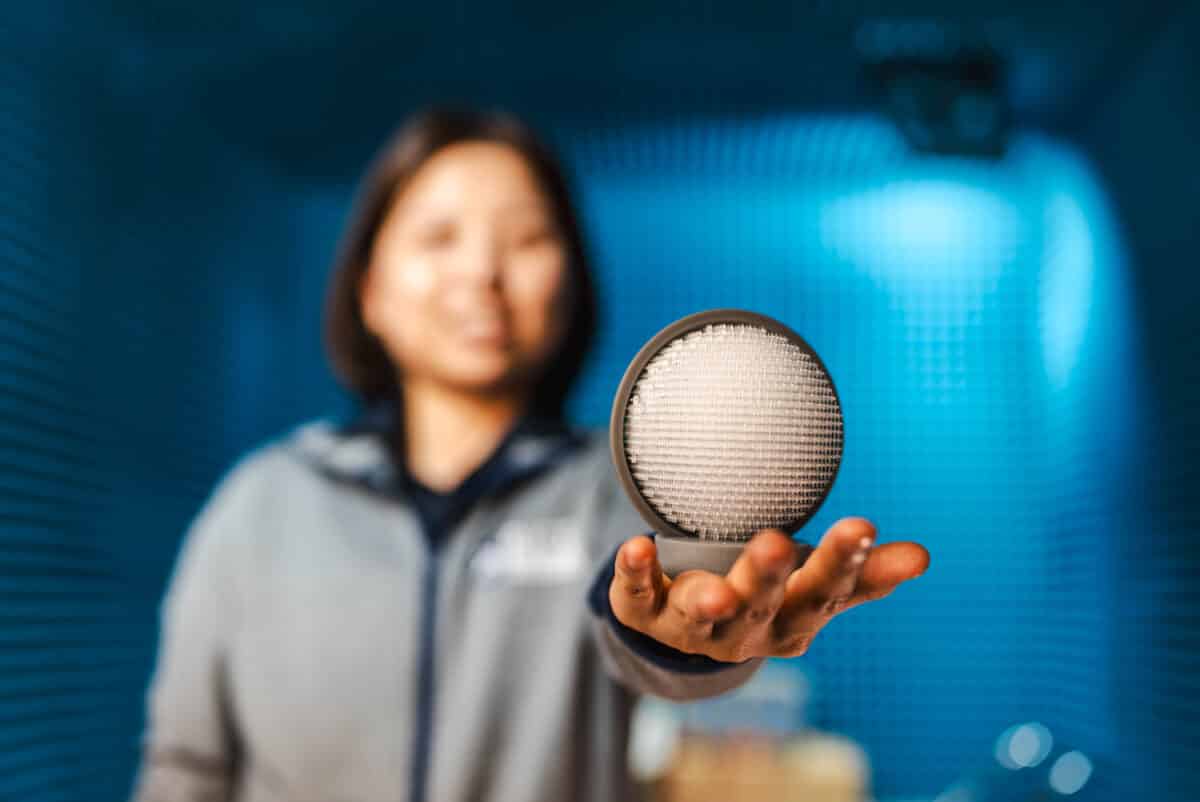

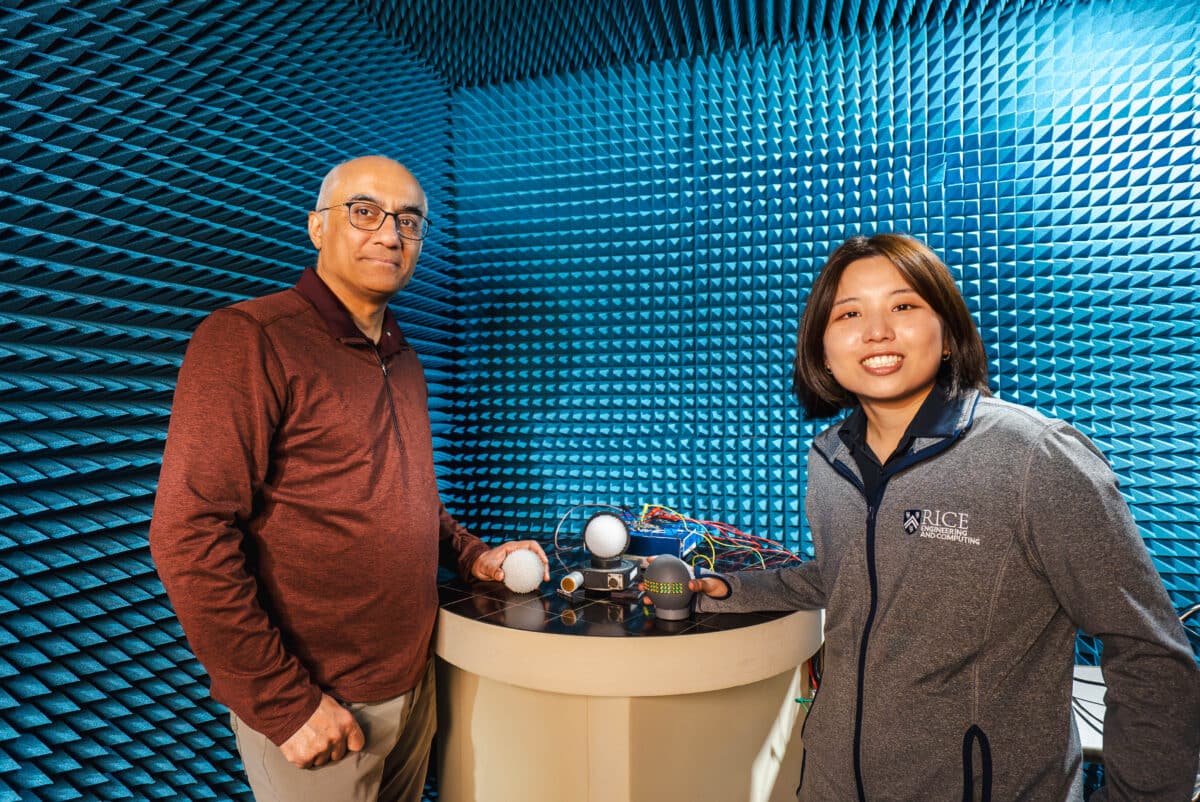

Kun Woo Cho holding an EyeDAR sensor prototype in the anechoic chamber where it is being tested. (Credit: Jared Jones/Rice University)

In A Nutshell

- Self-driving cars rely on radar to navigate bad weather, but millimeter-wave radar produces dangerously sparse maps because most signals bounce away before returning to the vehicle.

- Engineers built EyeDAR, a roadside sensor inspired by the human eye, that captures those lost signals and beams the location data back to passing cars.

- The core component, a 3D-printed Luneburg lens, costs about $7 to fabricate and runs on milliwatts of power, far cheaper and more efficient than comparable commercial systems.

- Lab tests confirmed the device can estimate the direction of scattered radar signals with a mean error under one degree, though its angular resolution is currently limited to 5.4 degrees; sub-2-degree resolution is planned for future versions.

Billions of dollars and more than a decade of engineering have gone into making autonomous vehicles safe, yet a simple rainstorm can still bring one to a halt. Tesla’s Autopilot has logged crashes in foggy conditions. Waymo vehicles have been pulled over during heavy downpours. For all the sophistication packed into a modern self-driving car, bad weather remains an unsolved problem, and the sensors are a big reason why.

Cameras and LiDAR, the laser-based technology that builds detailed 3D maps of road environments, both depend on light. Fog scatters it. Rain streaks it. The industry’s fallback is millimeter-wave radar, a technology that cuts through weather that defeats cameras and lasers. The problem is that its images are sparse and full of gaps, often missing objects that are plainly there.

A team of engineers from Rice University and the University at Buffalo thinks it has a fix, and the core component costs just $7 to fabricate. Their device, called EyeDAR, was presented at HotMobile ’26, the 27th International Workshop on Mobile Computing Systems and Applications. It mounts roadside like a traffic sign, captures radar signals that passing vehicles can never recover on their own, and beams the location data back to the car. In an industry where a single high-end sensor can cost thousands of dollars, that price tag is worth a long look.

Why Self-Driving Car Radar Misses So Much

Radar works by sending out a signal and measuring what bounces back. At millimeter wavelengths, signals hit most surfaces the way light hits a mirror, glancing off at an angle rather than returning toward the source. As the paper states, “most reflected signals do not return to the radar, leaving vast area invisible.” Objects sitting just off the direct line between a vehicle and its sensor can vanish from the picture entirely. Compounding the problem, false detections from multiple signal bounces, sidelobes from strong reflectors, and ground clutter add spurious points to a map that is already missing real ones.

The result is a sensor that keeps working in any weather but cannot, on its own, build the kind of dense, dependable map that safe autonomous navigation requires. That gap is what EyeDAR is designed to close.

A $7 Radar Tag That Thinks Like a Human Eye

EyeDAR’s key component is a Luneburg lens, a sphere of 3D-printed resin whose internal structure bends incoming radar signals and focuses them onto a curved surface lined with small antennas. The concept maps directly onto how a human eye works. A biological lens concentrates light from different directions onto specific spots on the retina, where photoreceptors translate position into visual information. EyeDAR’s lens does the same with radar waves, focusing them so that surrounding antennas can pinpoint the direction each signal came from.

That approach sidesteps one of radar’s traditional computing burdens. Conventional direction-finding algorithms become dramatically more demanding as more signals are added. EyeDAR’s lens-based method scales linearly instead, a meaningful advantage for a small, low-power roadside device. The lens also passively amplifies weak incoming signals by more than 15 decibels, eliminating the need for a powered amplifier entirely. Once the tag identifies where a radar signal came from, a small onboard microcontroller encodes that directional data and returns it to the vehicle by modulating the vehicle’s own radar signal rather than generating a new transmission. The whole system runs on milliwatts of power.
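
For readers who want the lens’s 15-decibel figure in linear terms, the short Python sketch below applies the standard decibel conversions. The function names are illustrative only and do not come from the paper.

```python
# Convert a gain in decibels to linear ratios using the standard definitions.
# Function names are hypothetical; only the 15 dB figure comes from the article.

def db_to_power_ratio(gain_db: float) -> float:
    """Power ratio corresponding to a gain in dB: 10^(dB/10)."""
    return 10 ** (gain_db / 10)


def db_to_amplitude_ratio(gain_db: float) -> float:
    """Amplitude (voltage) ratio corresponding to a gain in dB: 10^(dB/20)."""
    return 10 ** (gain_db / 20)


if __name__ == "__main__":
    lens_gain_db = 15.0  # "more than 15 decibels" per the article
    print(f"power ratio:     {db_to_power_ratio(lens_gain_db):.1f}x")
    print(f"amplitude ratio: {db_to_amplitude_ratio(lens_gain_db):.1f}x")
```

In other words, a 15-decibel passive gain means the focused signal carries roughly 30 times the power it would without the lens, which helps explain why the tag can do without a powered amplifier.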

The 3D-printed lens in the prototype cost approximately $7 to fabricate. For context, commercial radar systems capable of the sub-2-degree angular resolution EyeDAR is targeting for future versions can require around 20 watts of power and bulky hardware. EyeDAR’s current prototype runs on a fraction of that power, at a fraction of the cost.

What the Lab Tests Actually Showed

Researchers evaluated the prototype using a commercial 24 GHz automotive radar in a controlled lab setting. To measure direction-finding accuracy, they swept an incoming radar beam across the device in five-degree increments, spanning 60 degrees left to 60 degrees right, then compared EyeDAR’s reported angle against the actual source.

Two methods were tested. A basic approach identifying the antenna with the strongest signal produced a mean error of 0.68 degrees. A refined method computing a weighted average across the three strongest antenna readings cut that average error to nearly zero, with variation tightening significantly. That precision reflects the limits of the current antenna spacing and corresponds to an effective angular resolution of 5.4 degrees.
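
The two estimators described above can be sketched in a few lines of Python. This is a minimal illustration and not the authors’ code. It assumes a hypothetical row of 23 antennas spaced 5.4 degrees apart, and a Gaussian-shaped focal spot standing in for the real lens response. The names `ANTENNA_ANGLES_DEG`, `simulated_powers`, `strongest_antenna_angle`, and `weighted_top3_angle` are invented for the example.

```python
import numpy as np

# Assumption: 23 antennas evenly spaced 5.4 degrees apart, covering about +/-60 degrees.
ANTENNA_ANGLES_DEG = np.linspace(-59.4, 59.4, 23)


def simulated_powers(true_angle_deg: float, spot_width_deg: float = 4.0) -> np.ndarray:
    """Toy model: received power per antenna as a Gaussian focal spot (an assumption)."""
    offset = (ANTENNA_ANGLES_DEG - true_angle_deg) / spot_width_deg
    return np.exp(-0.5 * offset ** 2)


def strongest_antenna_angle(powers) -> float:
    """Basic method: report the angle of the antenna with the strongest reading."""
    return float(ANTENNA_ANGLES_DEG[int(np.argmax(powers))])


def weighted_top3_angle(powers) -> float:
    """Refined method: power-weighted average over the three strongest readings."""
    powers = np.asarray(powers, dtype=float)
    top3 = np.argsort(powers)[-3:]  # indices of the three largest readings
    weights = powers[top3]
    return float(np.sum(weights * ANTENNA_ANGLES_DEG[top3]) / np.sum(weights))


if __name__ == "__main__":
    # Sweep a few source angles, loosely echoing the five-degree steps in the lab test.
    for true_angle in (-20.0, 2.0, 35.0):
        p = simulated_powers(true_angle)
        print(true_angle, strongest_antenna_angle(p), round(weighted_top3_angle(p), 2))
```

The basic method can only ever answer with one of the antenna positions, so its error depends on how far the source sits from the nearest antenna. The weighted average can land between antennas, which is one plausible reason the refined method performed better in the lab. The numbers this toy model prints are not meant to reproduce the paper’s measurements.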

Coverage tests rotated the device through its full range of orientations at 24 GHz, and signal gain held steady regardless of the incoming angle. The device performs consistently no matter which direction a radar beam arrives from.

On the communication side, EyeDAR transmitted its directional data back to the vehicle’s radar using a simple binary encoding scheme, with a 15-decibel contrast between the signal’s on and off states, enough for reliable detection without disrupting the vehicle’s existing radar operation.
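
One way to picture that link is as on-off keying, in which the tag switches its reflection between a strong and a weak state to spell out bits. The Python sketch below assumes that scheme, an 8-bit angle code, and a receiver that already knows the "on" power level. None of those details are confirmed by the article, and every function name is hypothetical. Only the 15-decibel contrast comes from the reported results.

```python
import numpy as np

CONTRAST_DB = 15.0  # on/off contrast reported in the article


def encode_angle(angle_deg: float, n_bits: int = 8) -> list:
    """Quantize an angle in [-60, 60] degrees to n_bits (hypothetical code), MSB first."""
    clamped = min(max(angle_deg, -60.0), 60.0)
    level = int(round((clamped + 60.0) / 120.0 * (2 ** n_bits - 1)))
    return [(level >> i) & 1 for i in reversed(range(n_bits))]


def reflected_power(bits, on_power: float = 1.0) -> np.ndarray:
    """Power seen by the radar per bit: full reflection for 1, 15 dB weaker for 0."""
    off_power = on_power / 10 ** (CONTRAST_DB / 10)
    return np.array([on_power if b else off_power for b in bits])


def decode_bits(power, on_power: float = 1.0) -> list:
    """Threshold halfway between the on and off levels on a dB scale."""
    threshold = on_power / 10 ** (CONTRAST_DB / 20)
    return [int(p > threshold) for p in power]


def decode_angle(bits) -> float:
    """Invert encode_angle: map the bit pattern back to degrees."""
    level = int("".join(str(b) for b in bits), 2)
    return level / (2 ** len(bits) - 1) * 120.0 - 60.0


if __name__ == "__main__":
    sent = encode_angle(25.0)
    received = decode_bits(reflected_power(sent))
    print(sent, received, round(decode_angle(received), 2))
```

With a 15-decibel gap, the "on" state is about 30 times stronger than the "off" state, which leaves a comfortable margin around the threshold. A real receiver would also have to deal with noise, timing, and the radar’s own waveform, none of which this sketch models.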

What a Seven-Dollar Lens Actually Changes

Roadside sensing infrastructure has been discussed as a complement to onboard vehicle sensors for years. The problem was always economics. At thousands of dollars per sensing unit, equipping even a modest stretch of road was out of reach for most cities and transportation agencies.

At roughly $7 for the fabricated lens, that math starts to look different, though a fully deployed roadside unit would also include antennas, electronics, and an enclosure. EyeDAR’s current prototype captures directional data but not distance, so building a complete 3D picture of a road environment will require range measurement, something the team has flagged as the next development target. Future versions are also planned to include denser antennas capable of sub-2-degree resolution and software that merges data from multiple roadside tags with a vehicle’s onboard radar into a unified map.

Those are tomorrow’s problems. What the prototype proves, under lab conditions, is that a device built around a $7 lens can accurately locate scattered radar signals, run on milliwatts in any weather, and relay useful information to a passing car. For an industry that has long answered hard problems with expensive hardware, that is a genuinely different kind of starting point.

Paper Notes

Limitations

EyeDAR’s current prototype captures the angular direction of arriving radar signals but not the distance to the reflecting object, meaning full 3D point cloud reconstruction remains future work. All testing took place in a controlled laboratory setting with a single commercial 24 GHz radar, not in real-world driving conditions or adverse weather. Angular resolution is presently limited to 5.4 degrees by the current patch antenna spacing, with denser metamaterial antennas proposed as a path to sub-2-degree performance. Measured bandwidth is constrained by cable and antenna losses rather than the Luneburg lens itself. Scenarios involving multiple vehicle radars were not tested.

Funding and Disclosures

Kun Woo Cho and Ashutosh Sabharwal were partially supported by the National Science Foundation Award Number 2346550. No other funding sources or conflicts of interest were disclosed.

Publication Details

The paper “EyeDAR: A Low-Power mmWave Tag that Senses and Communicates 3D Point Clouds to Enhance Radar Perception” was authored by Kun Woo Cho (Rice University, Houston), Yaxiong Xie (University at Buffalo, SUNY, Buffalo), and Ashutosh Sabharwal (Rice University, Houston). It was presented at HotMobile ’26: The 27th International Workshop on Mobile Computing Systems and Applications, held February 25–26, 2026, in Atlanta, Georgia. It was published March 2, 2026, in HotMobile ’26: Proceedings of the 27th International Workshop on Mobile Computing Systems and Applications, by ACM, New York, NY. DOI: https://doi.org/10.1145/3789514.3792041. ISBN: 9798400724718.