(© Stepan Popov - stock.adobe.com)

An AI Scanned Medical Records From 140,000 Kids and Found Early Warning Signs of ADHD

In A Nutshell

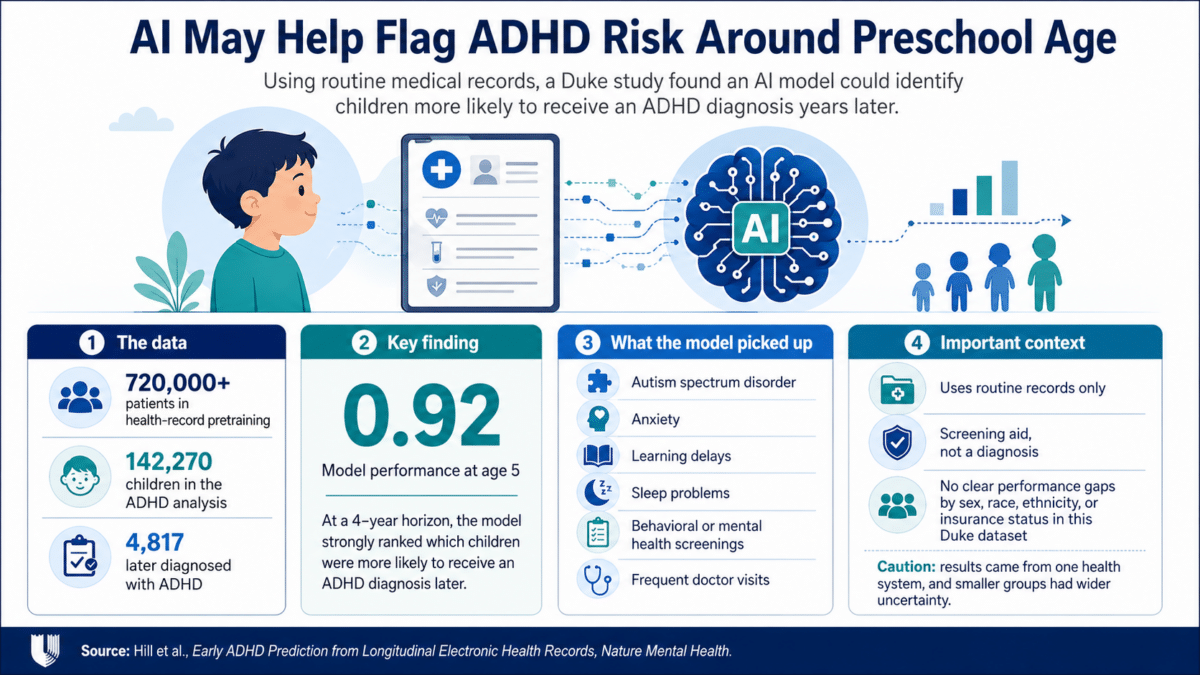

- Duke University researchers trained an AI on the medical records of more than 140,000 children and found it could identify kids at higher risk for an ADHD diagnosis as early as age five.

- The model scored 0.92 out of 1.0 on a standard performance benchmark at age five, looking four years ahead, and kept improving as children got older and more data accumulated.

- Warning signs the AI detected included prior diagnoses of anxiety, autism, learning delays, and sleep problems, as well as behavioral screenings that never resulted in a formal diagnosis.

- No statistically significant performance gaps were found by sex, race, ethnicity, or insurance type in this dataset, though the researchers caution that broader real-world testing is still needed.

Most kids with ADHD don’t get diagnosed until they’re well into elementary school, sometimes later. By then, years of struggle have often already piled up. New research from Duke University suggests that gap could someday narrow with help from an AI tool that reads routine medical records and flags children who may be more likely to receive an ADHD diagnosis years down the road.

ADHD is the most common neurodevelopmental disorder in American children, affecting roughly one in ten. Despite that, diagnosis comes late for many kids. Research has put the average age of first diagnosis anywhere from six to eighteen years, and delays run even longer for children who are Black, Hispanic, or on Medicaid. Earlier diagnosis matters: studies have consistently linked it to better outcomes in school, friendships, and long-term well-being.

Rather than waiting for a parent or teacher to raise a red flag, the Duke model works in the background, scanning a child’s existing medical records for early warning signs. No extra tests to run the model. No new questionnaire just to generate the alert. Just the data that’s already there.

AI ADHD Prediction Showed Strong Performance in Children as Young as Five

To build the model, the team drew on electronic health records from the Duke University Health System, covering more than 720,000 patients and roughly a decade of data. For the ADHD-specific analysis, researchers focused on 142,270 children, of whom 4,817 were eventually diagnosed with ADHD.
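For a sense of scale, the two cohort counts above can be turned into a share with one division. The snippet below is only that arithmetic; the variable names are illustrative and do not come from the study.

```python
# Counts reported for the ADHD-specific analysis cohort.
cohort_size = 142_270   # children included in the analysis
diagnosed = 4_817       # children who eventually received an ADHD diagnosis

# Share of the cohort with a recorded diagnosis.
share = diagnosed / cohort_size

print(f"{share:.1%}")                                   # prints 3.4%
print(f"about 1 in {round(cohort_size / diagnosed)}")   # prints about 1 in 30
```

So roughly one child in thirty in this cohort had a recorded diagnosis, which means the model is trying to pick out a small minority of children from a much larger group.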

Rather than simply predicting whether a child would ever receive a diagnosis, the model was designed to estimate when that diagnosis was likely to arrive. That matters because ADHD gets identified at wildly different ages, and a tool that can’t account for timing is far less useful to a clinician trying to decide who needs a closer look now.

By age five, the model was already performing well, scoring 0.92 on a scale from 0 to 1, where 0.5 is no better than chance and 1.0 is perfect. That does not mean the tool would correctly flag nine out of ten children; it means the model ranked future ADHD diagnoses well within this dataset. That score reflected a four-year look-ahead window, meaning the model was assessing each child’s likelihood of diagnosis by around age nine. Performance kept improving as children got older and more medical data accumulated. Results were published in Nature Mental Health.
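To make the "ranking" idea concrete, here is a minimal Python sketch. It assumes the benchmark is a concordance-style metric (such as the area under the ROC curve), which the article does not name explicitly; the study's actual metric may be a time-dependent variant. The function name and the risk scores are made up for illustration and are not study data.

```python
from itertools import product

def concordance(scores_pos, scores_neg):
    """Fraction of (positive, negative) pairs in which the positive case
    received the higher risk score; tied pairs count as half."""
    pairs = list(product(scores_pos, scores_neg))
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p, n in pairs)
    return wins / len(pairs)

# Hypothetical risk scores, invented purely for illustration.
later_diagnosed = [0.9, 0.7, 0.4]       # children who were later diagnosed
not_diagnosed = [0.8, 0.3, 0.2, 0.1]    # children who were not

score = concordance(later_diagnosed, not_diagnosed)
print(round(score, 3))  # prints 0.833 (10 of 12 pairs ranked correctly)
```

Read this way, a score of 0.92 would mean that in about 92% of such pairs, the child who was later diagnosed received the higher risk score. It says nothing by itself about how many flagged children would turn out to have ADHD, which also depends on where the alert threshold is set and how common the diagnosis is.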

What Kids’ Doctor Visits Reveal About ADHD Risk

What the model flagged as warning signs will look familiar to anyone who knows how ADHD tends to show up in kids. Children whose records included diagnoses of autism spectrum disorder, anxiety, learning delays, or sleep problems were scored as higher risk. So were kids whose records showed behavioral or mental health screenings, even ones that hadn’t led to any formal diagnosis at the time. In those cases, the model was likely picking up on a clinician quietly noting that something was off, even without enough evidence to act on it yet. It’s a reminder that the medical record captures more than just confirmed diagnoses; it also reflects a provider’s hunches, and those hunches, it turns out, carry real predictive weight.

Frequent doctor visits also factored in. Children with ADHD tend to use healthcare services more heavily before they are ever diagnosed, a pattern the model picked up on. Notably, the factors with the strongest predictive pull were tied to specific medical events, not just how often a child came in, which means the model wasn’t simply rewarding families with more access to care.

AI ADHD Prediction Showed No Clear Performance Gaps by Race, Ethnicity, Sex, or Insurance Status

One of the harder questions to answer about any predictive tool is whether it works equally well across different groups. A model that performs well for white, privately insured children but misses the mark for Black children on Medicaid would simply bake the healthcare system’s existing disparities into an algorithm. One encouraging finding from this Duke dataset: the researchers did not find statistically significant performance gaps by sex, race, ethnicity, or insurance type. But that does not prove the model would work equally well everywhere.

Estimates for smaller demographic groups, particularly Hispanic children, carried wide uncertainty, and the entire dataset came from a single health system in Durham, North Carolina. Whether the model holds up at hospitals in other regions, with different patient populations and documentation practices, remains an open question.

For now, the researchers envision this as a screening tool, something running quietly in the background of a child’s chart that nudges a provider toward taking a closer look. It is not meant to hand down a diagnosis on its own. But for a condition where early help can genuinely reshape a child’s trajectory, getting that nudge around preschool age is a very different outcome than waiting until third grade.

Disclaimer: This article is based on a published research study and is intended for informational purposes only. It does not constitute medical advice, diagnosis, or treatment. The AI model described is a research tool that has not been approved for clinical use. Parents or caregivers with concerns about a child’s development or behavior should consult a qualified healthcare provider.

Paper Notes

Limitations

All data came from the Duke University Health System, which may limit how well results generalize to other institutions with different patient populations or documentation practices. ADHD cases were identified using billing codes rather than formal clinical evaluation, which introduces some potential for misclassification. Some children left the dataset before a diagnosis could be recorded, and while the researchers used statistical methods to account for this, it remains a source of uncertainty. The model also does not fully account for the tendency of children with ADHD to seek more medical care before diagnosis, a factor that could influence predictions. The researchers note that this tool is intended solely to support provider assessment or complement standard diagnostic procedures, not to replace them.

Funding and Disclosures

This work was supported by the National Institute of Mental Health (K01-MH127309) and the National Center for Advancing Translational Sciences, National Institutes of Health (UL1 TR002553). Additional support came from the Duke AI Health Data Science Fellowship Program and Duke University Health System. Co-author Geraldine Dawson serves on the Scientific Advisory Board of Tris Pharma, Bristol Myers Squibb, YAMO Pharmaceuticals, and the Nonverbal Learning Disability Project; consults for Guidepoint Global; receives book royalties from Guilford Press, Oxford University Press, and Springer Nature; and has developed technology licensed to Apple, Inc., from which both she and Duke University have benefited financially. All other authors declared no competing interests.

Publication Details

Title: Early ADHD Prediction from Longitudinal Electronic Health Records

Authors: Elliot D. Hill, De Rong Loh, Naomi O. Davis, Benjamin A. Goldstein, Geraldine Dawson, Matthew Engelhard

Journal: Nature Mental Health

Affiliations: Department of Biostatistics and Bioinformatics, Duke University School of Medicine; Duke AI Health; Duke-NUS Medical School; Department of Psychiatry and Behavioral Sciences, Duke University School of Medicine; Duke Center for Autism and Brain Development; Duke Institute for Brain Sciences

DOI: https://doi.org/10.1038/s44220-026-00628-2