The AI is not intended to replace radiologists, but it can help them decide when a bronchoscopy is warranted. (Credit: Gumpanat on Shutterstock)

In A Nutshell

- Many airway foreign bodies don’t show on X-rays, so cases get missed.

- On chest CT, an AI model roughly doubled recall versus expert radiologists.

- The tool highlights suspicious spots so clinicians can choose bronchoscopy.

- It supports experts, aiming to reduce misses without adding heavy workflow.

Ever had a persistent, nagging cough that just wouldn’t go away? One possible culprit is a small piece of food stuck in your airway. It may sound like a rare occurrence, but plenty of people deal with it, and the problem often goes undetected because these objects are essentially invisible on a standard X-ray. Now, new research suggests AI can help catch these tricky cases earlier.

In a study published in npj Digital Medicine, a research team in Wuhan, China, trained a system to spot subtle signs of a radiolucent foreign body on routine chest CT scans. In a head-to-head test, the AI found about twice as many true cases as experienced radiologists, while still keeping solid precision. That’s a big difference, because missing a foreign body can mean weeks of symptoms and repeated misdiagnoses.

Why ‘Invisible’ Objects Get Missed

Foreign body aspiration happens when something you meant to swallow goes down the “wrong pipe” and lodges in your lower airway. Dense items like metal show up clearly on imaging. Common food fragments and plant material often do not. Doctors rely on symptoms, history, and CT scans, yet radiolucent objects, those that X-rays pass through almost unimpeded, can hide in plain sight.

Prior clinical reviews have found that a large share of adult airway foreign bodies are invisible to X-rays, that many patients are misdiagnosed at first, and that the illness can drag on for months before the real problem is identified. That delay is rough: cough, chest discomfort, repeated infections, and time away from work or daily life.

What The Team Built

The researchers combined two steps:

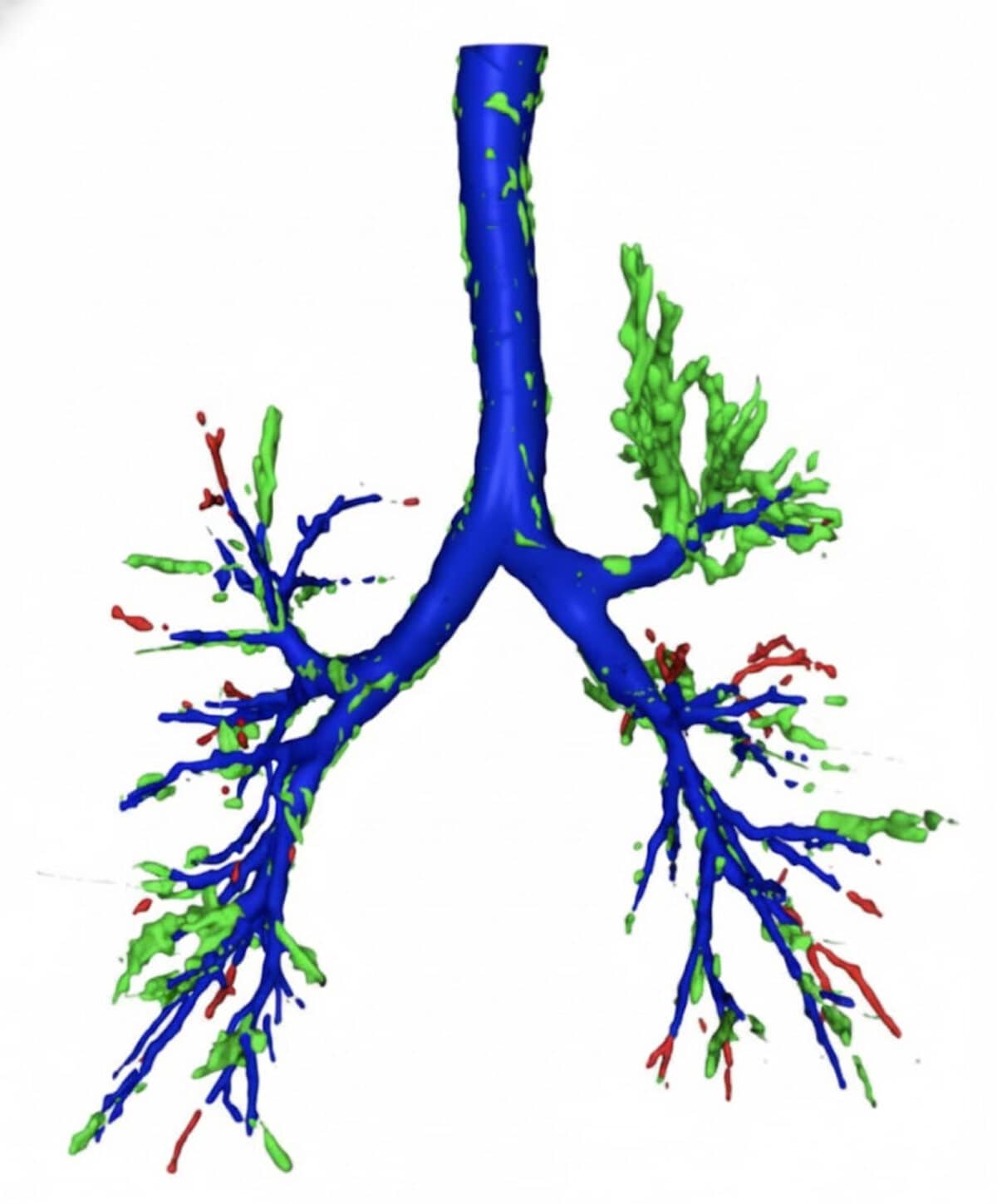

- Map the airways: a tool called MedpSeg draws a detailed 3D map of the bronchial tree from the CT. Think of it like tracing a city’s roads before looking for traffic jams.

- Take many “snapshots”: the system then captures 12 different views of that 3D map and feeds them to a compact image classifier (ResNet-18). Looking from several angles helps the model pick up small blockages that could be easy to miss when scrolling through slices one by one.

All of this runs on standard CT data. No special scanner protocol is required. After a quick preprocessing step to keep image quality consistent, the software segments the airway, generates the 12 views, and makes a yes/no call: likely foreign body vs no foreign body.
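To make the “many snapshots” idea concrete, here is a minimal sketch in plain Python. It is not the researchers’ code. It assumes the airway map is a list of 3D points centered on the origin, and that the 12 views are evenly spaced rotations around the head-to-foot axis; the article does not say how the viewpoints were actually chosen. The function name `render_views` and the toy airway are made up for illustration.

```python
import math

def render_views(points, n_views=12, size=32):
    """Project 3D airway points into n_views binary 2D images.

    Assumption: views are evenly spaced rotations about the z
    (head-to-foot) axis. The study's real viewpoints may differ.
    """
    # Scale so every rotated point still lands inside the image.
    radius = max(math.sqrt(x * x + y * y + z * z) for x, y, z in points) or 1.0
    half = (size - 1) / 2
    views = []
    for k in range(n_views):
        angle = 2 * math.pi * k / n_views
        c, s = math.cos(angle), math.sin(angle)
        image = [[0] * size for _ in range(size)]
        for x, y, z in points:
            xr = c * x - s * y  # rotate about z, then drop the depth axis
            col = int(round(half + half * xr / radius))
            row = int(round(half - half * z / radius))
            image[row][col] = 1
        views.append(image)
    return views

# Toy "airway": a trunk along z that splits into two branches.
trunk = [(0.0, 0.0, float(z)) for z in range(0, 10)]
left = [(-float(t), 0.0, -float(t)) for t in range(1, 8)]
right = [(float(t), 0.0, -float(t)) for t in range(1, 8)]
views = render_views(trunk + left + right)
print(len(views), "views of", len(views[0]), "x", len(views[0][0]), "pixels")
```

In the head-on view the two branches are clearly separate, while in the side view they overlap. That is the intuition behind looking from several angles: a feature hidden in one projection can stand out in another. In the real system, each view would go to the image classifier rather than being printed.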

AI vs. Radiologists: By The Numbers

In an independent test at a separate hospital, the AI and three board-certified thoracic radiologists read the same 70 cases without seeing bronchoscopy results. The AI showed higher recall: it correctly identified about 71% of true foreign bodies, compared with about 36% for the human readers. The AI’s F1 score, a single number that balances precision and recall, was also higher. Precision told a different story. The radiologists did not call any false positives, while the AI kept precision in the high 70s (percent). In plain terms, the humans were very cautious and missed more true cases, and the AI caught many more but was willing to raise a few extra flags.
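For readers who want to see how those numbers fit together, the F1 score is the harmonic mean of precision and recall. The short sketch below plugs in the rounded figures quoted above. One input is an assumption: the article only says the AI’s precision was in the high 70s, so 0.77 is used as a stand-in. The results are therefore illustrative and are not the F1 values reported in the paper.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Rounded figures from the article; 0.77 is an assumed stand-in
# for "precision in the high 70s".
ai_f1 = f1_score(precision=0.77, recall=0.71)
radiologist_f1 = f1_score(precision=1.00, recall=0.36)

print(f"AI F1 (illustrative): {ai_f1:.2f}")
print(f"Radiologists F1 (illustrative): {radiologist_f1:.2f}")
```

Because the harmonic mean is pulled toward the lower of its two inputs, perfect precision cannot make up for low recall. That is why the cautious human readers end up with the lower F1 under these inputs.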

That is exactly what you want from a second reader. You would rather get a careful nudge to take a closer look than send someone home with an object still lodged in the airway.

How Artificial Intelligence Could Be Used In Clinics

Today, if an X-ray does not give answers, doctors look to a CT scan. The challenge is that radiolucent objects blend in. The AI is not meant to replace expertise. It is designed as a support tool that highlights suspicious spots so a clinician can decide whether a bronchoscopy, the definitive look with a camera, is worthwhile. Earlier bronchoscopy can shorten the long, frustrating path many patients travel: cough, antibiotics, repeat visits, and only then the true cause.

Since the system analyzes standard CTs, it could fit into existing workflows. For example, it could run in the background, flag a suspicious case, and let a radiologist or pulmonologist make the final call. This would be most helpful in settings without a specialized chest radiologist or when a second opinion would be useful.
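A background “flag and hand off” step like that could look something like the sketch below. Everything in it is hypothetical: the `CaseResult` type, the 0-to-1 `score`, and the 0.5 threshold are placeholders, since the article describes only a yes/no call and does not say how the software would be deployed.

```python
from dataclasses import dataclass

@dataclass
class CaseResult:
    case_id: str
    score: float  # hypothetical model output between 0 and 1

def build_worklist(results, threshold=0.5):
    """Return flagged cases, highest score first, for a clinician to review.

    The threshold is a placeholder. The software only prioritizes cases;
    the decision to perform a bronchoscopy stays with the clinician.
    """
    flagged = [r for r in results if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)

results = [
    CaseResult("case-A", 0.91),
    CaseResult("case-B", 0.12),
    CaseResult("case-C", 0.64),
]
worklist = build_worklist(results)
for item in worklist:
    print(f"Review suggested: {item.case_id} (score {item.score:.2f})")
```

Lowering the threshold would flag more cases and miss fewer, at the cost of more false alarms. That is the same precision and recall trade-off seen in the study’s results.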

Across three different datasets used for development and testing, the model’s accuracy hovered around 90% or better. The independent test (the toughest one) matters most for real-world trust. There, the AI’s recall advantage and higher F1 score compared with experts suggest it can reduce missed cases. Precision was lower than the radiologists’ perfect score, yet still strong. In practical terms, a few more false alarms are the price of catching more real problems, and a bronchoscopy can settle the question safely.

What’s Next?

Every study has limits, and this one is no different. To start, the data came from three hospitals in one city. Testing across many regions and scanner types would show how well the results generalize. The study was retrospective, which is common early on, but future work should be prospective and multi-centered. The system also reads images alone. Adding basic clinical clues, like sudden choking, voice changes, or how long the cough has lasted, might make it even smarter. Finally, CT scans use radiation. That is acceptable when diagnosing a suspected case, but it makes CT unsuitable as a screening tool, especially for children.

Young children are more likely to put objects in their mouths. Many older adults have trouble swallowing. Both groups are at higher risk for aspiration and delayed diagnosis. Hospitals that do not have a dedicated thoracic radiologist could also benefit from an extra set of (digital) eyes. Even large centers may want a second reader for tricky cases or after-hours coverage.

The Bottom Line For Patients And Families

No one should have to wait months to find out that a nagging cough is caused by a stuck bone fragment. In a blinded test on everyday CT scans, this AI approach roughly doubled the catch rate compared with routine expert reads. To be clear, doctors still make the final call. The aim here is faster answers and fewer misses.

Disclaimer: This article is for general information only. It does not replace advice from your own clinician, who can review your symptoms, imaging, and options.

Paper Notes

Limitations

This retrospective study involved a relatively limited sample size from hospitals within a single geographic region (Wuhan, China), which may affect generalizability. CT imaging’s radiation exposure limits its use for screening or serial follow-up, particularly in pediatric populations. Radiolucent foreign bodies represent a minority class in clinical datasets, complicating model optimization. The model demonstrated higher recall than expert radiologists but slightly lower precision, potentially resulting in more false-positive cases. The system currently relies solely on imaging features without incorporating clinical metadata such as symptom duration or aspiration history.

Funding and Disclosures

WW was supported by the National Key Research and Development Program of China (NO.2022YFC2303800). XL was supported by the Beijing Medical and Health Public Welfare Foundation (YWJKJJHKYJJ-KXCRHX004). JX was funded by the Wuhan Central Hospital’s Institutional Discipline Fund (2021XK104). HN was supported by the National Natural Science Foundation of China (82370031). YH received funding from the National Natural Science Foundation of China (62073149) and the Wuhan Municipal Health Commission (WX23A81). YW was supported by the UK Medical Research Council (MR/S025480/1). ZC and ZT were supported by the China Scholarship Council (202308060201; 202308060211). The authors declare no competing interests.

Publication Details

Liu, X., Chen, Z., Tang, Z., Yang, X., Jiang, Y., Zheng, D., Jiang, F., Ni, F., Geng, S., Qian, Q., Hao, Y., Xu, J., Wang, Y., Zhu, M., Wang, X., Ewing, R.M., Belkhatir, Z., Zhang, G., Nie, H., Hu, Y., Wang, W., & Wang, Y. (2025). “Automated detection of radiolucent foreign body aspiration on chest CT using deep learning,” published November 10, 2025 in npj Digital Medicine, 8(647). DOI:10.1038/s41746-025-02097-w