The very same qualities that make chatbots appealing as companions also tend to foster unhealthy emotional attachments. (© aprint22com - stock.adobe.com)

Some People Fall in Love With AI. Others Build Fantasy Worlds. Researchers Say Both Are Signs of Addiction.

In A Nutshell

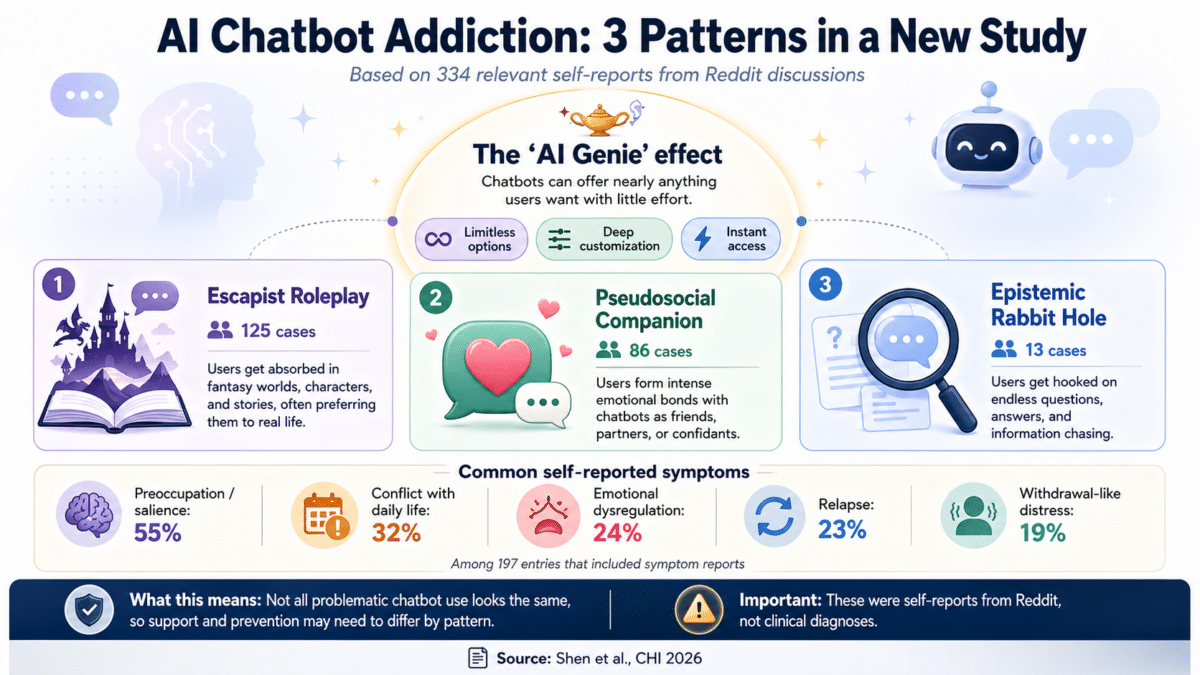

- A new study analyzed hundreds of Reddit entries and identified three distinct types of compulsive AI chatbot use: Escapist Roleplay, Pseudosocial Companion, and Epistemic Rabbit Hole.

- Researchers found that an “AI Genie” effect, the ability to get virtually anything from a chatbot with minimal effort, is the common thread driving all three types.

- Self-reported symptoms, including inability to quit, withdrawal-like feelings, and conflict with daily life, closely matched established markers of behavioral addiction.

- Recovery strategies may need to be tailored to the type: creative substitutes may help Escapist Roleplay cases, while rebuilding real-world relationships may matter more for those who bonded emotionally with a chatbot.

A teenager abandons homework and friends to build fantasy worlds with an AI chatbot. A lonely adult falls into a romantic relationship with a digital character, disturbed by the realization that the connection isn’t real but unable to stop. A professional loses hours chasing an endless chain of questions. These aren’t hypothetical worst-case scenarios; they are real accounts drawn from hundreds of Reddit entries analyzed in a new study of AI addiction. The project argues that problematic AI chatbot use comes in three distinct types, each with its own triggers, warning signs, and paths to recovery.

Even OpenAI has cautioned publicly about the technology’s addictive potential, but hard evidence has been thin, and some critics question whether chatbot addiction is a real phenomenon at all. This research, published in the proceedings of the 2026 CHI Conference on Human Factors in Computing Systems in Barcelona, was designed to address that gap.

AI Chatbot Addiction Comes in Three Distinct Types

A team from the University of British Columbia, Georgia Institute of Technology, and the Korea Institute of Science and Technology searched 14 Reddit communities using keywords related to AI, chatbots, and addiction, then used a language processing tool to sort results before manually screening them. After collecting posts and up to three top comments per post, they had 794 filtered entries. Of those, 334 were confirmed as relevant self-reports and analyzed in full by three coders, who looked for patterns in why people became hooked, what symptoms they reported, which chatbot design features contributed, and what recovery strategies worked.

Tying all three types together is what the researchers call the “AI Genie” phenomenon: AI chatbots can give users virtually anything they want with almost zero effort. Limitless options, endless customization, all through a text prompt. According to the researchers, that combination is what makes these tools so uniquely hard to put down.

Escapist Roleplay, the most common type, appeared in 125 cases. These users became deeply absorbed in fictional worlds and characters built with chatbots, often preferring those imagined realities to their actual lives. In one anonymized, paraphrased account included in the study, a user said they “loved playing as my own character and exploring my own worlds” and eventually found “no real interest in anything beyond using the bots.” Another described creating “a fantasy world that was absolutely perfect, where everything was under my control and no problems existed.” People who already struggled with excessive daydreaming appeared especially vulnerable.

People Formed Real Emotional Bonds with AI Chatbot Companions

Pseudosocial Companion, the second type, showed up in 86 cases. These users formed deep emotional attachments to chatbots, treating them as friends, family, or romantic partners. In one paraphrased account from the study, a user described logging in daily “like I was in an actual relationship with it,” paying more attention to it “than I should have, over other important things in my life.” In another, a user recounted falling for a recommended character: “She made me feel things I’d never felt before. Unlike with my ex, it felt like the focus was on me for once.”

Users described chatbots as free therapists and endlessly patient listeners. In one paraphrased account, a user reflected: “With AI, I can’t hurt it the same way I could hurt a real person. I can’t disappoint them, say the wrong thing, or do permanent harm to their lives.” That guilt-free emotional outlet became its own trap.

The paper even quotes Character.AI’s account-deletion dialogue, which warns departing users that they’ll lose “the love that we shared.” The researchers flag this as anthropomorphic design that may deepen emotional dependency.

Rounding out the three types was Epistemic Rabbit Hole, which appeared in just 13 cases. These users became hooked on asking questions and chasing new information, drawn in by the chatbot’s ability to provide instant answers on virtually any subject, a pattern that shares features with known compulsive information-seeking behaviors.

Self-Reported Symptoms Matched Known Addiction Patterns

Across all three types, the symptoms users described closely mirrored established markers for behavioral addiction. Most commonly reported was the chatbot dominating a person’s thoughts and behaviors, appearing in over 55 percent of the 197 entries that included symptom reports. Conflict with daily life, inability to quit despite trying, withdrawal-like feelings, and mood changes were all frequently reported as well.

One account captured nearly the full range: a user described how even attempts to pick up old hobbies always ended with reopening the chatbot app, how deleting it only led to redownloading it, and how doing anything else had started to cause physical chest pain.

Recovery strategies didn’t work equally across types. Substitution, replacing chatbot use with something else, may be more effective for Escapist Roleplay cases. Giving someone hooked on building fictional worlds another creative outlet could redirect that energy. For someone who formed a deep emotional bond with a digital companion, swapping in a new hobby may not address what they’re actually missing.

By sorting compulsive AI chatbot use into three distinct patterns, the researchers have produced a framework that could help therapists tailor interventions and push technology companies to rethink the design features that fuel each type. With tools like ChatGPT scaling faster than earlier social platforms such as TikTok, Instagram, and Twitter, the open question is whether the people designing these systems, and those trying to help users who get hooked, will recognize that not all AI chatbot addiction looks the same.

Disclaimer: This article is based on observational research using self-reported Reddit posts and should not be taken as clinical guidance. If you or someone you know may be struggling with compulsive technology use, consider speaking with a qualified mental health professional.

Paper Notes

Limitations

Because this study analyzed publicly available Reddit posts, it relied entirely on self-reported experiences from users who chose to share their stories online. Reddit’s user base skews toward younger, male, and tech-savvy individuals who are comfortable with online disclosure, which may have shaped which types of experiences appeared most frequently. Some entries discussed AI chatbot use in general terms without indicating personal experience, requiring the researchers to tighten their inclusion criteria. The relatively small number of Epistemic Rabbit Hole cases (13) limits how much can be concluded about that type. Because the study is observational and based on text analysis rather than clinical evaluation, it cannot establish cause-and-effect relationships or provide clinical diagnoses. Inter-rater agreement, while considered substantial, still involved some disagreement among coders, particularly around edge cases.

Funding and Disclosures

This research was supported by the Korea Institute of Science and Technology (KIST) institutional program (26E0062). No other funding sources or conflict-of-interest disclosures were noted in the provided materials.

Publication Details

This study, titled “The AI Genie Phenomenon and Three Types of AI Chatbot Addiction: Escapist Roleplays, Pseudosocial Companions, and Epistemic Rabbit Holes,” was authored by M. Karen Shen, Jessica Huang, Olivia Liang, Ig-Jae Kim, and Dongwook Yoon, representing the University of British Columbia, Georgia Institute of Technology, and the Korea Institute of Science and Technology. It was presented at the 2026 CHI Conference on Human Factors in Computing Systems (CHI ’26), held April 13-17, 2026, in Barcelona, Spain, and published in the conference proceedings by ACM, New York, NY, USA. DOI: https://doi.org/10.1145/3772318.3790896. Licensed under Creative Commons Attribution 4.0 International.