EDINBURGH, Scotland — In 1932, while the rest of the world was grappling with the Great Depression, Scotland was busy giving intelligence tests to nearly every 11-year-old in the country. Little did those children know they were kickstarting one of the most illuminating studies ever conducted on how our brains age – or that their test scores would still be making scientific waves nearly a century later.

The Lothian Birth Cohorts study, led by researchers at the University of Edinburgh, has followed more than 1,600 people born in 1921 and 1936, tracking their cognitive abilities from age 11 into their 70s, 80s, and 90s. After 25 years of research, the scientists have unveiled some surprising discoveries about aging minds. Among them: about half of the differences in intelligence between people in old age can be traced to how bright they were as children.

Think of it like a cognitive savings account – we start with a certain balance in childhood, and while life experiences can grow or diminish that initial deposit, our early cognitive capabilities have an outsized influence on our mental acuity decades later. The study found that someone who scored well on intelligence tests at age 11 was likely to perform well on similar tests in their 70s and beyond.

But what about the other half of the equation? The researchers discovered that various factors influence how well we maintain our mental edge as we age. Some are within our control, like staying physically active and socially engaged, while others aren’t, such as our genetic makeup. However, the effects of any single factor tend to be small – there’s no magic bullet for keeping our brains young.

The study’s setup was remarkably comprehensive. On the original testing days in 1932 and 1947, almost every 11-year-old child in Scotland (over 87,000 in 1932 and over 70,000 in 1947) took the same intelligence test. Decades later, researchers tracked down more than 1,600 of these individuals living in the Edinburgh area and convinced them to take part in detailed follow-up studies.

The participants, now in their later years, were retested every few years. They completed cognitive assessments, physical examinations, and brain scans. They provided blood samples for genetic analysis and for measuring other biological markers. Some even agreed to donate their brains after death for further research.

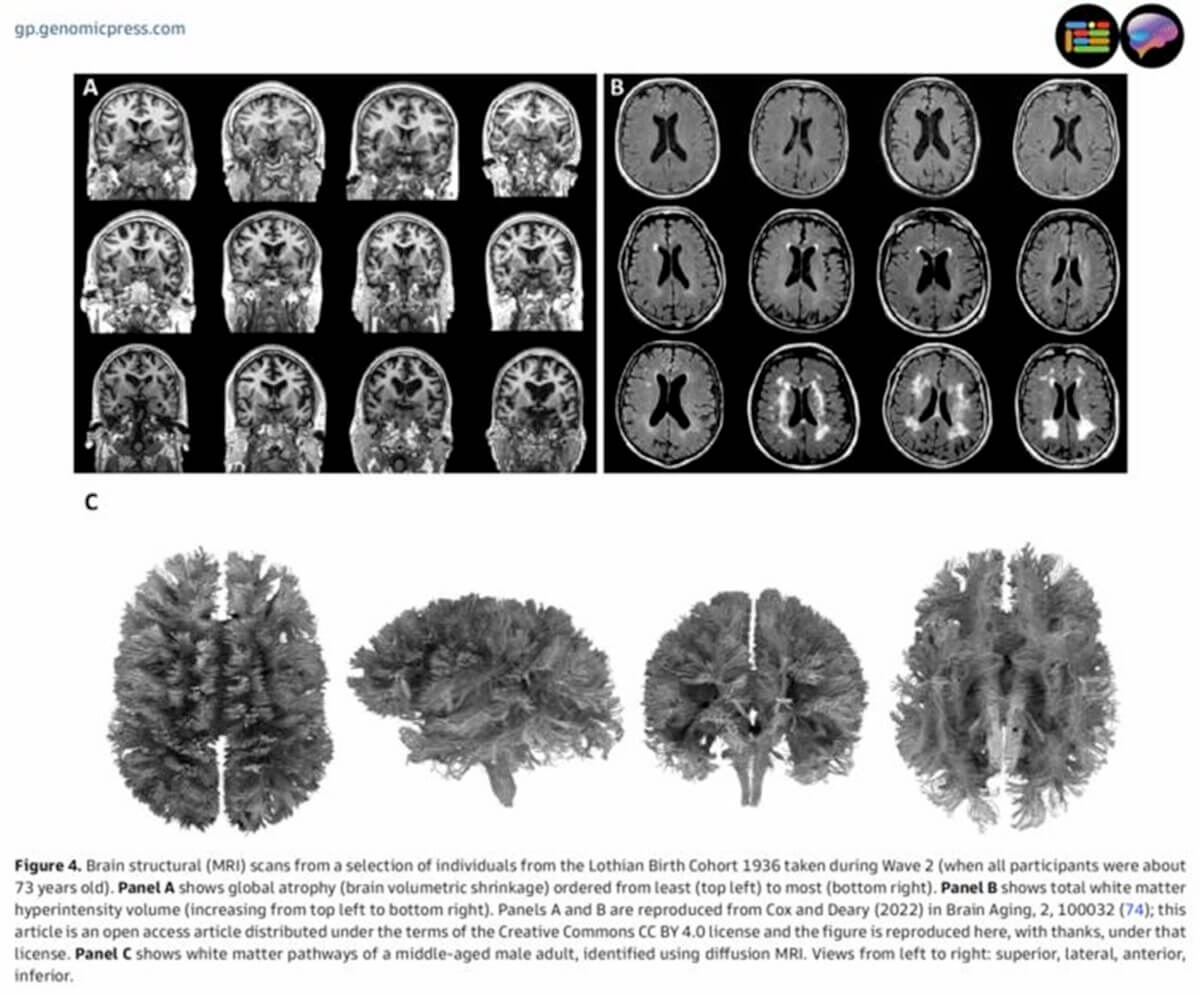

One of the study’s most striking findings was the dramatic variation in how people’s brains age. Brain scans of participants at age 73 revealed that some had brains that looked decades younger than others of the exact same age. This variation helped explain why some people maintain their mental sharpness while others experience more significant cognitive decline.

“What’s particularly fascinating is that even after seven decades, we found correlations of about 0.7 between childhood and older-age cognitive scores,” explains study co-author Ian Deary, a professor at Edinburgh, in a statement. “This means that just under half of the variance in intelligence in older age was already present at age 11.”

The research also challenged some common assumptions. For instance, many factors that seem to affect cognitive ability in old age – like physical fitness, social engagement, and inflammation levels – turned out to be partially explained by childhood intelligence. In other words, smarter kids were more likely to maintain healthy lifestyles and engage in mentally stimulating activities throughout their lives.
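Saying that a factor is "partially explained" by childhood intelligence means its link with later cognition shrinks once age-11 scores are taken into account. One standard way to express this is a first-order partial correlation, sketched below in Python. This is not necessarily the statistical model the Lothian researchers used, and the three correlations plugged in are made-up numbers chosen only for illustration, not study results.

```python
import math


def partial_correlation(r_xy: float, r_xz: float, r_yz: float) -> float:
    """Correlation between x and y after removing the linear influence of z."""
    denom = math.sqrt((1.0 - r_xz ** 2) * (1.0 - r_yz ** 2))
    if denom == 0.0:
        raise ValueError("z perfectly predicts x or y; the partial correlation is undefined")
    return (r_xy - r_xz * r_yz) / denom


# Hypothetical correlations for illustration only (NOT the study's numbers):
# x = physical fitness in old age, y = cognitive score in old age,
# z = intelligence test score at age 11.
r_fitness_cog = 0.30
r_fitness_iq11 = 0.25
r_cog_iq11 = 0.70

raw = r_fitness_cog
adjusted = partial_correlation(r_fitness_cog, r_fitness_iq11, r_cog_iq11)
print(f"raw r = {raw:.2f}, adjusted for age-11 IQ = {adjusted:.2f}")
```

With these made-up inputs, the fitness-cognition correlation drops from 0.30 to roughly 0.18 once age-11 intelligence is held constant: part of the apparent link is carried by childhood ability rather than by fitness itself.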

But it’s not all predetermined by childhood ability. The researchers identified several factors that appear to help maintain cognitive function, including education, physical activity, and not smoking. However, each factor’s individual effect tends to be modest – suggesting that the best approach to cognitive aging is to make multiple small positive changes rather than seeking a single solution.

The study has also contributed to our understanding of how genes influence cognitive aging. While genetic factors play a role, their effects are complex and often tiny. One exception is the APOE e4 gene variant, which was found to be associated with lower cognitive performance in old age but, interestingly, showed no effect on childhood cognitive ability.

The researchers made another fascinating discovery about DNA methylation – chemical modifications to our DNA that can change with age. They found that these age-related DNA changes could predict how long people would live, offering a new way to measure biological aging at the molecular level.

Perhaps most encouragingly, the study showed that cognitive decline isn’t inevitable or uniform. While some participants experienced significant decreases in mental ability, others maintained sharp minds well into their 80s and 90s. This suggests that while we can’t completely prevent cognitive aging, we may be able to influence its trajectory through lifestyle choices and environmental factors.

From test papers to brain scans, from childhood arithmetic to twilight years’ wisdom, the Lothian Birth Cohorts study has shown us that cognitive aging isn’t just about preserving what we have – it’s about understanding where we started and making the most of every step along the way.

Paper Summary

Methodology

The study began with historical intelligence test data from the Scottish Mental Surveys of 1932 and 1947, which tested almost all 11-year-old children in Scotland. Researchers later recruited 550 people born in 1921 and 1,091 born in 1936 who had taken these original tests.

Participants underwent regular testing every few years, including cognitive assessments, physical examinations, brain imaging, blood tests, and various other measurements. The testing was comprehensive, examining everything from grip strength to DNA methylation patterns. The study design allowed researchers to track changes in cognitive ability and various health markers over time while controlling for childhood intelligence.

Key Results

Key findings included: approximately 50% of the variance in cognitive ability in older age is accounted for by childhood intelligence; brain aging varies dramatically between individuals of the same age; genetic factors influence cognitive aging but most genetic effects are very small; the APOE e4 gene variant affects cognitive ability in old age but not in childhood; DNA methylation patterns can predict longevity; and multiple lifestyle factors each have small but cumulative effects on cognitive aging.

Study Limitations

The study primarily focused on participants from the Edinburgh area of Scotland, potentially limiting its generalizability to other populations. There was also inevitable participant attrition over the decades, with healthier individuals more likely to continue participating. The study lacked data from participants’ middle years (between age 11 and older age), though researchers attempted to fill this gap through retrospective reporting and other techniques.

Discussion & Takeaways

The research suggests that cognitive aging is influenced by both early-life factors and ongoing lifestyle choices, but no single factor has a large effect. This supports a “marginal gains” approach to healthy aging, where multiple small positive changes may accumulate to provide meaningful benefits. The study emphasizes that cognitive decline isn’t inevitable and varies significantly between individuals, suggesting opportunities for intervention to promote healthier brain aging.

Funding & Disclosures

The research has been funded by various organizations over its 25-year span, including Age UK, the UK Research and Innovation bodies, BBSRC, and MRC. The current work is supported by the National Institutes of Health, BBSRC, ESRC, MRC, Milton Damerel Trust, and the University of Edinburgh. The authors declared no conflicts of interest.
