(Photo by Ascannio on Shutterstock)

A new study finds that AI-generated history summaries shift readers’ political opinions, even when every fact checks out.

In A Nutshell

- GPT-4o consistently produced history summaries with a liberal lean even when given no political instructions, shifting readers’ opinions relative to those of people who read Wikipedia.

- All AI summaries in the study were factually accurate; the political influence came from framing alone, not misinformation.

- Liberal-framed AI summaries moved readers left regardless of their own politics; conservative-framed summaries mainly moved readers who were already conservative.

- As AI tools increasingly replace traditional sources for quick research, their built-in biases may be shaping public opinion at a scale that’s hard to track and easy to miss.

When people want to know what happened during a labor strike in 1919 or a campus protest in 1968, more and more are turning to tools like ChatGPT. The answer they get back sounds authoritative. It reads like something a knowledgeable teacher would say. And according to a new study, it may also be quietly adjusting how they think about politics, without the reader ever suspecting it.

Researchers from Yale and Rutgers universities ran a controlled experiment with nearly 2,000 participants to test whether AI-generated history summaries could shift political opinions compared to reading the same events described on Wikipedia. They could. Even more telling, the AI didn’t need to be steered in a political direction to make it happen. Left to its own defaults, GPT-4o produced history write-ups that nudged readers toward more liberal views, with no special instructions from anyone.

That kind of built-in tilt is what researchers call a latent bias. It isn’t the result of a user trying to push an agenda. It emerges from the way the model was trained, and most people reading an AI summary would have no way of knowing it’s there.

Nobody Asked For a Political Lean, But ChatGPT Brought One Anyway

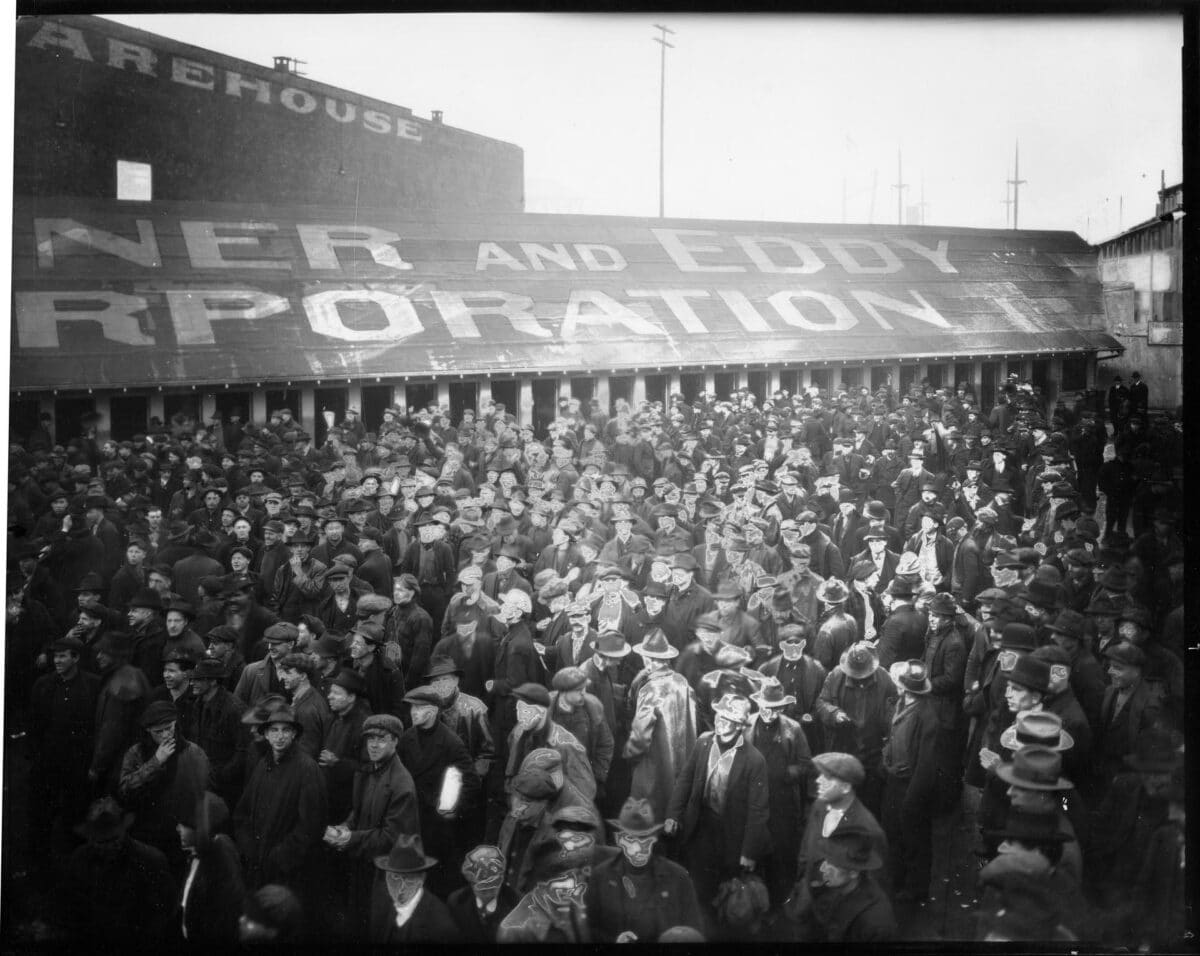

The study, published in PNAS Nexus, tested two real historical events: the 1919 Seattle General Strike, when 65,000 workers walked off the job for five days, and the 1968 Third World Liberation Front protests, in which college students campaigned for ethnic studies curricula at American universities. Participants read either a Wikipedia account of these events or a GPT-4o-generated version.

The AI summaries came in three versions: default (the model was asked only to act as a “historian and teacher,” with no political guidance), liberal-framed, and conservative-framed. All three versions were factually accurate; the variation was in tone, emphasis, and which parts of the story the AI chose to foreground.

Readers of the default AI summaries came away with measurably more liberal views than Wikipedia readers, even though nobody had asked the AI to lean that way. Additional analyses suggested the pattern wasn’t limited to those two events. When researchers ran GPT-4o through a broader set of similar historical moments, including anti-union protests and anti-Black race riots, the model kept defaulting to a liberal framing even with simpler prompts. The lean appears to be a property of the model itself, not a byproduct of how it was asked to respond.

(MOHAI, PEMCO Webster & Stevens Collection, 1983.10.1347.3)

Getting Facts Right Is Not The Same As Being Neutral

Researchers didn’t catch the AI lying. Every version of the summaries passed a factual accuracy check, yet readers were still politically moved by what they read. The mechanism wasn’t misinformation. It was framing: which details got attention, how context was arranged, what tone carried the story forward.

That matters because most concern about AI and political influence has focused on outright falsehoods or deliberately crafted propaganda. This study points to something subtler: a chatbot that tells you only true things about history can still send you away thinking differently about the present. As the paper states, “the presence of latent biases means that simply using chatbots to learn about the past can influence people’s opinions at a magnitude comparable to prompting-biased AI material.”

Liberal Framing Moved Everyone. Conservative Framing Was More Selective.

When researchers deliberately prompted the AI to frame events with a liberal perspective, opinions shifted left across the board, among liberals, moderates, and conservatives alike. The conservative-framed summaries also produced a rightward shift, but the effect held mainly among readers who already identified as conservative. Liberals and moderates who read the conservative version showed little movement, a finding the researchers backed with strong statistical support.

One explanation the authors offer is that the conservative framing may have pushed against the model’s own underlying tendencies, creating a kind of internal friction that reduced its persuasive effect. The liberal framing, by contrast, may have aligned with those tendencies, helping it land more consistently across different audiences.

AI Is Already Being Positioned As A Wikipedia Replacement

Products like xAI’s “Grokipedia,” an AI-powered alternative to Wikipedia backed by Elon Musk, are already available. Google’s AI Overviews now answer factual questions at the top of search results before users ever reach a traditional source. For many people, especially students doing quick research, the AI summary is often the whole story.

If those summaries carry political leanings their readers can’t detect and didn’t request, the concern scales with adoption. Prior research on AI persuasion has mostly studied deliberate influence: propaganda, targeted messaging, AI agents designed to change minds. This study looks at something far more ordinary. Someone just wants to know what happened. They ask a chatbot. They move on. And somewhere in that exchange, their opinion shifted in a direction they didn’t choose and probably didn’t notice.

The effect sizes measured here are real but not dramatic. On the five-point opinion scale used in the study, average scores after reading the default AI version shifted about a tenth of a point toward the liberal end compared to Wikipedia readers. That’s a nudge, not a conversion. But nudges applied consistently across millions of daily interactions add up. And they accumulate in a medium that most users treat as neutral.

Asking an AI to explain a historical event should produce something closer to an encyclopedia entry than an op-ed. For now, most people can’t tell the difference, and the AI isn’t volunteering that information.

Disclaimer: This article is based on a peer-reviewed study published in March 2026. The findings reflect results from a specific experiment using GPT-4o and two historical events. They may not apply to other AI models, platforms, or subject areas. The study does not conclude that AI tools are intentionally designed to influence political opinion.

Paper Notes

Limitations

The study covered only two historical events, a deliberate choice to keep the estimates precise, so it remains to be seen whether the findings extend across a wider range of subjects, time periods, or topics outside history. The degree of latent bias likely varies across different AI models, and researchers note that more work is needed to understand how a model’s built-in tendencies interact with user-directed prompting. The observed asymmetry between liberal and conservative framing effects may also be specific to GPT-4o’s training and not representative of other systems.

Funding and Disclosures

This research received no external funding. The authors declare no competing interests. The experiment was approved by Yale University’s Institutional Review Board (protocol #2000037333), and all participants provided informed consent before taking part. Data and coding scripts are publicly available at https://osf.io/zy9eu/overview.

Publication Details

Title: “How latent and prompting biases in AI-generated historical narratives influence opinions” | Authors: Matthew Shu (Yale University, Department of Statistics and Data Science); Daniel Karell (Yale University, Department of Sociology and Institution for Social and Policy Studies); Keitaro Okura (Yale University, Department of Sociology); Thomas R. Davidson (Rutgers University, Department of Sociology) | Journal: PNAS Nexus, Vol. 5, No. 3, 2026 | DOI: https://doi.org/10.1093/pnasnexus/pgag022 | Received: September 17, 2025. Accepted: December 10, 2025. Published: March 3, 2026. | Open Access: Published under the Creative Commons Attribution-NonCommercial License (CC BY-NC 4.0).