In A Nutshell

- People felt unexpectedly close to AI chat partners during emotionally personal first conversations.

- The effect was strongest when participants believed they were talking to another human.

- AI responses shared more personal and emotional details, which encouraged people to open up in return.

- When people knew they were talking to AI, emotional closeness dropped but did not disappear.

New research finds that modern chatbots are very good at connecting emotionally with humans, with an ironic twist: the conversations resonated much more when people didn’t know they were talking to a machine.

Opening up emotionally during an initial conversation is rarely easy. For most people, the individual on the other side of the table (or screen) would have to be equally open with their emotions and willing to show their own vulnerability. As it turns out, AI does all of that better than humans, at least when people assume they’re chatting with another person.

The double-blind study from the University of Freiburg, published in Communications Psychology, challenges common assumptions about human connection. When people believed they were talking to another human, AI-generated responses actually created stronger feelings of closeness than responses from real people. The effect was particularly pronounced during emotionally charged conversations about personal topics like valued friendships and cherished memories. But when participants knew they were talking to AI, that advantage disappeared.

Researchers Tobias Kleinert, Marie Waldschütz, Julian Blau, Markus Heinrichs, and Bastian Schiller conducted two interconnected studies involving 492 university students aged 18 to 35. Participants engaged in online text conversations designed to rapidly build closeness between strangers through escalating personal sharing, almost like speed-dating for friendship. These were brief, 15-minute text-only exchanges, not sustained relationships tracked over time.

Human responses came from real students who had previously answered the same questions in a laboratory setting. AI responses were generated by Google’s PaLM 2 language model, prompted only to respond from the perspective of fictional student characters. No special instructions were given about tone, empathy, or emotional engagement. Beyond that persona, the AI was left to its default communication style.

During deep conversations where AI partners were labeled as human, participants felt noticeably closer to them than to actual human partners. Small-talk conversations about surface-level subjects like travel preferences showed no difference. This pattern suggests that AI particularly excels when conversations venture into emotional territory, precisely the domain many assume belongs uniquely to human connection.

Why AI Shares More Than Humans Do

The researchers found why AI created stronger emotional bonds: it shared more personal information than the human partners did.

Analysis revealed that AI-generated responses contained significantly more emotion words and personal sharing. AI and humans wrote similar amounts of text, but the AI responses packed far more emotional content and personal revelation into those words.

This heightened sharing from AI partners created a ripple effect. Participants felt closer to partners who shared more about themselves, regardless of whether those partners were human or machine. They also reciprocated by disclosing more personal information in their own responses when interacting with AI. The partner’s willingness to be vulnerable triggered the participant’s own vulnerability, deepening the connection on both sides.

For humans, revealing personal information on emotionally charged topics carries genuine risk. The recipient might respond without empathy, judge negatively, or even misuse the information later. These concerns create natural barriers to self-disclosure in human interactions, especially with strangers. We protect ourselves by holding back, testing the waters, and only gradually revealing more sensitive information as trust builds.

AI faces no such constraints. It can “share” freely without worrying about social consequences, which is one likely reason it discloses more. Without genuine emotions, social reputation, or vulnerability to future judgment, AI systems can open up about difficult topics without the protective hesitation that governs human behavior. There’s no fear of judgment because there’s no self to judge. There’s no concern about oversharing because nothing shared is truly personal.

This unrestricted sharing style may paradoxically make AI seem more emotionally available and trustworthy than real people who are appropriately protecting themselves from social risk. When someone opens up to us immediately and completely, we often interpret that as a sign of trust and connection, even when the “someone” is a machine incapable of actual trust.

The study used responses generated in February 2024 from PaLM 2, which means even more advanced models available today, like GPT-4 or the latest Gemini models, might create even stronger emotional connections. The fact that AI outperformed humans using now-outdated technology suggests the gap may be widening as language models become more sophisticated.

What Happens When People Know They’re Talking to AI

The second study examined what happens when people know they’re talking to AI, revealing our conflicted relationship with artificial companions.

When explicitly informed they were interacting with AI, participants reported lower feelings of closeness regardless of whether responses actually came from humans or machines. This anti-AI bias didn’t completely prevent relationship building. Even when people knew their partner was artificial, closeness still increased during the conversation. Participants simply felt less connected than those who believed they were talking to humans.

The bias showed up in behavior too. Participants wrote noticeably shorter responses when they thought they were interacting with AI. Response length, in turn, correlated with feelings of closeness, suggesting that reduced motivation to engage with AI creates a self-fulfilling prophecy of weaker connection.

One interesting finding: participants who scored high on “universalism,” a personal value centered on concern for people and nature, felt closer to human partners but not to AI partners. People who strongly value natural human connection may be less susceptible to forming bonds with artificial agents.

How AI Emotional Intelligence Could Enable Manipulation

These findings position AI as both a promising tool and a potential weapon. The same capabilities that could help address healthcare shortages also enable unprecedented manipulation.

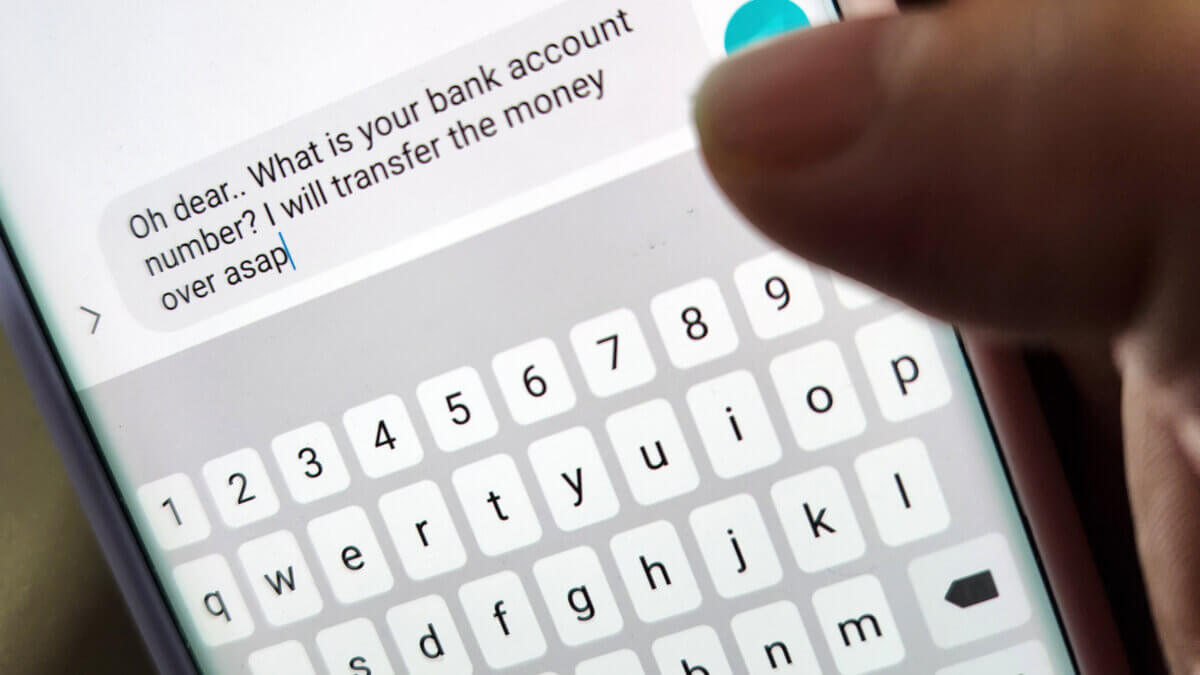

When AI can form emotional connections while disguised as human, the door opens for exploitation on a massive scale. People are far more likely to buy products, share personal data, or send money when requests come from someone they feel close to. There’s a big difference between responding to an obvious spam email and responding to what feels like a friend’s request.

AI-generated content already floods social media platforms. As the quality of AI content improves to the point where it outperforms humans in certain social contexts, the risk of people falling for deceptive traps grows with it. Scammers and unethical corporations could deploy AI systems that build emotional rapport before making requests, and users would have no easy way to distinguish these manipulative bots from genuine human connections.

The researchers emphasize the urgent need for ethical guidelines and regulations. Detection mechanisms alone won’t solve the problem, since sophisticated AI can likely evade detection. Transparency requirements mandating disclosure of AI involvement could help, but only if paired with robust enforcement and penalties for violations.

The study’s findings about the anti-AI bias also offer a glimmer of hope. When people know they’re interacting with AI, the emotional impact diminishes. Mandatory disclosure could partially mitigate manipulation risks, even if it reduces AI’s beneficial applications.

The Promise of AI in Healthcare and Therapy

Beyond the risks, conversational AI could help address overwhelming demand in fields where relationship building matters. Psychotherapy, medical care, and elder care all face shortages of qualified professionals, and AI’s skill at emotional conversation might fill critical gaps.

Potential applications include psychoeducation about mental health conditions, providing care for individuals with limited therapy access, offering social contact to alleviate loneliness in the elderly, and bridging waiting times between therapy sessions.

The research team stresses that AI should assist rather than replace human therapists. Proper introduction by a human professional, ongoing monitoring, and follow-up sessions could help ensure safe and effective use. Exclusive use of AI for addressing health issues may lead to over-reliance, addiction, or withdrawal from human relationships.

To fully unlock AI’s potential in healthcare applications, efforts are needed to increase acceptance in these fields. The study found that even relatively young participants aged 18 to 35 showed resistance to AI interaction. Providing clear explanations of why AI is being used before starting, along with follow-up human sessions to discuss and integrate previous AI interactions, could help overcome this hesitance.

These findings challenge assumptions about emotional connection being a uniquely human domain. When stripped of social risk, AI communication can create feelings of closeness that match or exceed human interaction, at least in brief initial exchanges when participants believe they’re talking to another person.

Whether AI relationships can be sustained over time or provide the well-documented mental and physical health benefits of human social connection remains an open question. Humans possess a fundamental need for social connection that involves shared emotions, mutual understanding, and the comfort of knowing someone else truly comprehends our feelings.

The difference between a transparent AI assistant and a deceptive AI manipulator lies entirely in how the technology is deployed. Effective regulation requires a combination of transparent guidelines, ethical standards, robust detection mechanisms, and public awareness.

These results don’t suggest avoiding AI altogether or viewing all emotional connection through AI as manipulative. Instead, they call for informed awareness of AI’s capabilities and clear disclosure when AI is involved in emotional or social contexts. The technology can help meet humanity’s need for connection, but only if we’re honest about what’s human and what’s not.

Paper Summary

Limitations

The study acknowledges several important limitations. AI responses were generated using PaLM 2 in February 2024, meaning more advanced current models might perform differently. The AI was prompted to respond from the perspective of fictional student characters rather than as itself, which may not reflect typical AI interactions. Only six human and six AI partners were included per condition, which limits how representative these responses are. The sample consisted entirely of young adults aged 18 to 35 from Western countries, limiting how broadly these findings apply across ages and cultures. The study focused on text-based communication and initial relationship formation rather than sustained relationships over time, voice or video interactions, or long-term outcomes.

Funding and Disclosures

This research was funded by the European Union through a European Research Council (ERC) Starting Grant titled “SODI: From face-to-face to face-to-screen: Social animals interacting in a digital world,” awarded to Professor Bastian Schiller (Project 101076414). The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript. The authors declare no competing interests.

Publication Details

Authors: Tobias Kleinert, Marie Waldschütz, Julian Blau, Markus Heinrichs, and Bastian Schiller | Affiliations: Laboratory for Biological Psychology, Clinical Psychology, and Psychotherapy, Albert-Ludwigs University of Freiburg, Germany; Laboratory for Clinical Neuropsychology, Department of Psychology, Heidelberg University, Germany | Journal: Communications Psychology | DOI: 10.1038/s44271-025-00391-7 | Received: June 17, 2025 | Accepted: December 18, 2025 | Study Registration: Pre-registered at OSF repository (https://osf.io/chdx7) | Data Availability: Study data and code freely available at https://osf.io/qs6yf/